A leading COVID-19 modeller answers our questions

Dr Sheetal Silal is leading efforts to model COVID-19 in South Africa. Image: S. Silal

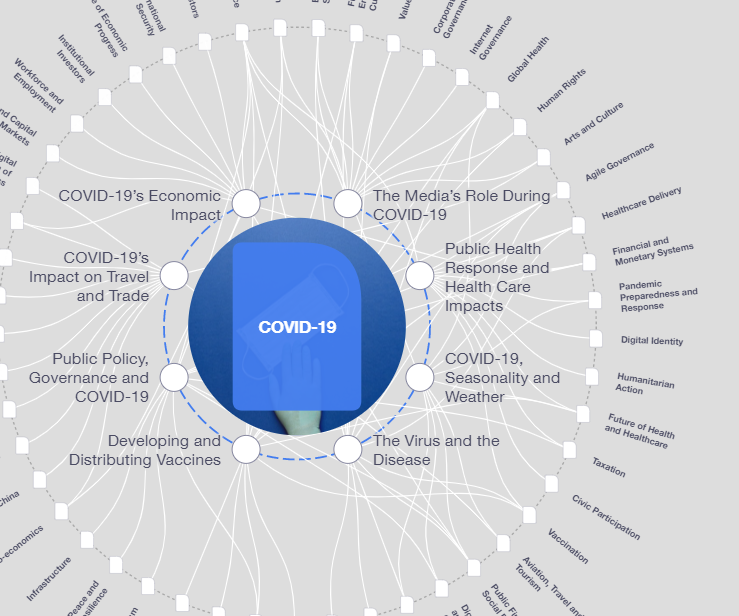


- Mathematical disease modelling is not a crystal ball - but it is a useful tool.

- Models tend to become more accurate as time passes and more data become available, but there are factors they cannot always account for.

- Transparency and public scrutiny of modelling are needed to build trust between decision-makers and the public, which is an important component of pandemic response.

A senior lecturer at the University of Cape Town, Dr Sheetal Silal has spent her career developing and using mathematical models to understand the spread of infectious diseases and how public health policies can most effectively control them.

It is therefore not surprising that when the South African government decided to enlist expert support to inform the country’s response to the COVID-19 pandemic, Dr Silal was one of the first experts they turned to.

Since March this year, she and colleagues in the South African COVID-19 Modelling Consortium have been using all the tools at their disposal to investigate how the disease might progress in the country and how each of these many scenarios would affect the population’s health.

We recently caught up with her to learn more about the work of modelling infectious diseases, the care needed to correctly interpret and communicate projections, and her hopes for how the field can continue to help improve the response to and management of COVID-19.

Thank you so much for taking the time to speak with us, Dr Silal. Let’s start with the basics of how you build an infectious disease model: what sort of data sources do you need?

A multi-disciplinary approach is the foundation for determining what goes into a model. The first step is always to consult widely - clinicians, intensivists, economists, biologists, epidemiologists, public health specialists et cetera. By consulting experts from different fields we can build a more complete picture of how the disease behaves and is being treated. To me this is a real benefit of mathematical modelling – we see ourselves as the synthesizers of information.

To be more specific about the kinds of information that we use, it’s data on the virus and the disease it causes – infectivity, recovery time, mortality rate and so on; the behaviour of people – how strictly do (or can) they observe physical distancing and lockdown measures; the capacity of the healthcare system – how many intensive care units (ICUs) are there, how many nurses and so on. We combine these data in ways that show how the virus could spread, what effect that would have on health infrastructure, and the potential impact of intervention strategies.

As we become more confident in the validity of our model – this happens as our predictions are validated by real-world data or as the body of scientific evidence our models are based on grows and strengthens – it’s possible to add more detail, such as variations according to a country’s regions and sub-regions, or the effect of existing health burdens like HIV and TB. We can also test hypotheses for ideas that are not yet well understood to see whether modelling suggests they’re worth investigating further.
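To make the idea of combining these inputs concrete, here is a minimal sketch of a textbook SIR (susceptible, infected, recovered) compartmental model. It is not the consortium's model, which the interview does not describe in detail. The function names and every parameter value (contact rate, recovery time, share of infections needing intensive care, number of ICU beds) are hypothetical and chosen only for illustration.

```python
def simulate_sir(population, initial_infected, contact_rate, recovery_days, days):
    """Daily-step SIR model. Returns a list of (S, I, R) tuples, one per day."""
    s = float(population - initial_infected)
    i = float(initial_infected)
    r = 0.0
    history = [(s, i, r)]
    for _ in range(days):
        # New infections depend on contacts between susceptible and infected people.
        new_infections = min(s, contact_rate * s * i / population)
        # On average, an infected person recovers after `recovery_days` days.
        new_recoveries = i / recovery_days
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        history.append((s, i, r))
    return history


def peak_icu_demand(history, icu_fraction):
    """Peak number of ICU beds needed, assuming a fixed share of infections need one."""
    return max(i for _, i, _ in history) * icu_fraction


# Hypothetical inputs, for illustration only (not estimates for any country).
ICU_BEDS = 1_000
baseline = simulate_sir(1_000_000, 10, contact_rate=0.3, recovery_days=7, days=365)
distancing = simulate_sir(1_000_000, 10, contact_rate=0.2, recovery_days=7, days=365)

for label, run in (("baseline", baseline), ("distancing", distancing)):
    demand = peak_icu_demand(run, icu_fraction=0.01)
    status = "exceeds" if demand > ICU_BEDS else "is within"
    print(f"{label}: peak ICU demand of {demand:,.0f} beds {status} capacity")
```

Lowering `contact_rate` stands in for an intervention such as physical distancing. Comparing the two runs shows how a single behavioural assumption changes the projected load on hospitals, which is the kind of question the answer above describes.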

With a new disease like COVID-19, is it challenging to get enough data?

The key has been to design our models so that they can quickly be updated to accommodate new information as it becomes available. Some details were well known before the pandemic, the number of ICU beds for example, but with a completely new disease and unprecedented lockdown policies there were a huge number of unknowns, which was a challenge. While we’ve learned a lot in the past months, we remain ready to adjust model parameters as more data emerges.

After synthesizing the data and running it through the model we can go back to the team of experts we consulted to show them the output and ask, “Does this make sense? What should be revised?” In this way it’s an iterative process with benefits running in both directions. For example, modelling was one of the tools used to establish presymptomatic transmission – where an infected person can infect others before developing any symptoms themselves – because the models couldn’t account for the number of confirmed cases when they assumed the virus was transmitted only by people with symptoms.

There were many countries already suffering badly from COVID-19 before the disease began to spread widely in South Africa. To what extent can other countries’ data help to inform your models?

It’s an interesting question and one that we’ve thought a lot about. The key to whether this works is how similar or dissimilar the countries are. Often small differences can lead to quite divergent results. I’ll give you some examples that are disease-related and health system-related.

Looking at COVID-19 we know that severity of illness and transmission are affected by age. Many African countries have far younger populations than European countries. Therefore, the spread of infection, its severity and fatality rate should not necessarily be transplanted from European data into an African context without first taking age into account.

Another example is testing. Countries are employing different testing strategies for different reasons. A country with enough resources to perform mass testing can get a reliable measure of the virus’s presence and how that varies geographically. Countries with limited resources may prioritize testing only for the elderly, the severely ill, or contacts of positive cases. Because of these differences in approach, it’s very difficult to say, “European countries have followed a certain increasing case trajectory, so we should expect the same to happen in our country,” because the numbers all mean different things.

So, while it is useful to study what is going on in other countries, one cannot transplant trends of an epidemic from one country to another without interrogating how the contexts differ.
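The point about age structure can be illustrated with a small calculation: the overall fatality rate of a population is the average of the age-specific rates, weighted by each age group's share of the population. The sketch below is an illustration under stated assumptions, not a method described in the interview. The age bands, the fatality rates and both population profiles are invented for the example and are not estimates for any real country.

```python
def population_fatality_rate(age_shares, fatality_by_age):
    """Average the age-specific fatality rates, weighted by population share."""
    if set(age_shares) != set(fatality_by_age):
        raise ValueError("age groups must match")
    if abs(sum(age_shares.values()) - 1.0) > 1e-6:
        raise ValueError("age shares must sum to 1")
    return sum(age_shares[group] * fatality_by_age[group] for group in age_shares)


# All numbers below are hypothetical and for illustration only.
fatality_by_age = {"0-39": 0.001, "40-64": 0.01, "65+": 0.08}
older_population = {"0-39": 0.45, "40-64": 0.35, "65+": 0.20}
younger_population = {"0-39": 0.75, "40-64": 0.20, "65+": 0.05}

older_rate = population_fatality_rate(older_population, fatality_by_age)
younger_rate = population_fatality_rate(younger_population, fatality_by_age)
print(f"older population:   {older_rate:.3%}")
print(f"younger population: {younger_rate:.3%}")
```

With identical age-specific rates, the younger population ends up with a much lower overall rate, which is why a headline figure from one country cannot simply be applied to another.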

What are some other examples of where you as a modeller need to be especially careful to ensure you’re interpreting the data correctly?

I would argue that this is true for every data source going into a model but there are some that give us more trouble than others. These can roughly be divided into two main kinds of “blind spots”: those that exist now but can be corrected in the future when more data is available, and those that can almost never be accounted for.

An example of the first is the current set of questions around inherent immunity in populations. Is cross-immunity from other coronaviruses, or some other mechanism, contributing to the lower infection rates of some countries? For now, we can include these ideas in our models as hypotheses via specific scenario testing – if inherent immunity exists in X per cent of the population, how does the spread of the virus or load on hospitals change? But I should emphasise that this sort of scenario testing is done to build up a more complete picture of the range of possible futures rather than to inform policy decisions. Policy decisions must be based far more on information for which clear data exist.

An example of the other kind of blind spot is human behaviour. I know there are tech firms out there that disagree with me, but I do not believe you can precisely capture human emotion and behaviour in an equation, least of all in an unprecedented situation such as the current pandemic. This is for several reasons, from the randomness early in the outbreak – super-spreader events often come down to chance, for example – to the divergence we are seeing in populations’ adherence to lockdown measures. We try to account for this and run multiple scenarios, but it remains one of the largest unknowns.
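The scenario testing described above ("if inherent immunity exists in X per cent of the population") can be sketched with a simple SIR-style calculation in which a share of the population starts out immune. This is an illustration under stated assumptions, not the consortium's method. The function name, the contact rate, the recovery time and the immunity levels are all hypothetical.

```python
def peak_infections(population, initial_infected, contact_rate,
                    recovery_days, immune_fraction, days=365):
    """Peak number of simultaneous infections in a daily-step SIR-style model
    where `immune_fraction` of the population is immune from the start."""
    susceptible = population * (1.0 - immune_fraction) - initial_infected
    infected = float(initial_infected)
    peak = infected
    for _ in range(days):
        new_infections = min(
            susceptible, contact_rate * susceptible * infected / population
        )
        new_recoveries = infected / recovery_days
        susceptible -= new_infections
        infected += new_infections - new_recoveries
        peak = max(peak, infected)
    return peak


# Hypothetical scenarios: what if X per cent of people were already immune?
scenarios = {
    x: peak_infections(1_000_000, 10, contact_rate=0.3,
                       recovery_days=7, immune_fraction=x)
    for x in (0.0, 0.1, 0.2, 0.3)
}
for x, peak in scenarios.items():
    print(f"immune fraction {x:.0%}: peak of {peak:,.0f} simultaneous infections")
```

Each run answers a "what if" question rather than making a forecast, in line with the caution above that hypotheses of this kind widen the picture of possible futures but are not a basis for policy on their own.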

Can you say a bit more about how people seem to be responding differently to lockdowns?

Sure. It’s naturally still an evolving situation, but it seems that countries that went into lockdown later, after the virus was well seeded in the population, have seen closer adherence to government guidelines to remain at home. This could be attributed to an emotional response: people in those countries were more likely to have friends or family members falling ill or dying. Fear of the virus was, perhaps, a strong motivator to stay at home.

On the other hand, if you started lockdown early with a focus on containing the spread as much as possible then the population may not take it as seriously because the effects of the virus were never fully seen. This is the widely discussed ‘paradox of preparation’ where the only way to get ahead of the curve is to make decisions that at the time (and in retrospect) seem like over-reactions.

Once you have gathered all the necessary sources of data, and built the model – how do you use it to help inform the government’s response?

The first thing to say here is that mathematical disease modelling is not a crystal ball. It doesn’t tell you the future but rather is a tool that helps to understand the range of possible futures, given a set of assumptions. Our purpose is to provide this information to government in a way that is useful when making policy decisions.

It’s quite clear to me, especially in the context of South Africa as a low- and middle-income country (LMIC), that these policy decisions cannot be purely health-based, or purely model-based. They must integrate economic and social factors as well.

No government has an unlimited health budget, and particularly in LMICs it’s important to consider that any money spent on tackling COVID-19 is then not available for other worthy aims. Governments have tough calls to make when trying to balance all this out, and we as modellers are doing our best to ensure they are well informed about the health side of this equation.

Is there anything to be said for trying to integrate social and economic impacts directly in your health-focused modelling?

It’s possible. We do this for other established diseases, and I’m sure there are groups around the world doing this for COVID-19. The irony is that for the countries where the socio-economic impacts of health policies like lockdowns may matter the most – LMICs – there is often little or no data for these impacts to be usefully included. Combining this with how much we still have to learn about COVID-19, I think a good approach is to focus on the disease and complement the health-focused models by working with economists on cost-effectiveness and macroeconomic analyses, and with social scientists on traditional methods of information collection such as surveys and interviews, to factor in the socio-economic impacts.

What’s your assessment of the general public’s response to your work – is the role of modelling well understood and communicated?

In the last three weeks or so since we released our reports to the public, there has been a lot of interest in the results and methods used. It’s very clear that government transparency is a common factor in the response of countries that have managed the virus well so far, so I definitely agree that it is in the public interest to have our work seen and scrutinised.

On the other hand, not everyone is a disease modeller and, presented out of context, forecasts of the number of infections to come or the burden on hospitals can contribute to the stress many people are currently experiencing. With this being the first time we’re seeing major public interest in the field, we see it as our responsibility to work especially hard to communicate how models should be interpreted – that each forecast comes with known uncertainties and caveats, that this is an iterative process undergoing near constant revision as more data come to light, and that understanding models requires more nuance than newspaper headlines often permit. We feel that this is going well so far and are grateful to many of the media outlets for taking a measured approach to reporting on our modelling work.

Looking to the future, how is the experience of responding to COVID-19 changing the field of infectious disease modelling?

Something that emerged quickly was the formation of many new international collaborations and networks. These are welcome, and I hope they continue even after we have overcome the pandemic, as they help us to distribute information and techniques. Should another outbreak occur, they will also help us respond more effectively. Being in the public eye has also prompted a lot of reflection on our responsibility to engage with the public, and I hope the efforts that have started in this area also continue.

In terms of the work of disease modelling itself, this field has been developing for over 100 years and despite COVID-19 being a shock to almost every system, it hasn’t changed the science behind our work. There will certainly be new insights generated but these will be evolutions of the field rather than revolutions.

A positive consequence of the pandemic is renewed interest in and a rise in popularity of mathematical disease modelling. In my travels throughout the African continent, I have found that we have very talented mathematicians and students of mathematics. In the last few months, I have received numerous requests for postgraduate study in the field. This talent needs to be harnessed and channelled through funding and research projects to support our continent’s governments to control the diseases that continue to devastate our people and to respond locally and effectively to future outbreaks.


License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
