What this Nobel Prize-winning breakthrough means for you

Image: Dr Claude Bagnis, head of the molecular haematology lab at the Etablissement Francais du Sang (French Blood Institution) examines cells by fluorescence with an electron microscope in his laboratory in Marseille. REUTERS/Jean-Paul Pelissier

William E. Moerner

Harry S. Mosher Professor in Chemistry, Department of Chemistry, Stanford University

Get involved with our crowdsourced digital platform to deliver impact at scale

Stay up to date:

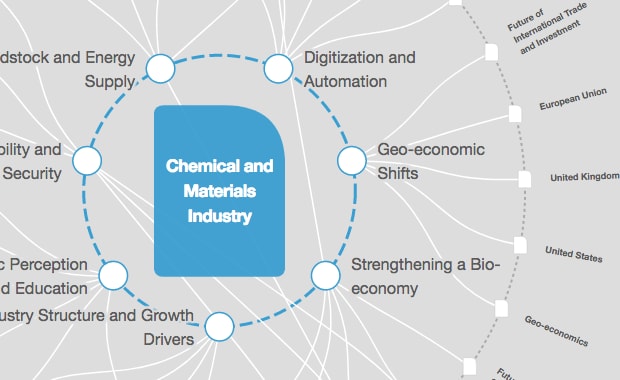

Chemical and Advanced Materials

In 2014, along with Eric Betzig and Stefan Hell, I received the Nobel Prize in Chemistry for something called super-resolution fluorescence microscopy. It sounds like a bit of a mouthful, but let me explain why it matters to you more than you might think – and what our path to the breakthrough reveals about the nature of scientific research today.

Good things come in small packages

Before microscopes, the smallest thing we could see was about the width of a human hair. Their invention literally opened up whole new worlds for scientific research. But there were still limits. Almost everything in our world is made of molecules. And molecules are tiny – just a few nanometres in size. They are so small that when viewed through a microscope, the resulting image looks blurry and out of focus, no matter how expensive the microscope, due to the “diffraction limit” of light.

What exactly is the diffraction limit? When scientists want to observe something, we often attach fluorescent (light-emitting) molecules only to the structure of interest, so that the light we see comes only from the object we want to image. But here’s the problem: the wavelength of visible light is roughly 500 nanometres, much bigger than the molecular structures we want to see. A microscope cannot focus light into a spot much smaller than about half its wavelength, so the image of a single 1-nanometre emitting molecule looks far, far larger. In fact, it looks like something 250 nanometres in size!
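The 250-nanometre figure follows from a standard rule of thumb, the Abbe limit: the smallest separation a lens can resolve is roughly the wavelength divided by twice the numerical aperture (NA) of the objective. The short sketch below just does that arithmetic. The function name `abbe_limit_nm` is hypothetical, and the NA of 1.0 is an assumption chosen to reproduce the round number in the text; oil-immersion objectives reach about 1.4, which gives a somewhat smaller spot.

```python
def abbe_limit_nm(wavelength_nm: float, numerical_aperture: float) -> float:
    """Approximate smallest resolvable distance, d = wavelength / (2 * NA)."""
    if wavelength_nm <= 0 or numerical_aperture <= 0:
        raise ValueError("wavelength and numerical aperture must be positive")
    return wavelength_nm / (2.0 * numerical_aperture)


MOLECULE_SIZE_NM = 1.0  # a single emitting molecule, as in the text
WAVELENGTH_NM = 500.0   # visible light, as in the text
ASSUMED_NA = 1.0        # assumption: chosen to match the article's round figure

blur_nm = abbe_limit_nm(WAVELENGTH_NM, ASSUMED_NA)
magnified = blur_nm / MOLECULE_SIZE_NM  # how many times larger the molecule appears

print(f"apparent size: {blur_nm:.0f} nm, about {magnified:.0f}x the molecule itself")
# prints: apparent size: 250 nm, about 250x the molecule itself
```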

What this meant was that when we attempted to view molecules or structures of only a few nanometres in size using microscopes, we got very fuzzy pictures because the fuzzballs from the molecules would all overlap. That was a problem. The main goal of science is to understand how things work. For example, in cell biology, we want to understand in every exquisite detail exactly how the tiny “nanomachines” inside cells work. If we learn more about how normal cells work, we can apply the same methods to diseased cells, and this can ultimately help us correct things when they go wrong. But how can we do that if we can’t even see the things we want to study? This problem has plagued optical microscopy since its beginnings.
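Why overlapping fuzzballs defeat an ordinary microscope can be sketched numerically. Assume each blurred spot is a Gaussian brightness profile whose full width at half maximum is the 250 nm from the text (an approximation; the true spot is an Airy pattern). Add two such spots together and count how many peaks the combined profile shows. The helper names are hypothetical and the model is one-dimensional.

```python
import math

FWHM_NM = 250.0  # apparent spot size from the text
SIGMA_NM = FWHM_NM / (2.0 * math.sqrt(2.0 * math.log(2.0)))  # Gaussian width, ~106 nm


def profile(x_nm: float, separation_nm: float) -> float:
    """Combined brightness of two equal emitters at -separation/2 and +separation/2."""
    half = separation_nm / 2.0
    return (math.exp(-((x_nm - half) ** 2) / (2.0 * SIGMA_NM ** 2))
            + math.exp(-((x_nm + half) ** 2) / (2.0 * SIGMA_NM ** 2)))


def count_peaks(separation_nm: float, half_width_nm: int = 600) -> int:
    """Count local maxima of the combined profile, sampled every 1 nm."""
    xs = range(-half_width_nm, half_width_nm + 1)
    ys = [profile(x, separation_nm) for x in xs]
    return sum(1 for i in range(1, len(ys) - 1) if ys[i - 1] < ys[i] > ys[i + 1])


for d in (5.0, 50.0, 150.0, 400.0):
    print(f"molecules {d:.0f} nm apart -> {count_peaks(d)} peak(s)")
```

Under these assumptions the 5, 50 and 150 nm pairs each merge into a single peak, and only the 400 nm pair shows two. For two equal Gaussians, a dip between them appears only once the separation exceeds 2σ (about 212 nm here).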

Turning microscopes into nanoscopes

In 1989, in my laboratory at IBM Research, my postdoc Lothar Kador and I were the first to detect single molecules optically. Our first experiment was fairly esoteric in that it required extremely low temperatures near absolute zero, and we were basically exploring the ultimate single-molecule limit because it had not been done before. But we realized our work was going to be very important when we observed that these molecules were doing some amazing things. Individual molecules would jump from one colour or wavelength to another! Or they would turn on and off, and blink in interesting ways.

It is because molecules do this – have an individual on/off behaviour – that we were able to start chipping away at a long-held belief in the scientific community: that we would never be able to observe things smaller than around half the wavelength of light. Microscopes could now become nanoscopes.

A solution: think fireflies

So how did this initial discovery pave the way for nanoscopes that could bring even tiny molecules into sharp focus?

Because, as Eric Betzig and other scientists realized in 2005, this on/off behaviour meant we could remove the fuzziness. The trick is to choose emitting molecules that have two states, an “on” state in which they emit light and an “off” state in which they do not, and, most importantly, to image them at different times. Imagine we place our molecules along the structure we want to image and turn only a few “on” at any one time. We then film them. In each frame of the movie, the sample looks like stars in the sky, and because the spots are well separated, we can determine the position of each one very precisely. Over time, we accumulate a long list of molecule positions, and then we use computer graphics to show them all at once, like pointillism in art. The structure we wanted to see is now much sharper than was possible before.
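A small simulation can illustrate the on/off procedure. It is a simplified sketch, not the method of any particular lab or instrument. Molecules are scattered along a hypothetical straight filament, and a few random ones are switched on in each frame. Each “on” molecule’s light is modelled as photons spread by a Gaussian blur matching the 250 nm spot from the text, and its position is estimated as the average photon position. The photon count per blink and all function names are assumptions. The sketch also assumes that sparse activation keeps the spots in each frame far enough apart to be analysed separately, so it does not model overlapping spots.

```python
import math
import random
import statistics

SIGMA_NM = 250.0 / (2.0 * math.sqrt(2.0 * math.log(2.0)))  # Gaussian blur for a 250 nm spot
PHOTONS_PER_BLINK = 1000  # assumption: photons detected each time a molecule is on


def localize(true_xy, rng):
    """Estimate one emitter's position as the mean of its blurred photon hits."""
    x0, y0 = true_xy
    xs = [rng.gauss(x0, SIGMA_NM) for _ in range(PHOTONS_PER_BLINK)]
    ys = [rng.gauss(y0, SIGMA_NM) for _ in range(PHOTONS_PER_BLINK)]
    return statistics.fmean(xs), statistics.fmean(ys)


def simulate(n_molecules=200, frames=100, on_per_frame=3, length_nm=2000.0, seed=1):
    """Return the true label positions and the accumulated list of localizations."""
    rng = random.Random(seed)
    # Hypothetical structure: labels along a straight filament lying on the x axis.
    molecules = [(rng.uniform(0.0, length_nm), 0.0) for _ in range(n_molecules)]
    # Each entry is (molecule index, estimated (x, y)); the index is kept only to score errors.
    localizations = []
    for _ in range(frames):
        # Switch on only a sparse random subset of molecules in this frame.
        for idx in rng.sample(range(n_molecules), on_per_frame):
            localizations.append((idx, localize(molecules[idx], rng)))
    return molecules, localizations


molecules, localizations = simulate()
errors = [math.dist(molecules[i], est) for i, est in localizations]
rms_error = math.sqrt(statistics.fmean(e * e for e in errors))
print(f"{len(localizations)} localizations, RMS error {rms_error:.1f} nm "
      f"vs blur sigma {SIGMA_NM:.0f} nm")
```

Plotting every estimated (x, y) at once gives the pointillist picture. The points hug the filament to within a few nanometres, even though each raw spot is roughly 250 nm across.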

As an approximate analogy, suppose you wanted to see the branches of a tree, but it is dark. What you could do is place fireflies along the branches of the tree, then just let them blink randomly on and off. Every time you see one turn on, you carefully measure its exact position. Even if you use an out-of-focus camera, you can still find the centre of each firefly’s spot. Then you take all the positions you found, show them all at once, and the branches appear!
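The firefly trick works because the centre of a blurred spot can be located far more precisely than the spot is wide. The following minimal sketch assumes a noiseless camera with hypothetical 100 nm pixels and a Gaussian-shaped spot. It renders one blurred spot onto a 15 × 15 pixel grid and recovers the emitter’s position as the brightness-weighted average (centroid) of the pixel coordinates. Real analysis software typically fits a model of the spot and must cope with noise and background, and this sketch ignores both.

```python
import math

PIXEL_NM = 100.0  # assumed camera pixel size in the sample plane
N_PIXELS = 15     # assumed 15 x 15 pixel region around the spot
SIGMA_NM = 250.0 / (2.0 * math.sqrt(2.0 * math.log(2.0)))  # blur for a 250 nm spot


def render_spot(x0_nm, y0_nm, sigma_nm=SIGMA_NM):
    """Brightness at each pixel centre, image[row][col], for one blurred emitter."""
    return [[math.exp(-((col * PIXEL_NM - x0_nm) ** 2 + (row * PIXEL_NM - y0_nm) ** 2)
                      / (2.0 * sigma_nm ** 2))
             for col in range(N_PIXELS)]
            for row in range(N_PIXELS)]


def centroid(image):
    """Brightness-weighted mean of the pixel-centre coordinates, in nm."""
    total = sum(sum(row) for row in image)
    x = sum(v * col * PIXEL_NM for row in image for col, v in enumerate(row)) / total
    y = sum(v * r * PIXEL_NM for r, row in enumerate(image) for v in row) / total
    return x, y


true_x, true_y = 712.0, 688.0  # a position that falls between pixel centres
est_x, est_y = centroid(render_spot(true_x, true_y))
print(f"true ({true_x}, {true_y}) nm, estimated ({est_x:.1f}, {est_y:.1f}) nm")

# A more out-of-focus spot (twice as blurry) still gives nearly the same centre.
blurry_x, blurry_y = centroid(render_spot(true_x, true_y, sigma_nm=2 * SIGMA_NM))
print(f"twice as blurry: estimated ({blurry_x:.1f}, {blurry_y:.1f}) nm")
```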

Exploring the unexplored

The implications of this breakthrough are enormous: the resolution of microscopes can now be improved by five times, 10 times, even 100 times, so that extremely tiny structures can be observed. Scientists have already used the technology to see cell structures that were never visible before, from microtubules to the protein aggregates that occur in Parkinson’s, Alzheimer’s and Huntington’s diseases. Much remains to be done to refine this idea and apply it in fields ranging from biology to materials science.
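One simplified way to see where improvements of five, 10 or 100 times can come from uses an idealized model. Assume background light, camera pixel size and the size of the fluorescent label can all be ignored. Then the uncertainty in a single molecule’s position shrinks with the number of photons N collected from it, relative to the blur width s (standard deviation) of its spot:

```latex
\sigma_{\mathrm{loc}} \approx \frac{s}{\sqrt{N}},
\qquad
\frac{s}{\sigma_{\mathrm{loc}}} \approx \sqrt{N}
\quad\Longrightarrow\quad
N \approx 25,\ 100,\ 10\,000 \;\Rightarrow\; 5\times,\ 10\times,\ 100\times
```

With s ≈ 106 nm (a Gaussian whose full width at half maximum is the 250 nm spot), 100 photons would pin the centre down to roughly 11 nm, and 10,000 photons to roughly 1 nm. In practice, how densely the structure is labelled and how much background light is present also limit what can be seen, so these figures are illustrative rather than measured.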

I had no idea, back in 1989, that the work I was doing would eventually lead to a breakthrough that opened up whole new research areas. But without it, and without the funding that supports this type of “what if” basic research, we would not be where we are today. Exploring the unexplored can lead to life-changing applications that cannot be predicted at the start.

Author: William E. Moerner is a Professor in Chemistry in the Department of Chemistry at Stanford University; he won the 2014 Nobel Prize in Chemistry. He is participating in the World Economic Forum’s Annual Meeting in Davos.


License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
