Could AI make doctors obsolete?

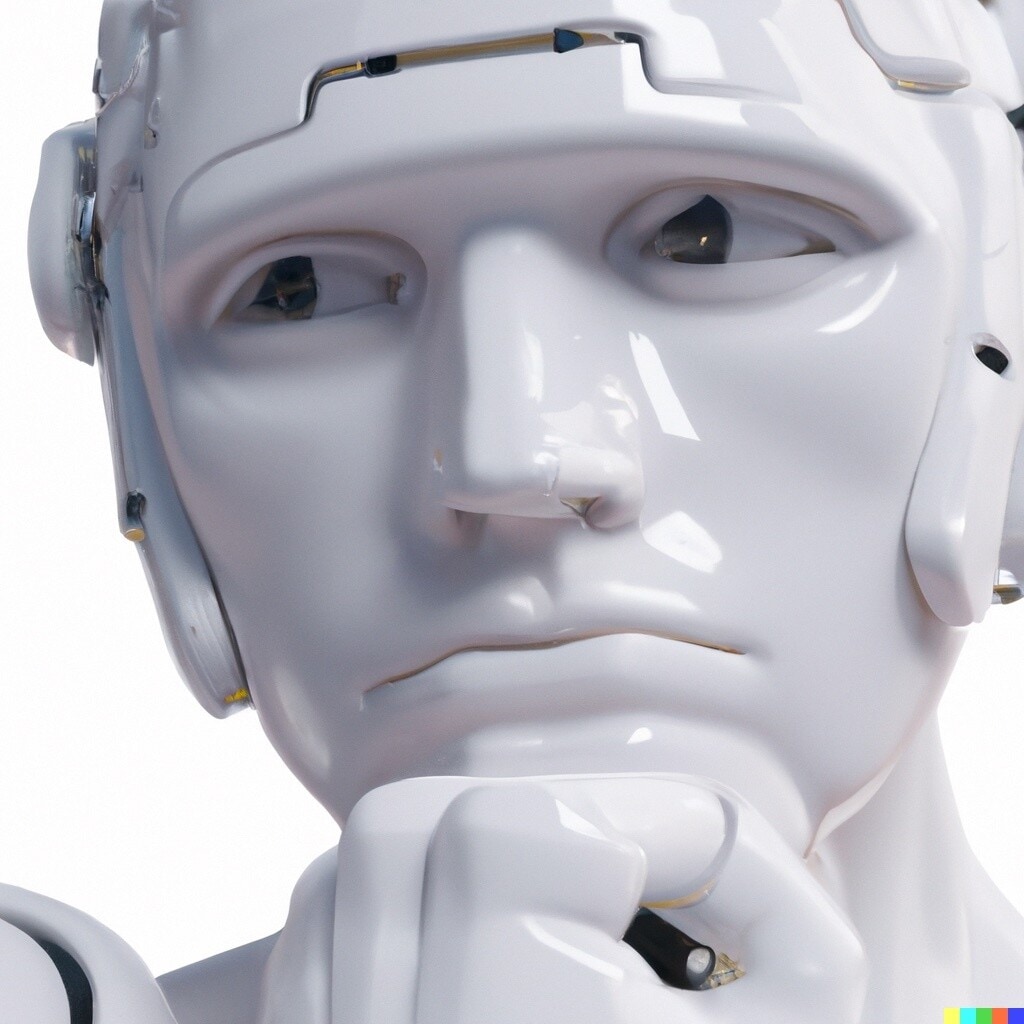

Health and disease are strongly influenced by emotional, subjective and social factors. Image: REUTERS/Ralph Orlowski

No, says Vanessa Rampton, because health and disease are strongly influenced by emotional, subjective and social factors that are difficult for machines to access.

Machines will increasingly be able to perform tasks that were previously the prerogative of human doctors, including diagnosis, treatment, and prognosis. Although they will augment the capacities of physicians, machines will never replace them entirely. In particular, physicians will remain better at dealing with the patient as a whole person, which involves knowledge of social relationships and normativity. As the Harvard professor Francis Peabody observed in 1927, the task of the doctor is to transform "that case of mitral stenosis in the second bed on the left" into the complex problem of "Henry Jones, lying awake nights while he worries about his wife and children."1

Humans can complete this transformation because they can relate to the patient as a fellow person and can gain holistic knowledge of the patient’s illness as related to his or her life. Such knowledge involves ideals such as trust, respect, courage, and responsibility that are not easily accessible to machines.

Illness is an ill-defined problem

Technical knowledge cannot entirely describe the sickness situation of any single patient. A deliberative patient-physician relationship characterised by associative and lateral thinking is important for healing, particularly for complex conditions and when there is a high risk of adverse effects, because individual patients’ preferences differ.2 There are no algorithms for such situations, which change depending on emotions, non-verbal communication, values, personal preferences, prevailing social circumstances, and so on. Those working at the cutting edge of AI in medicine acknowledge that AI approaches are not designed to replace human doctors entirely.3

The use of AI in medicine, predicated on the belief that symptoms are measurable, reaches its limits when confronted with the emotional, social, and non-quantifiable factors that contribute to illness. These factors are important: symptoms with no identified physiological cause are the fifth most common reason US patients visit doctors.4 Questions like “Why me?” and “Why now?” matter to patients: contributions from narrative ethics show that patients benefit when physicians can interpret the meaning they ascribe to different aspects of their lives.5 It can be crucial for patients to feel that they have been heard by someone who understands the seriousness of the problem and whom they can trust.6

Linked to this is a more fundamental insight: as Peabody put it, healing illness requires far more than “healing specific body parts.”1 By definition illness has a subjective aspect that cannot be “cured” by a technological intervention independently of its human context.7 Curing an organism of a disease is not the same as establishing its health, as health refers to a complex state of affairs that includes individual experience: being healthy implies feeling healthy. Robots cannot understand our concern with relating illness to the task of living a life, a concern bound up with the human context and the subjective factors of disease.

Medicine is an art

Throughout history, the therapeutic effect of doctor-patient relationships has been acknowledged, irrespective of any treatment prescribed.8 This is because the physician-patient relationship is a relationship between mortal beings vulnerable to illness and death. Computers aren’t able to care for patients in the sense of showing devotion or concern for the other as a person, because they are not people and do not care about anything.

Sophisticated robots might show empathy as a matter of form, just as humans might behave nicely in social situations yet remain emotionally disengaged because they are only performing a social role.9 But concern – like caring and respect – is a behaviour exhibited by a person who shares common ground with another person. Such relationships can be illustrated by friendship: B cannot be a friend of A if A is not a friend of B’s.10

A likely future scenario is one in which AI systems augment knowledge production and processing, while doctors help patients find an equilibrium that acknowledges the limitations of the human condition, something that is inaccessible to AI. Coping with illness often does not include curing illness, and here doctors are irreplaceable.

References

1 Peabody F: The Care of the Patient. JAMA 1927, 88: 878, doi: 10.1001/jama.1927.02680380001001

2 Katz J: The Silent World of Doctor and Patient. Yale University Press 2002.

3 Interview with Joachim Buhmann. Ich fühle mich von künstlicher Intelligenz überhaupt nicht bedroht. Forbes, 9 February 2017

4 Creed F, Henningsen P, Fink P: Medically unexplained symptoms, somatisation and bodily distress. Developing better clinical services. Cambridge University Press 2011

5 Fioretti C, Mazzocco K, Riva S, Oliveri S, Masiero M, Pravettoni G: Research studies on patients’ illness experience using the Narrative Medicine approach: a systematic review. BMJ Open 2016, 6: e011220, doi: 10.1136/bmjopen-2016-011220

6 Gawande A: Tell me where it hurts. New Yorker, 23 January 2018, 23: 36

7 Hofmann B: Disease, illness, and sickness. In: Solomon M, Simon JR, Kincaid H, eds. The Routledge Companion to Philosophy of Medicine 2017: 16

8 Di Blasi Z, Harkness E, Ernst E, Georgiou A, Kleijnen J: Influence of context effects on health outcomes: a systematic review. Lancet 2001, 357: 757, doi: 10.1016/S0140-6736(00)04169-6

9 Wingert L: Unsere Moral und die humane Lebensform. In: Sturma D, ed. Ethik und Natur. Suhrkamp 2019

10 Wingert L: Gemeinsinn und Moral: Grundzüge einer intersubjektivistischen Moralkonzeption. Suhrkamp 1993

License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
