Technology Convergence: The New Logic for Competitive Advantage

Section 2: From combinations to convergence dynamics

Technology convergence is creating the same outcomes across industries.

2.1 Patterns of value creation

Meaningful convergence begins with a value gap, whether it is a constraint that limits performance or an opportunity created by new capabilities. Technology combinations are the enabling move. Sometimes they arrive as an integrated bundle, but more often they form through incremental layering inside enterprise operating environments, as organizations add complementary technologies over time until a new capability becomes material. At that point, the relevant question is no longer which technologies are present, but what new capabilities they set in motion as they scale.

The research followed five technology combinations across five diverse industries to observe what changes as they scale. Despite the differences between sectors, a consistent set of mechanisms recurred. Bottlenecks shift, often from singular constraints to hybrid digital-physical choke points. Integration becomes the limiting factor across teams, processes and ecosystems. Value expands, reconfiguring where advantage concentrates along the value chain. Together, these mechanisms yield a diagnostic that helps organizations assess what must be true for a combination to scale and where advantage is likely to concentrate if it does.

1. Shifting bottlenecks

Every economic system is shaped by its constraints, such as what is scarce, what feels risky, what is hard to coordinate or what takes time. These constraints shape where value accumulates and how competitive dynamics unfold. In practice, they often manifest in cost, talent availability and time. Businesses and markets organize themselves around these limitations, and strategies emerge to manage or exploit them.

When technologies enter a system, those constraints can shift, easing some bottlenecks but creating others in different parts of the process. This shifting becomes more noticeable when technologies are combined. When technologies build on one another, several limits can move at once and they tend to move faster. Each combination opens up new possibilities, which then become inputs for further innovation, creating a chain reaction of progress.

For example, battery performance was historically constrained by the use of heavy materials and inefficient chemistries. That bottleneck was relieved when advances in lightweight materials combined with improved battery chemistry, making lithium‑ion batteries practical. This shift unlocked an explosion of innovation in small devices, from smartphones to wearables, because designers could suddenly rely on compact energy-dense power sources that enabled new product categories. As the old constraints fell away, new ones emerged, including challenges in thermal management, charging speed and critical material supply chains.

Understanding constraint dynamics is critical for opportunity assessment because it predicts which technologies will scale or stall. Technology scales when it removes a key bottleneck without creating a new problem that is just as limiting. In the case of combinatorial technologies, this is often about digital and physical integrations, and managing their implications across people, processes and partners. If these orchestrations prove just as limiting as previous constraints, scaling becomes less likely.
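The scaling condition described above can be illustrated with a toy model. The sketch below is an assumption-laden simplification, not a method from the research: it treats each constraint as a single capacity number, takes the lowest capacity as the system bottleneck and says a technology "scales" only if the bottleneck capacity rises after the change. All names and figures (`Constraint`, `apply_technology`, the capacities) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraint:
    """One limiting factor in a value chain (illustrative names and numbers)."""
    name: str
    capacity: float  # output per period that this constraint allows


def bottleneck(constraints):
    """Return the constraint with the lowest capacity; it caps system output."""
    return min(constraints, key=lambda c: c.capacity)


def apply_technology(constraints, relieved, new_capacity, introduced=()):
    """Model a technology that lifts one constraint and may introduce new ones."""
    updated = [
        Constraint(c.name, new_capacity) if c.name == relieved else c
        for c in constraints
    ]
    return updated + list(introduced)


def scales(before, after):
    """In this toy model, a technology scales only if system output rises."""
    return bottleneck(after).capacity > bottleneck(before).capacity


# Hypothetical capacities, loosely following the battery example in the text.
before = [
    Constraint("energy density", 10.0),
    Constraint("device design", 40.0),
]

# Case 1: the old bottleneck is relieved and a milder new one appears.
after = apply_technology(
    before,
    relieved="energy density",
    new_capacity=100.0,
    introduced=[Constraint("thermal management", 30.0)],
)

# Case 2: the new constraint is even tighter than the old one.
stalled = apply_technology(
    before,
    relieved="energy density",
    new_capacity=100.0,
    introduced=[Constraint("integration effort", 8.0)],
)

print(bottleneck(before).name, "->", bottleneck(after).name)
print("case 1 scales:", scales(before, after))
print("case 2 scales:", scales(before, stalled))
```

In the first case the bottleneck moves to thermal management but overall capacity still rises, so the combination scales. In the second, integration effort is more limiting than the constraint it replaced, so scaling stalls.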

Considerations

- Which constraints define my industry today?

- How could emerging technologies reshape these constraints?

- If a new technology combination becomes feasible, what new challenges could emerge, and how severe might they become under different scenarios?

2. Integrating technologies

Successful adoption increasingly depends on how well a company integrates the technology across its people, processes and ecosystem. Unlike single technologies, technology combinations create change all at once, cutting across old and new systems, spanning multiple teams and demanding diverse skills. Companies cannot modernize everything end to end in a single sequence; instead, they layer new coordination tools onto existing assets, processes and governance, adapting workflows where new capabilities change system logic.

The smartphone illustrates this. The problem it addressed was fragmented mobile capabilities: computing, communication, navigation and media each required a separate device, each with its own interface, data plan and charging cable. Solving that problem meant combining technologies from industries that had never coordinated before. The smartphone didn’t just plug into new systems – it had to run on top of existing telecom networks while layering in new capabilities such as camera support, app ecosystems and new screen technologies. This shift forced an industry-wide change. Network operators had to change how they managed capacity and priced data. Phone makers had to add software teams to hardware-led organizations. New roles, such as app developers, emerged, and existing teams had to coordinate with them. The device was widely adopted not only because of its features, but also because companies orchestrated the systems around it.

Considerations

- How can the technology be adapted to better fit the organization?

- How could the organization evolve to take full advantage of the technology?

- What new skills, roles or partnerships are required to deliver the technology combination?

3. Expanding value

As combinatorial technologies solve problems that individual technologies could not, they do more than shift where value accumulates – they increase the total value available to the market. This expansion can occur in different ways, including higher production or throughput of services and improved accuracy, resource allocation and workforce efficiencies, all of which increase the total value the market can realize.

In this shift, companies that build and supply convergent technologies tend to broaden their participation in the market, while the organizations that adopt and apply these technologies gain access to capabilities that continuously improve, strengthening their position. This pattern often emerges as vertically integrated offerings mature and begin to rely on partnerships. For example, in e-commerce, the shift to external logistics providers allowed sellers to access a wider customer base, while delivery companies captured a share of the value created.

One consequence of this shift is that value moves from owning and managing all capabilities to orchestrating capabilities between the organizations supplying these technologies and the organizations adopting them. As a result, scale and long-term advantage depend less on control and more on how effectively an organization connects technologies, operations and partners into a dependable system. Section 3 examines the theme of orchestration in more detail.

Considerations

- Does the value created by the technology clearly outweigh the cost and effort required to implement it?

- What does partnering for this capability allow the organization to achieve that it could not do as effectively on its own?

2.2 Signals from the field

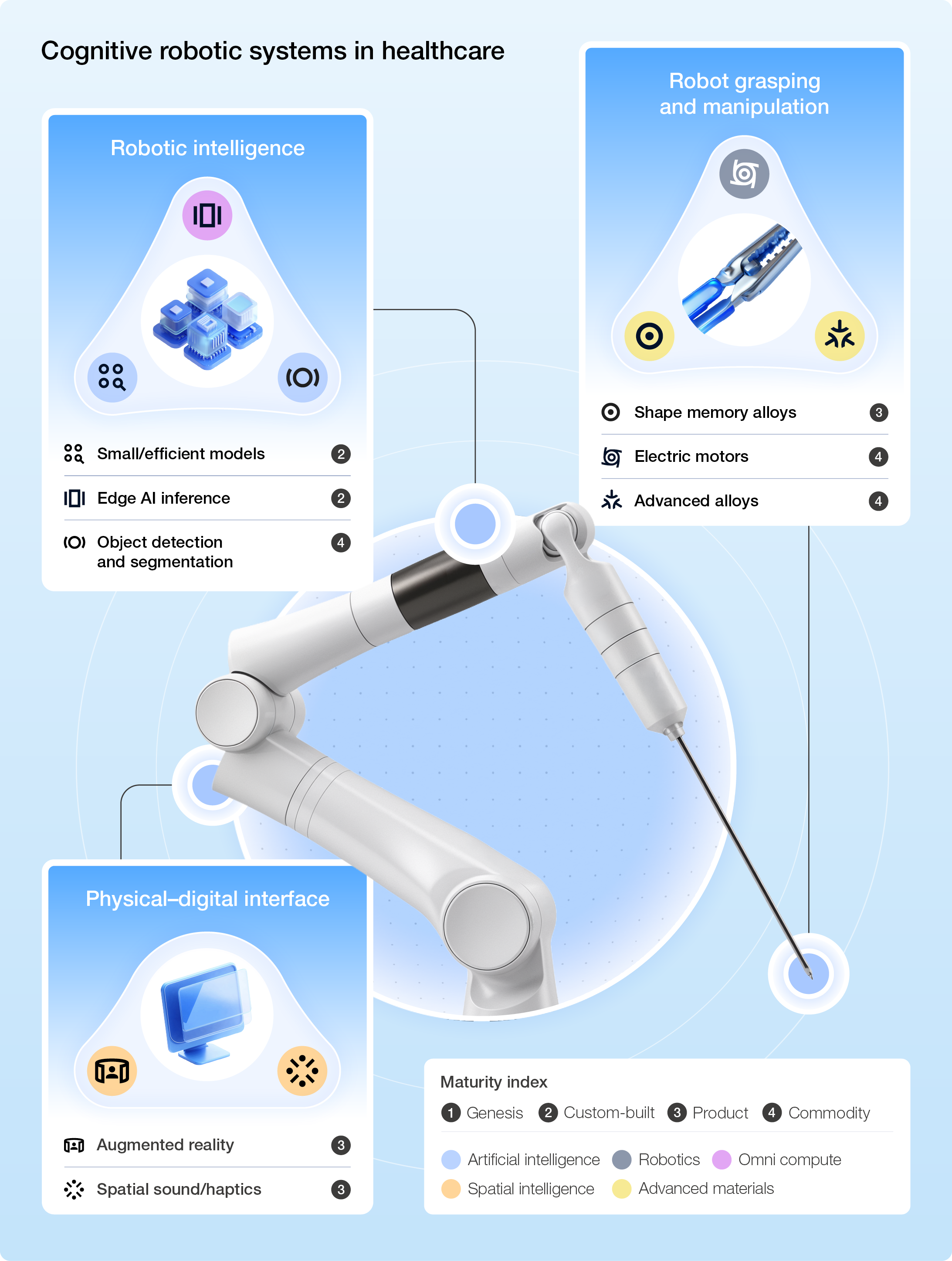

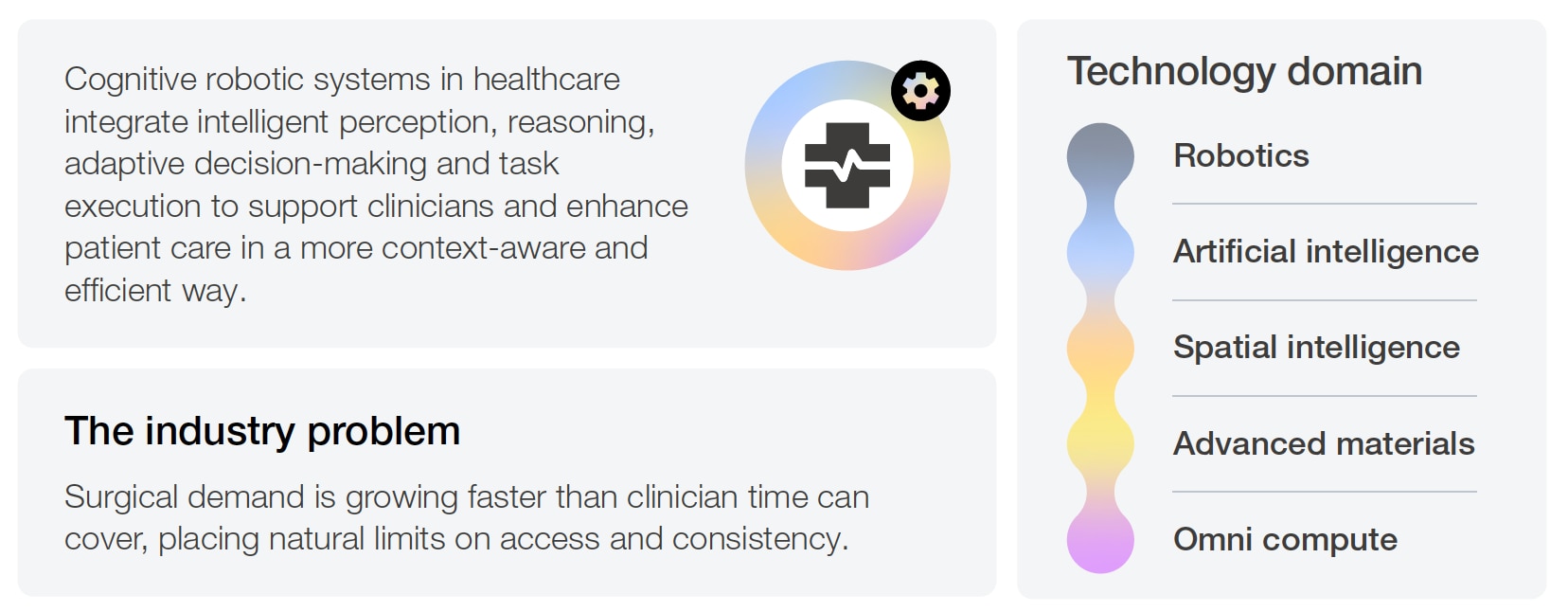

Cognitive robotic systems in healthcare

Why combination is possible today

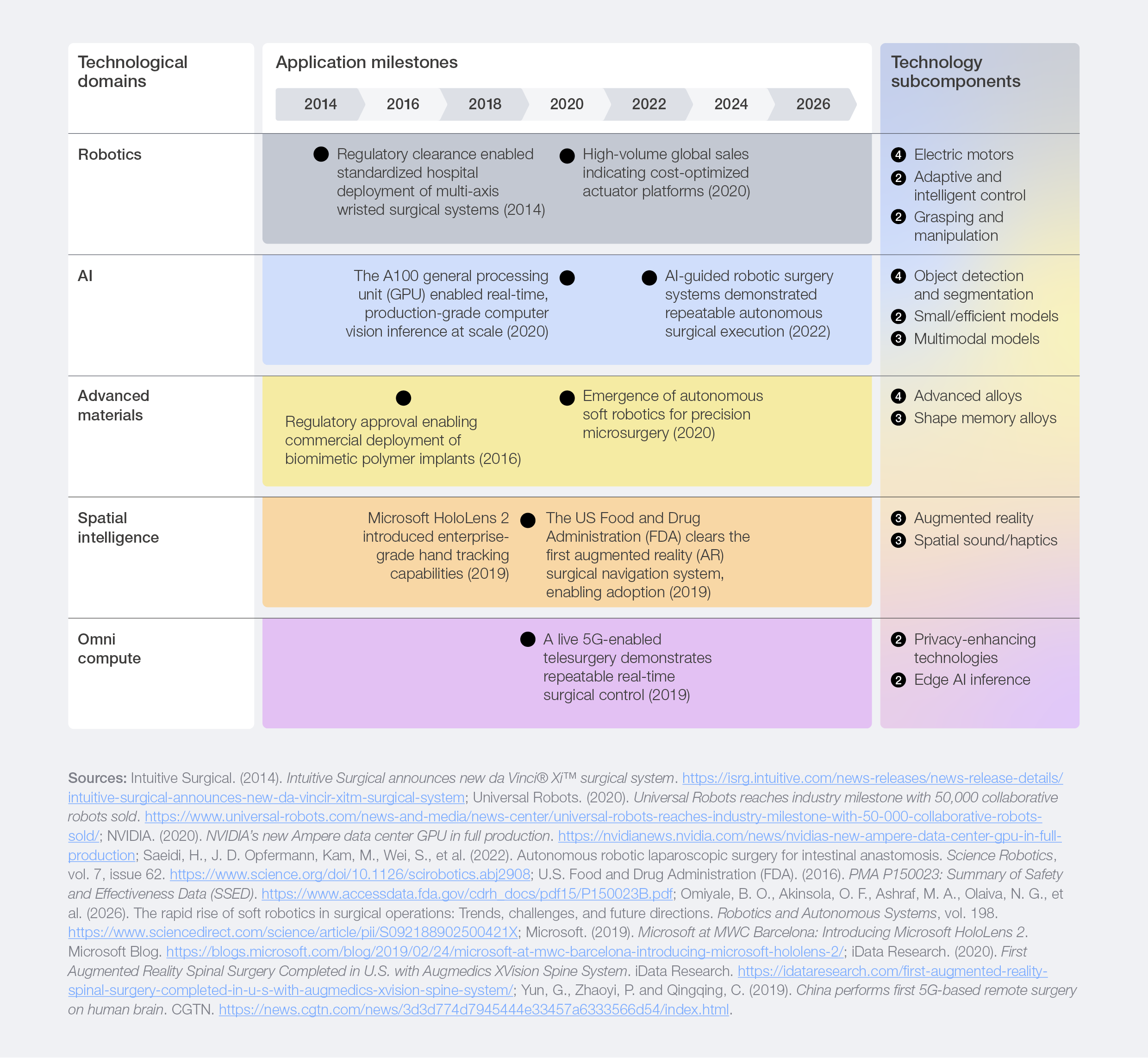

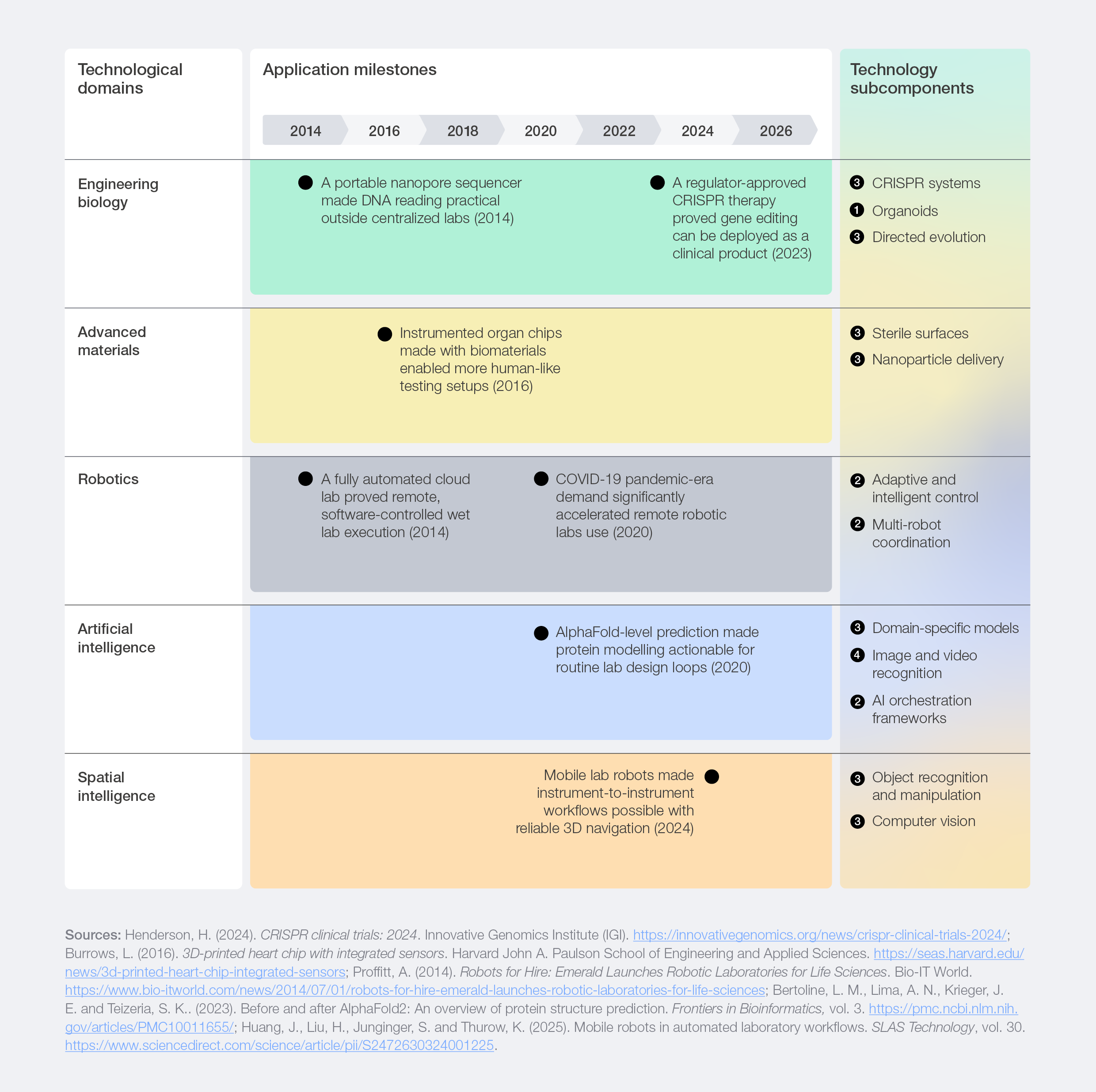

Historically, surgical robots were costly and bulky, used mostly in specialist centres, while overall adoption stayed under 20% of procedures in the US,2 with even lower rates globally. In recent years, progress that once unfolded in separate technological domains has increasingly begun to converge, as shown in Figure 4.

Robots became more affordable and capable while new materials enabled smaller and safer instruments, allowing precise manipulation even in confined spaces. At the same time, artificial intelligence (AI) and spatial computing provided a richer understanding of the operative field by identifying anatomy, tracking instruments, flagging anomalies and revealing subsurface structures before the first incision. These improvements were supported by expanding omni compute capacity that made real-time guidance – and even telesurgery – technically feasible where infrastructure allowed. Taken together, the combined progress across these domains signalled a shift from isolated innovations towards integrated and intelligent surgical solutions.

Figure 4: Subcomponents propelling cognitive robotic systems to their current maturity

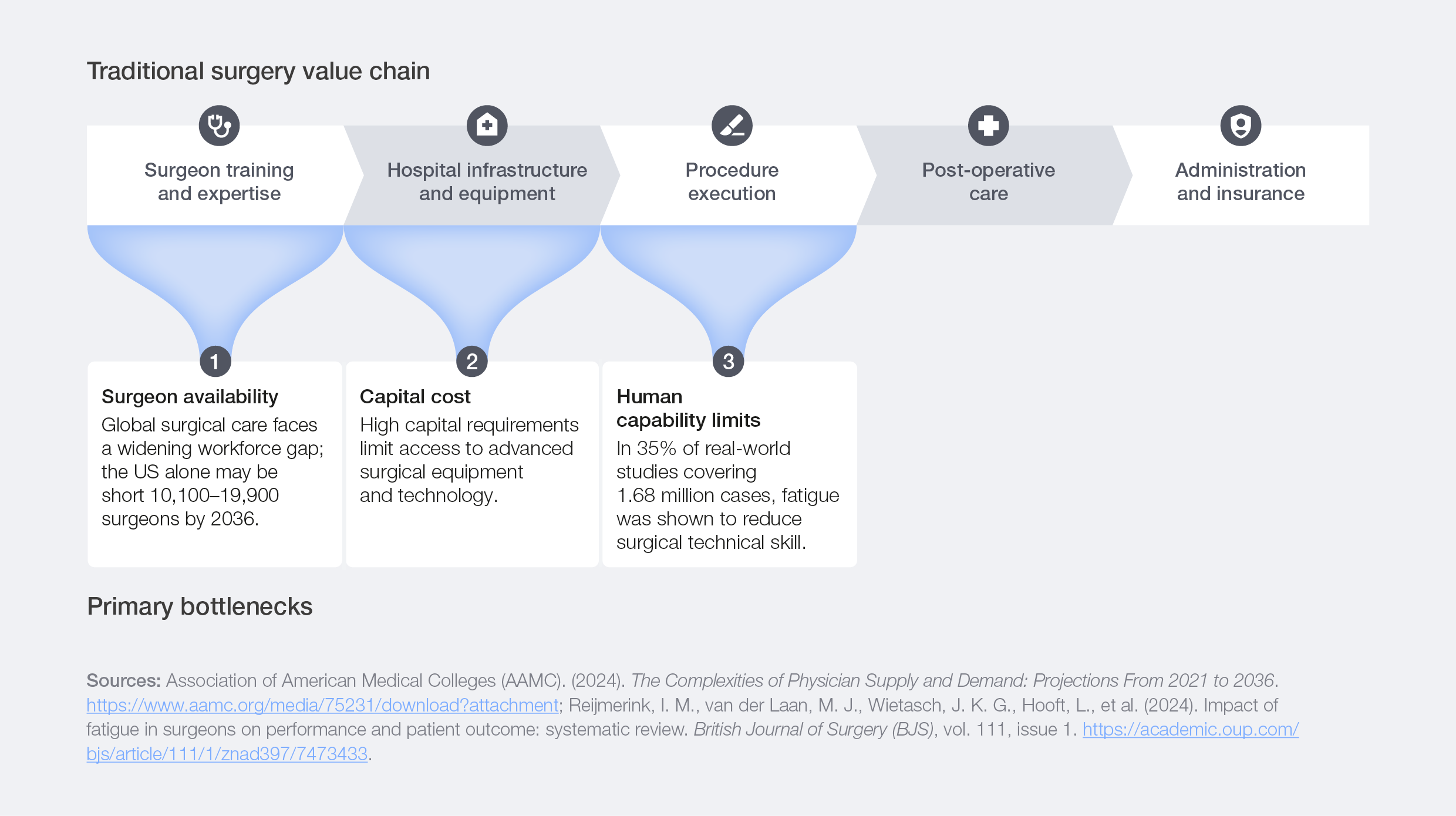

Shifting bottlenecks

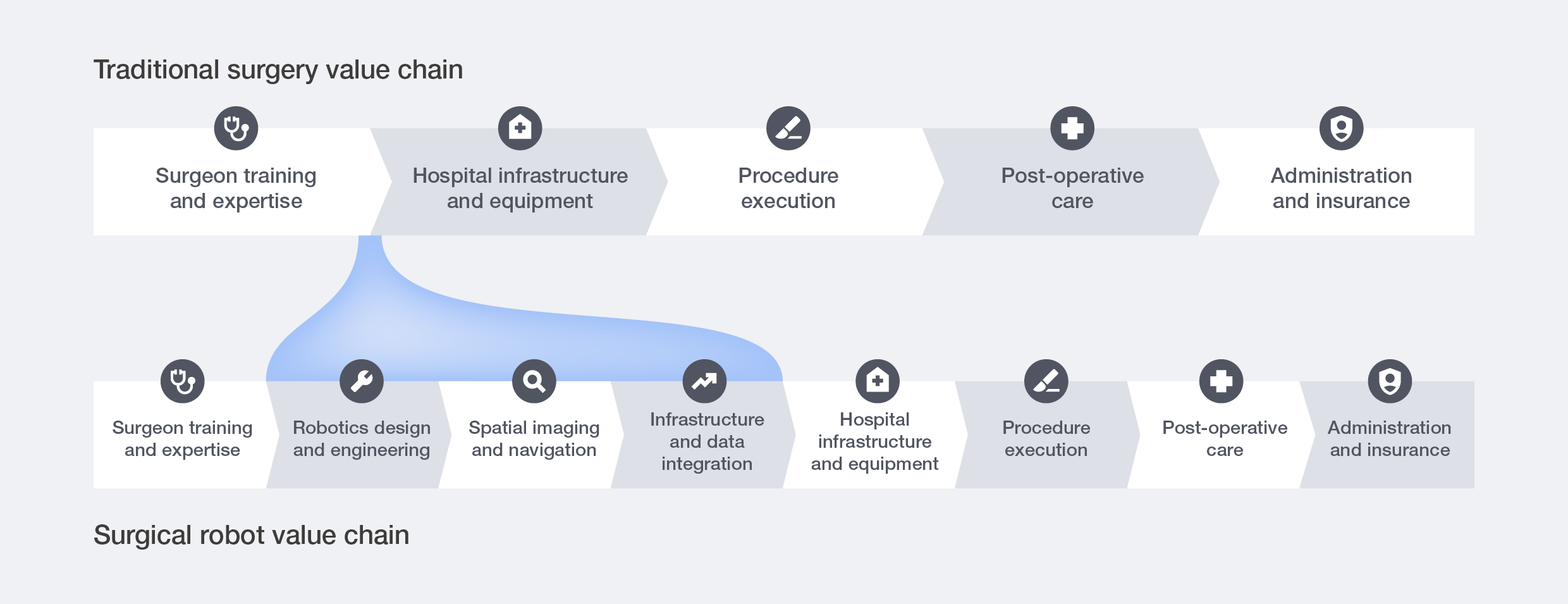

The traditional surgery value chain is organized around surgeon time as the primary constraint. Surgeons’ training and skill account for a disproportionate share of value creation: high-volume surgeons deliver significantly better outcomes in 74% of studies, and specialist surgeons outperform generalists in 91% of cases.3 However, skilled surgeons are rare, their training requires a decade or more, and their working capacity is limited by human endurance. Hospital design, staffing models, scheduling systems and reimbursement structures are all organized around these scarcities.

Figure 5: Traditional surgery value chain constraints

The development and adoption of surgical robots aim to overcome these bottlenecks:

- Surgeon availability: Robots support teleoperation and automation, allowing surgeons to work across multiple locations, spend less time physically present and complete procedures more efficiently.

- Capital cost: Many surgical robots adopt a robot-as-a-service (RaaS) business model, eliminating the need for large upfront investments in advanced systems. High robot use enables high-volume, high-precision procedures, reducing long-term costs per surgery.

- Human error limits: Robotic systems support precision, stability and endurance, performing tasks that extend beyond human physical limits.

Surgical robotics’ performance increasingly hinges on resolving technology access barriers, building trust in robotic systems and achieving seamless workflow integration.

Figure 6: Adoption of surgical robotics reshapes the traditional surgery value chain

Integrating technologies

Case study 1: CMR Surgical designed its surgical robots to fit within existing processes

The problem: Robotic systems are traditionally difficult to integrate into highly customized hospital settings due to multiple surgical specialities and limited time for ancillary training and activities.

The action: CMR Surgical designed its Versius system to work within existing operating rooms and established surgical routines. The system’s modular design, with individual arm units that can be positioned flexibly, adapts to different room layouts rather than requiring facilities to reconfigure around the robot.

The outcome: Surgeons keep their usual team setup, move easily between robotic and traditional procedures and communicate face-to-face during operations. This avoids the need for surgeon retraining and significant behavioural change, while preserving workflow continuity. CMR Surgical’s experience illustrates how a combinatorial system gains traction not by forcing change, but by strengthening the ways people already work.

Expanding value

The introduction of surgical robots helps hospitals perform more procedures and achieve higher accuracy. Surgical robots do not reduce the value surgeons create; instead, they use surgeons’ time more effectively by allowing them to focus on decisions that require human judgement, supporting them for highly dexterous tasks and, in some cases, allowing them to carry out procedures remotely, dramatically lowering the potential travel time required. Hospitals will continue to face a strategic choice about whether to develop these capabilities internally or to coordinate parts of the new value chain with external partners and suppliers.

Digital twin ecosystems in advanced manufacturing

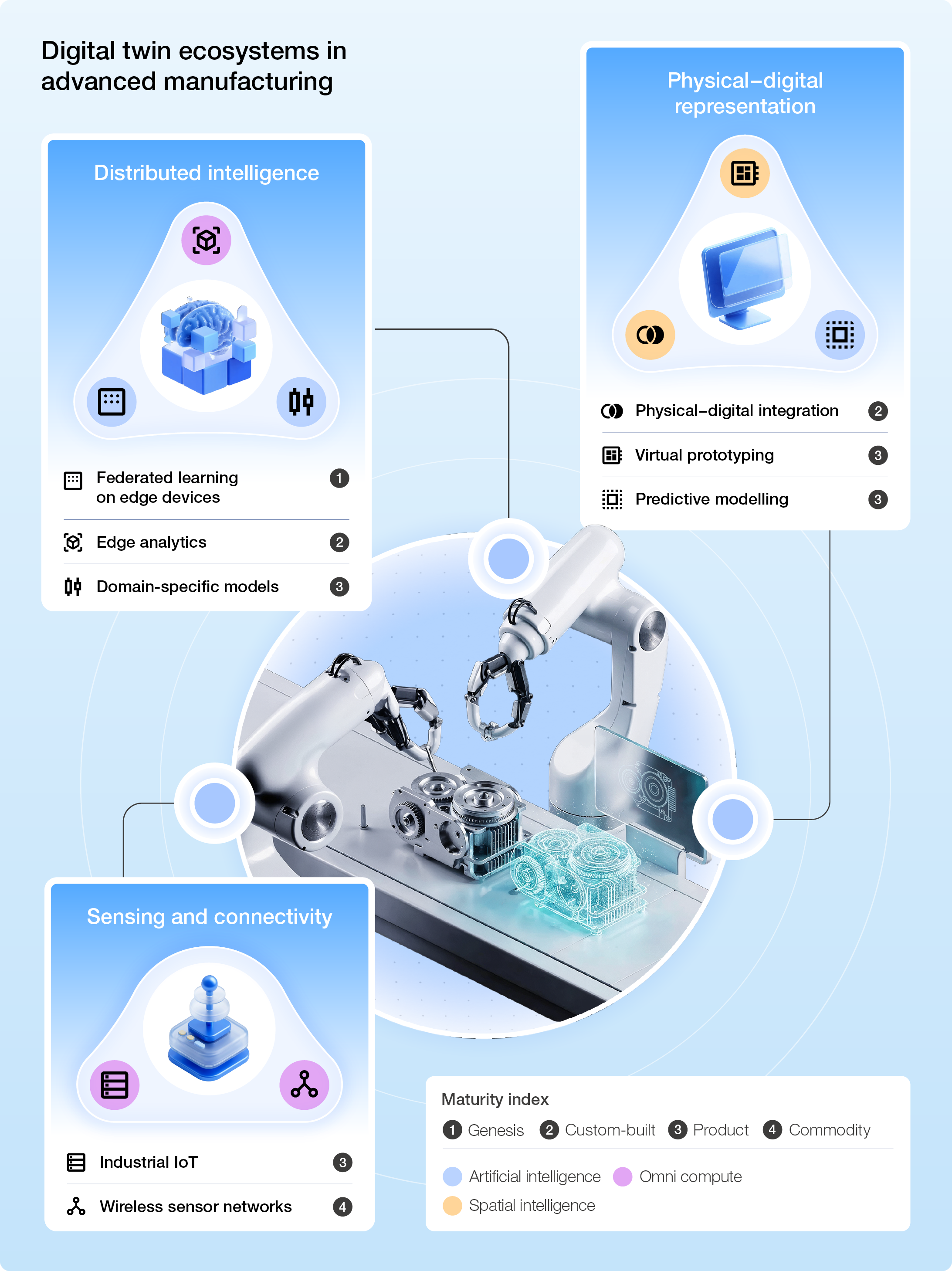

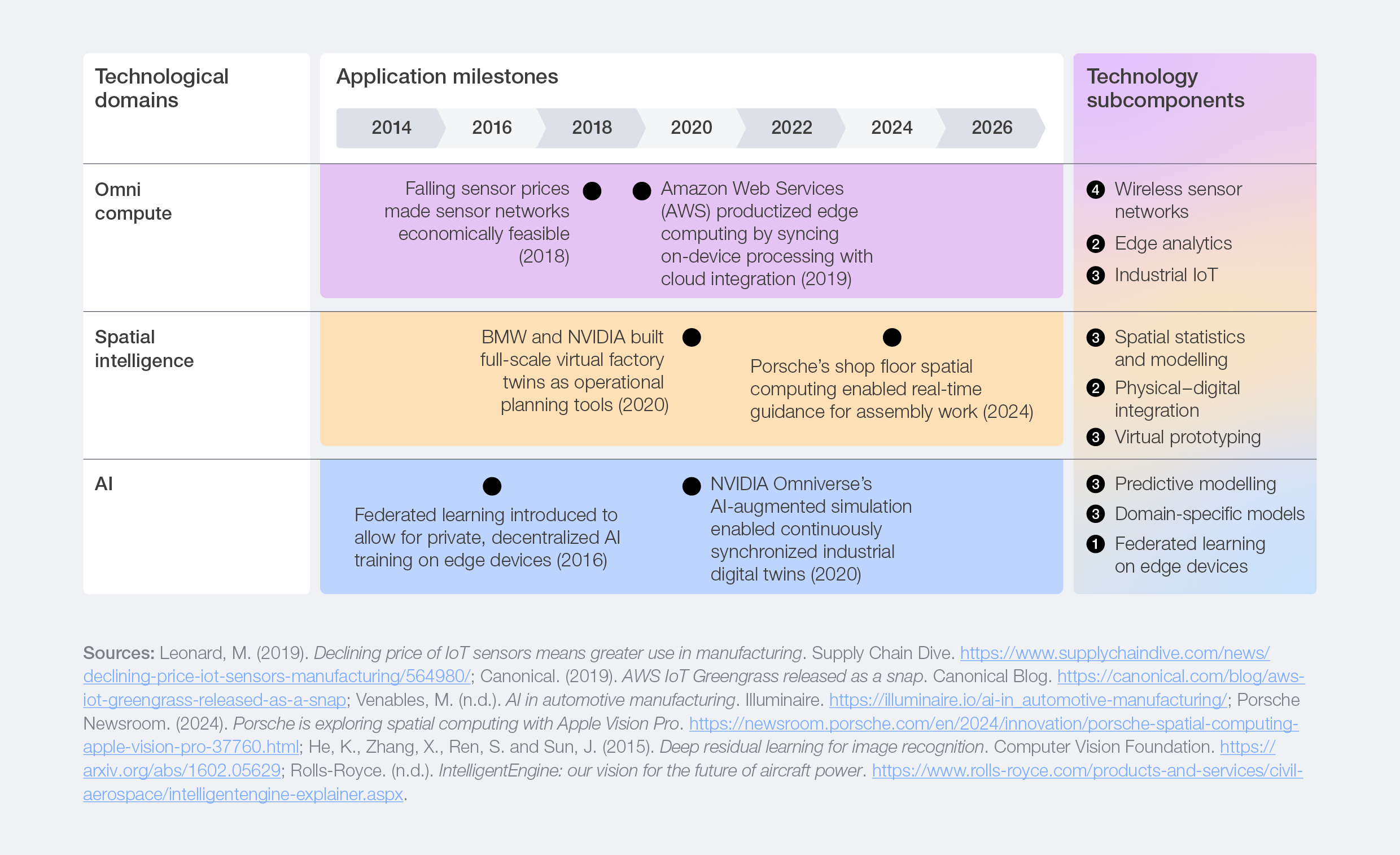

Why combination is possible today

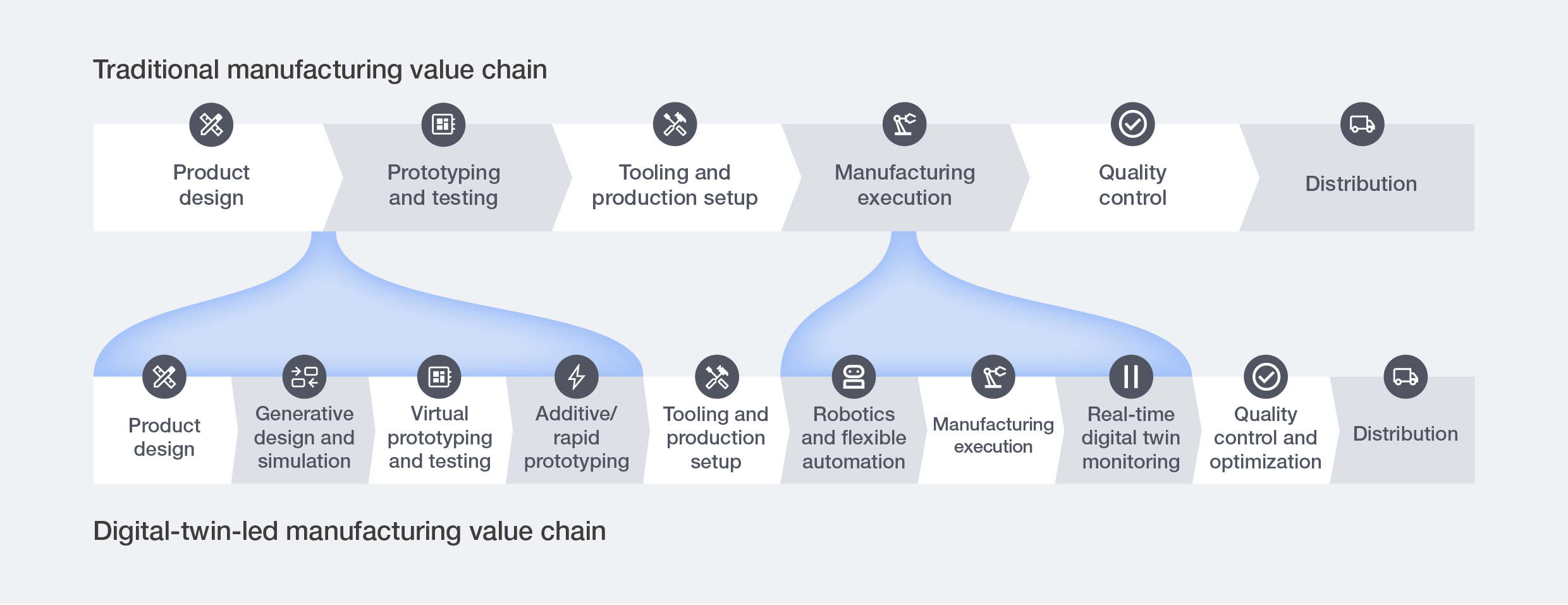

Earlier digital twins were limited by fragmented data, immature spatial models and experimental AI, limiting them to basic visualization and planning rather than cohesive operational systems. Today, multiple capabilities are advancing in parallel and beginning to converge, as shown in Figure 7.

Advances in omni compute deliver dense, real‑time production data, giving twins a live view of what is happening on the floor. Improvements in spatial intelligence then layer on rich, interactive context, making these models intuitive for operators, engineers and planners to engage with directly. At the same time, rapid advances in AI have unlocked the ability to simulate thousands of scenarios, learn from real‑world conditions and automatically optimize design and process decisions. Together, these advancements have transformed digital twins from passive models into fluid, real‑time systems that actively guide and improve manufacturing operations.

Figure 7: Subcomponents propelling digital twin ecosystems to their current maturity

Shifting bottlenecks

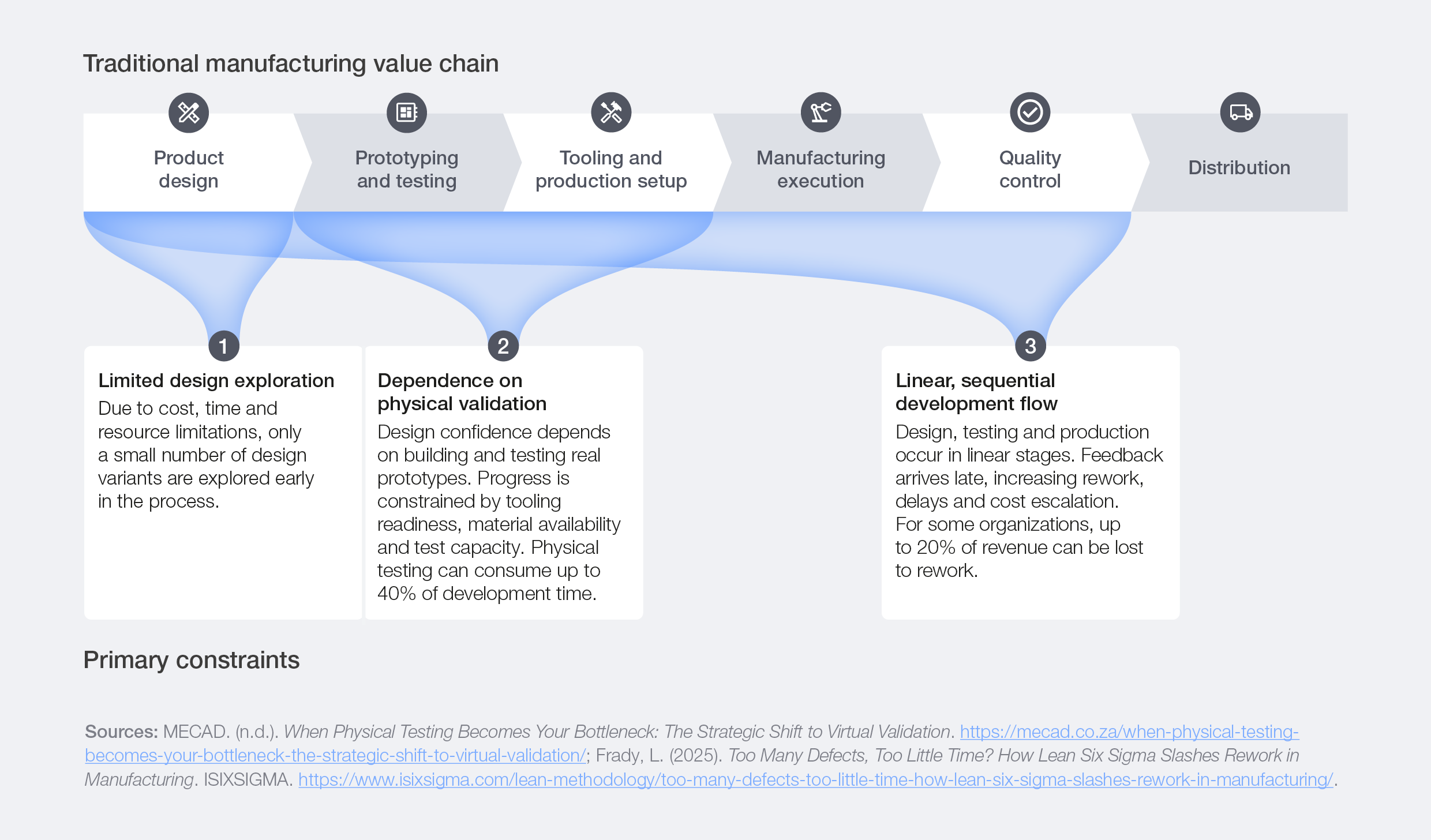

In manufacturing, value creation has long been constrained by the cost and speed of physical iteration. Product design, testing and production have historically progressed through linear, hardware-intensive stages, resulting in expensive prototyping, lengthy testing cycles and repeated rework.

Figure 8: Traditional manufacturing value chain constraints

The development and adoption of digital twin ecosystems aim to overcome these constraints:

- Limited design exploration: Generative design and high‑fidelity simulation enable thousands of digital variants before hardware is involved, expanding the design space without proportional cost or time.

- Dependence on physical validation: Multi‑physics models, continuously calibrated with sensor and operational data, build confidence early. Physical tests shift from discovery to targeted confirmation.

- Linear, sequential development flow: Digital twins connect design, testing and production through shared models and real-time data. Linear handoffs are replaced by iterative, parallel workflows that shorten cycles and reduce late-stage risk.
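The shift from discovery to targeted confirmation can be sketched with a deliberately small example. It assumes a one-parameter thermal model (temperature = ambient + k × load) that is fitted to sensor readings by least squares, after which a single physical test only checks the prediction at a new operating point. The model form, the function names and all numbers are hypothetical; real multi-physics twins are far richer.

```python
def calibrate_gain(loads, measured, ambient):
    """Least-squares fit of k in the assumed model: temp = ambient + k * load."""
    numerator = sum(l * (m - ambient) for l, m in zip(loads, measured))
    denominator = sum(l * l for l in loads)
    if denominator == 0:
        raise ValueError("need at least one non-zero load to calibrate")
    return numerator / denominator


def predict(load, ambient, k):
    """Predicted temperature at a given load under the calibrated model."""
    return ambient + k * load


def confirms(predicted, observed, tolerance):
    """Targeted confirmation: does one physical test agree with the twin?"""
    return abs(predicted - observed) <= tolerance


# Hypothetical sensor readings from operation (load in kW, temperature in C).
loads = [10.0, 20.0, 30.0]
measured = [25.0, 30.0, 35.0]
ambient = 20.0

k = calibrate_gain(loads, measured, ambient)

# The twin predicts an untested operating point; one test confirms it.
predicted = predict(40.0, ambient, k)
observed_in_test = 40.8  # hypothetical bench measurement
print("calibrated k:", k)
print("prediction confirmed:", confirms(predicted, observed_in_test, tolerance=1.0))
```

The design point is that physical testing is reserved for checking a prediction the model has already made, rather than for exploring the design space.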

Figure 9: Adoption of digital twins reshapes the traditional manufacturing value chain

Digital-twin-led manufacturing performance largely depends on the fidelity of the simulation model, tight digital‑physical integration and the organization’s capacity to adapt talent.

Integrating technologies

Case study 2: Siemens orchestrated scalable industrial ecosystems for a connected digital twin future

The problem: Digital twins require multiple industrial tools to work together, but most industrial environments use siloed software and custom point-to-point integrations. The result is inconsistent data, redundant workflows and digital twins that are costly and inefficient to update or scale.

The action: Siemens made interoperability the foundation of its digital twin execution. Its digital business platform Xcelerator offers a curated portfolio of modularized interoperable software and hardware with standardized interfaces, certification frameworks and streamlined integration pathways. This ensures that shared data structures, spanning computer-aided design (CAD) models, automation logic, equipment telemetry and life cycle management tools, are already embedded across the Siemens ecosystem. As a result, different industrial software packages, from simulation engines to manufacturing execution systems (MESs) and product life cycle management (PLM) systems, can plug into the same environment without rebuilding integrations, and updates in one tool flow reliably across the entire digital twin.

The outcome: Digital twins become tightly integrated, continuously synchronized systems. Data remains consistent across the life cycle, industrial software works together without friction and integration costs drop. Customers and partners can customize and scale digital twin solutions faster and with greater reliability, unlocking higher operational value across the ecosystem. For example, with its shop‑floor material‑flow digital twin, Siemens simulated the movement of automated guided vehicles to optimize routes and cycle times, reducing material circulation by about 40% and boosting overall efficiency.

Case study 3: Gecko Robotics helps manufacturers generate high-quality physical data that strengthens simulation model fidelity

The problem: Manufacturers increasingly rely on digital twin ecosystems to accelerate design and testing, but often lack the physical data needed to calibrate and validate simulation models.

The action: Manufacturers partner with physical data providers such as Gecko Robotics, which deploys robotic inspection systems equipped with advanced sensors and edge AI. Purpose-built for extreme industrial environments, these systems collect high-fidelity data on corrosion or structural integrity and feed it directly into clients’ digital twin systems. Because Gecko operates across a wide range of manufacturing clients, it benefits from scale and learning effects that improve data quality and consistency. Within manufacturers’ digital twin workflows, this data enhances simulation accuracy and model reliability.

The outcome: Manufacturers remove a key challenge around digital twin performance, improving asset management, predictive maintenance and operational optimization without duplicating capital investment or building specialized capabilities.

Expanding value

Digital twins add new layers of insight that help manufacturers use equipment more effectively and let skilled teams focus on high-priority tasks. They enable continuous monitoring, simulation and adjustment of production systems, increasing throughput and reducing downtime. This increases overall revenue while minimizing unexpected equipment costs. Manufacturers will continue to decide whether to develop digital twins internally or work with partners.

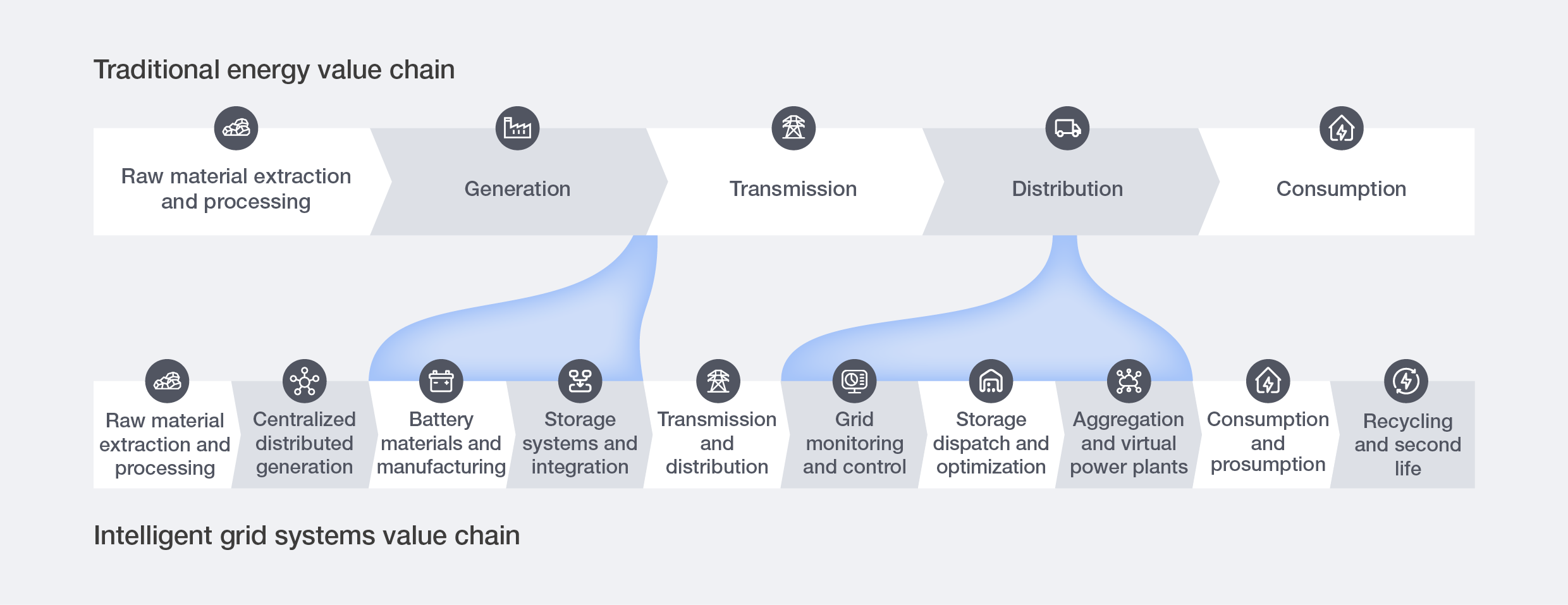

Intelligent grid systems in energy

Why combination is possible today

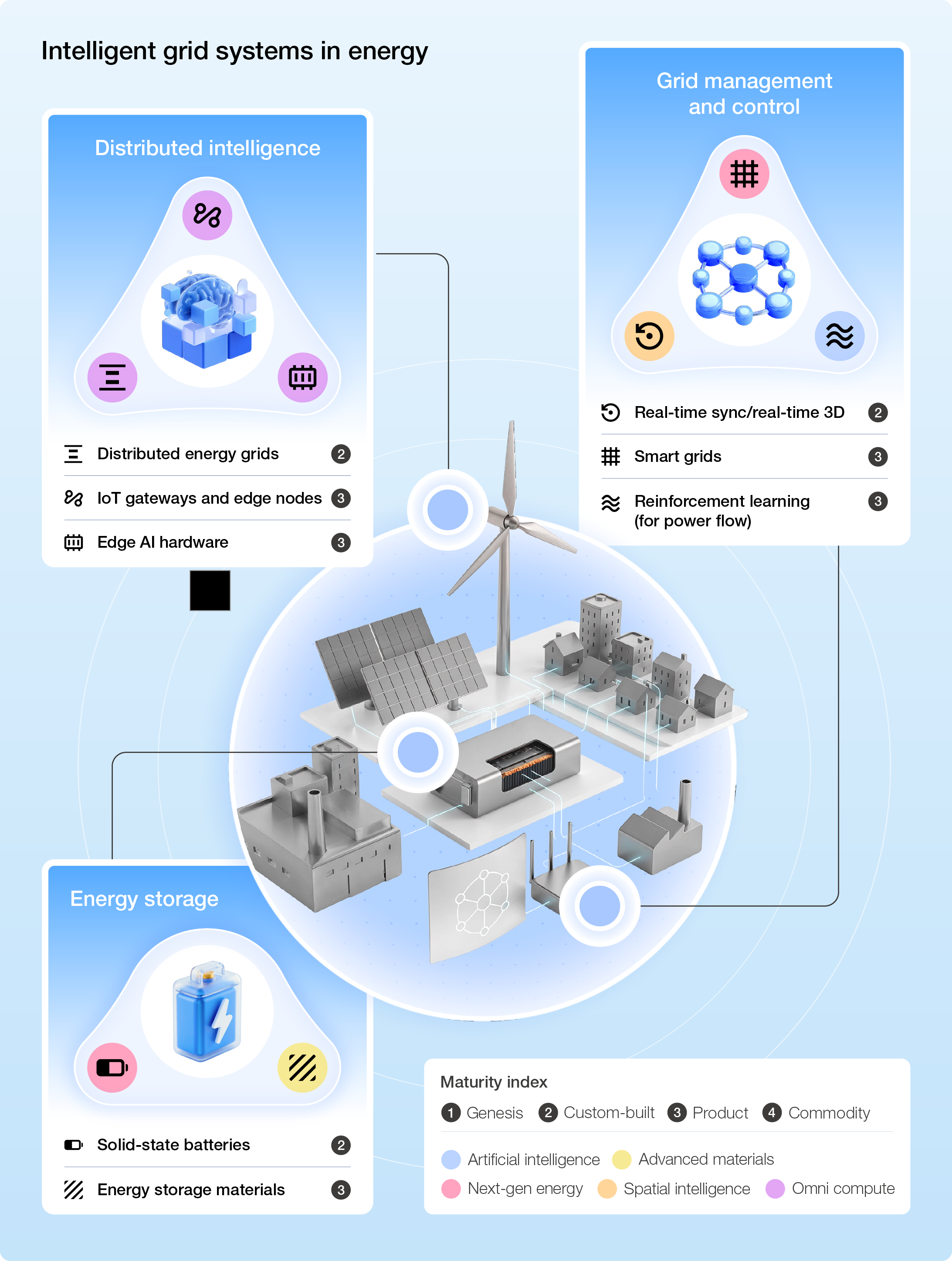

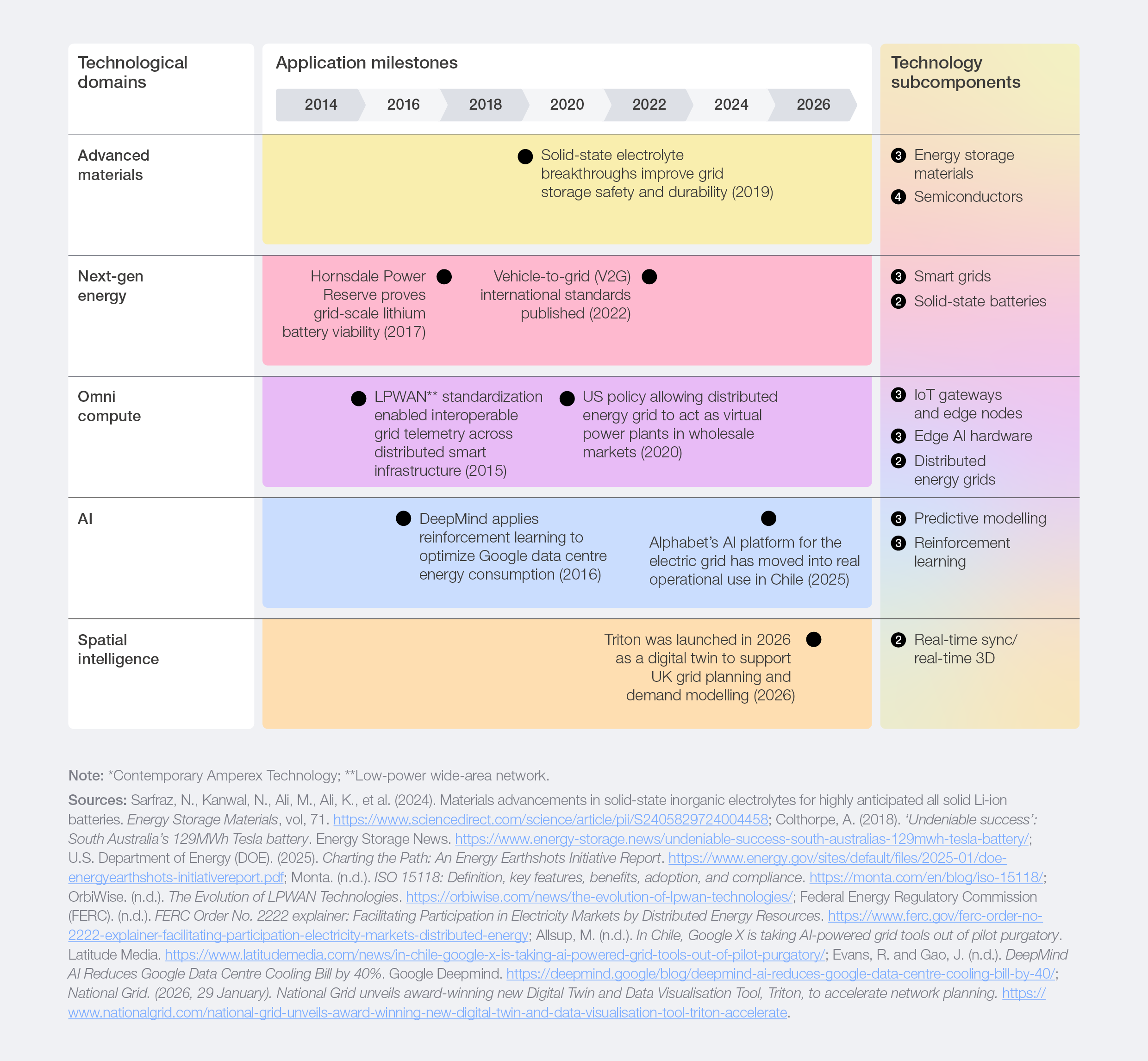

For years, batteries were too costly for commercial use, renewable generation lacked scale and grid infrastructure was built for centralized control. The landscape has shifted as a stack of technologies has matured together, as shown in Figure 10.

Today, developments in advanced materials and next‑gen energy have made grid‑scale storage both economically viable and operationally capable, allowing assets to absorb and inject power with stability and flexibility. Progress in omni compute and AI now gives operators real‑time visibility into grid conditions and the ability to optimize charge, discharge and power flows across multiple value streams. Spatial intelligence enables the simulation of infrastructure impacts and the strategic placement of assets to deliver the greatest system benefit. Together, these advances are shifting the grid from a reactive, centralized system into an adaptive, real‑time network that continuously balances energy across distributed resources.

Figure 10: Subcomponents propelling intelligent grid systems to their current maturity

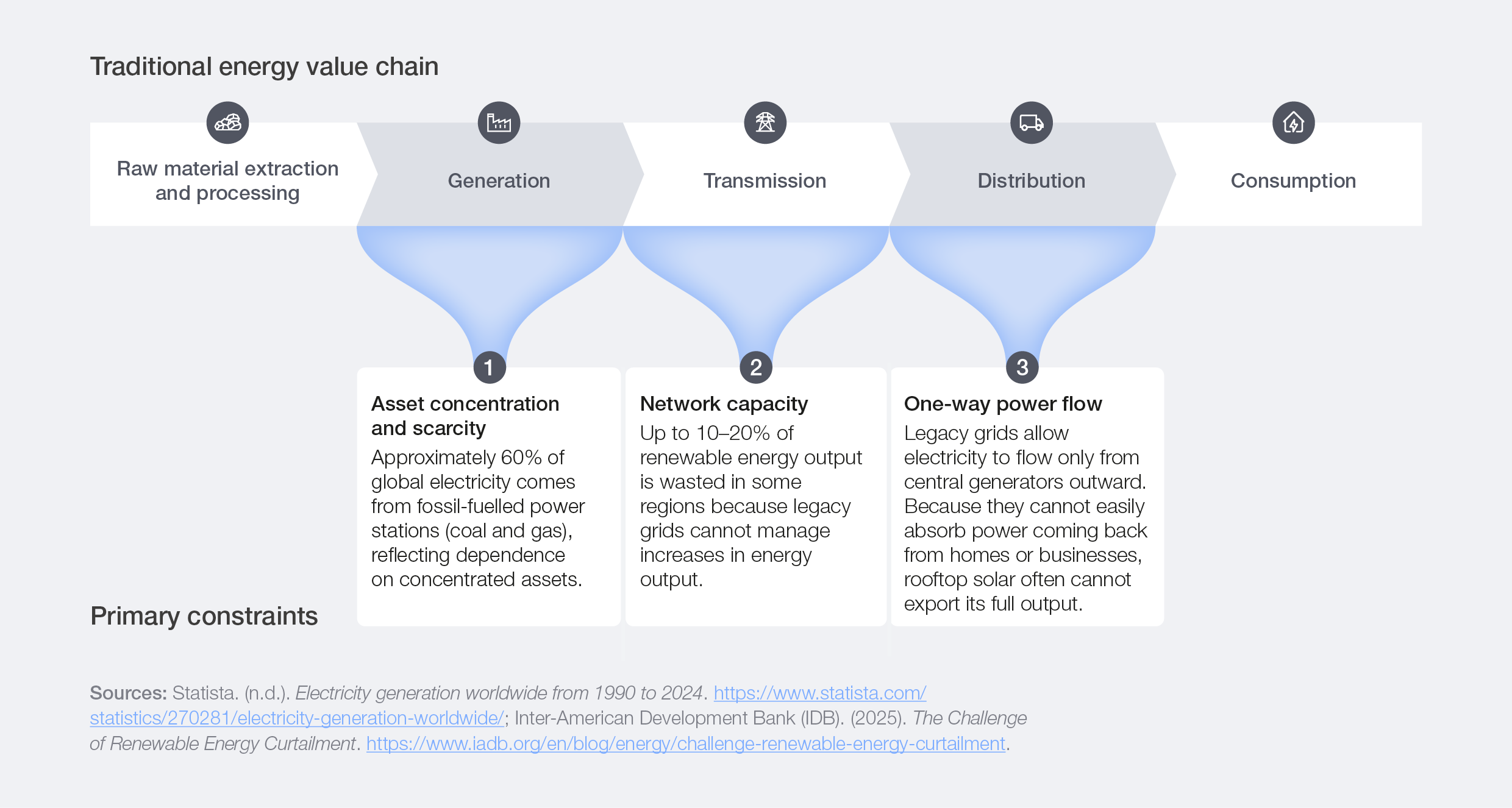

Shifting bottlenecks

In energy systems, value creation has long been constrained by centralized generation and linear power flows. Grid design and operating models evolved around large, scarce assets and predictable, one-directional demand.

Figure 11: Traditional energy value chain constraints

The development and adoption of intelligent grid systems aim to overcome these constraints:

- Asset concentration and scarcity: Advanced batteries, distributed generation and power electronics decentralize supply, breaking the reliance on a small number of centralized assets.

- Network capacity: IoT sensing, AI optimization and digital twins provide real-time visibility into grid conditions, enabling adaptive load balancing, predictive congestion management and more efficient use of existing infrastructure.

- One-way power flow: Intelligent control systems enable bidirectional power flows, integrating storage, electric vehicles (EVs), microgrids and flexible demand. Consumers increasingly become participants in grid balancing, rather than passive endpoints.

Intelligent grid performance increasingly depends on the duration of energy storage, the coordination of distributed assets across households, vehicles and storage systems, and the regulatory framework.

Figure 12: Adoption of intelligent grid reshapes the traditional energy value chain

Integrating technologies

Case study 4: Octopus Energy optimizes distributed assets to enhance grid flexibility and reduce costs for consumers

The problem: Grid build-out, which is costly and slow, is becoming a global policy priority as intermittent generation and increasing electrification drive up peak demand on the electricity system. Traditional energy systems, however, were not designed to manage this level of decentralized flexibility.

The action: Octopus Energy, a technology-led energy supplier, operates a software platform that coordinates distributed consumption and generation as a single system. It aggregates household assets such as EVs, home batteries and solar panels, and coordinates when they consume, store or export electricity in a way that reduces costs for consumers and helps to reduce peak demand. This coordination is enabled through a hardware‑agnostic application programming interface (API) architecture. Octopus’s digital platform translates the proprietary languages of different EV chargers, heat pumps and batteries into a unified operational stream. This digital bridge allows Octopus to treat dispersed household equipment as a single virtual power plant. AI‑driven optimization then automatically dispatches power or triggers charging based on price signals, network constraints and customer preferences.
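The general pattern described here, translating vendor-specific device messages into one common format and then dispatching against price, network and customer constraints, can be sketched as follows. This is an illustrative assumption, not Octopus Energy's actual API or optimization logic: the message formats, field names, the `AssetState` schema and the simple greedy rule standing in for AI-driven optimization are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetState:
    """Vendor-neutral view of one household asset (hypothetical schema)."""
    asset_id: str
    flexible_kw: float     # power the asset could shift right now
    customer_allows: bool  # customer preference: may the platform control it?


def from_charger(payload):
    """Translate a made-up EV charger message that reports watts."""
    return AssetState(payload["id"], payload["avail_w"] / 1000.0, payload["opt_in"])


def from_battery(payload):
    """Translate a made-up home battery message that already reports kW."""
    return AssetState(payload["device"], payload["kw"], payload["consent"])


def dispatch(states, price, peak_price, network_limit_kw):
    """Greedy stand-in for the optimizer: shift load only at peak prices,
    only from consenting customers and never beyond the network limit."""
    if price < peak_price:
        return {}
    plan, remaining = {}, network_limit_kw
    for state in states:
        if not state.customer_allows or remaining <= 0:
            continue
        kw = min(state.flexible_kw, remaining)
        plan[state.asset_id] = kw
        remaining -= kw
    return plan


# Two different vendor formats become one unified stream of AssetState objects.
fleet = [
    from_charger({"id": "ev-1", "avail_w": 7000, "opt_in": True}),
    from_battery({"device": "bat-1", "kw": 5.0, "consent": True}),
    from_battery({"device": "bat-2", "kw": 3.0, "consent": False}),
]

plan = dispatch(fleet, price=0.40, peak_price=0.30, network_limit_kw=10.0)
print(plan)
```

Because the dispatch function only sees the common `AssetState` format, supporting a new device type in this sketch means adding one translation function and leaving the dispatch logic unchanged.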

The outcome: Octopus now coordinates over 400,000 controllable assets in UK households, integrating more than 1.2 terawatt-hours (TWh) of distributed solar generation annually into grid and market operations.4 Research from the Centre for Net Zero found that using this technology for EV charging cut consumers’ bills by £343 per year on average and reduced peak household demand by 42%.5 It also shifted all EV charging to off‑peak times, easing pressure on the grid.

Expanding value

Intelligent grid systems help operators use generation, storage and distributed resources more effectively, reducing strain on infrastructure and improving the availability of energy. This enables the system to make better use of the resources already in place and deliver a more reliable supply. For organizations, competitive advantage comes less from owning generation assets or controlling fuel supplies, and more from orchestrating flexibility by coordinating storage, software, markets and distributed resources into systems that balance supply and demand across time and location.

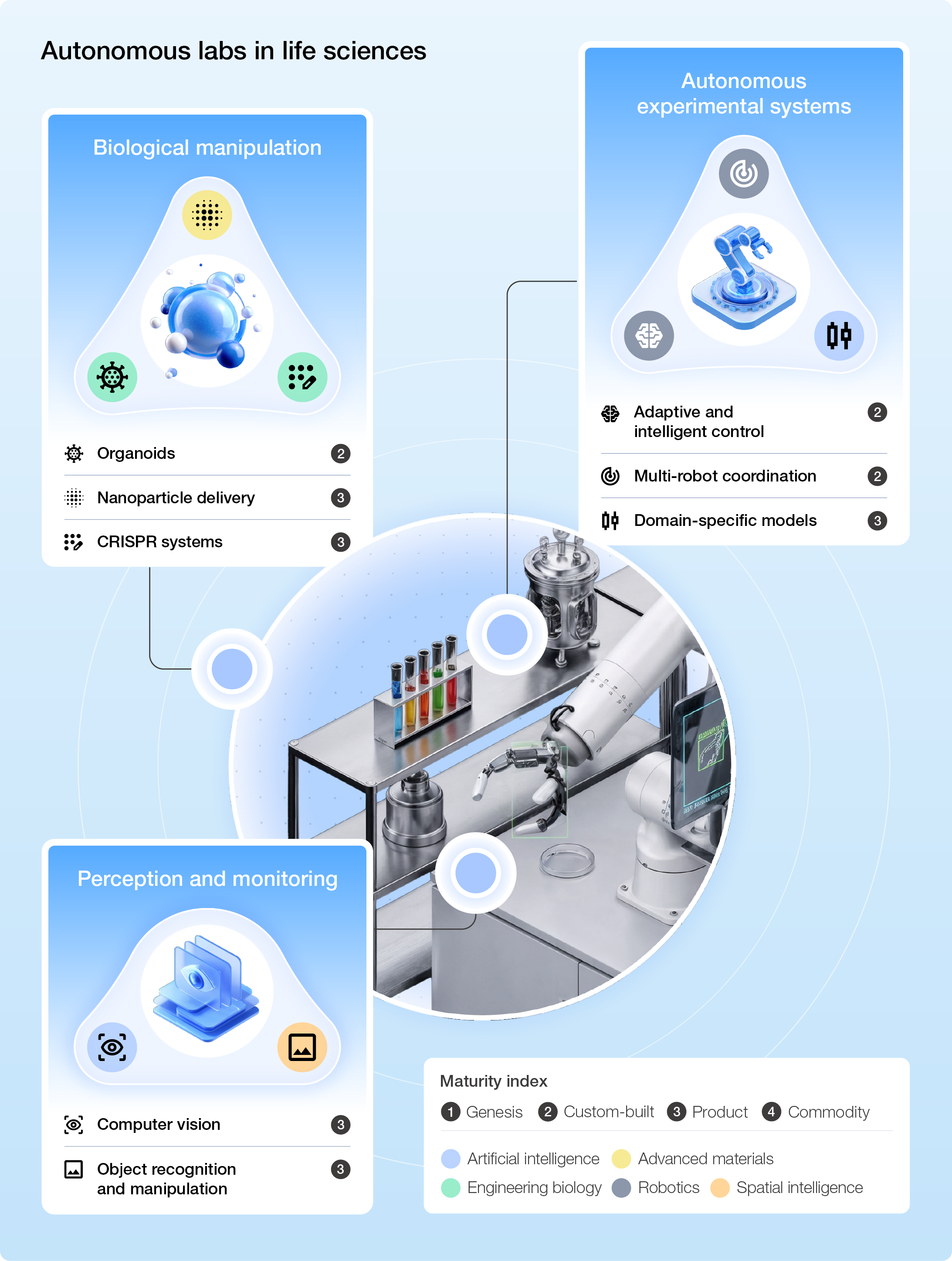

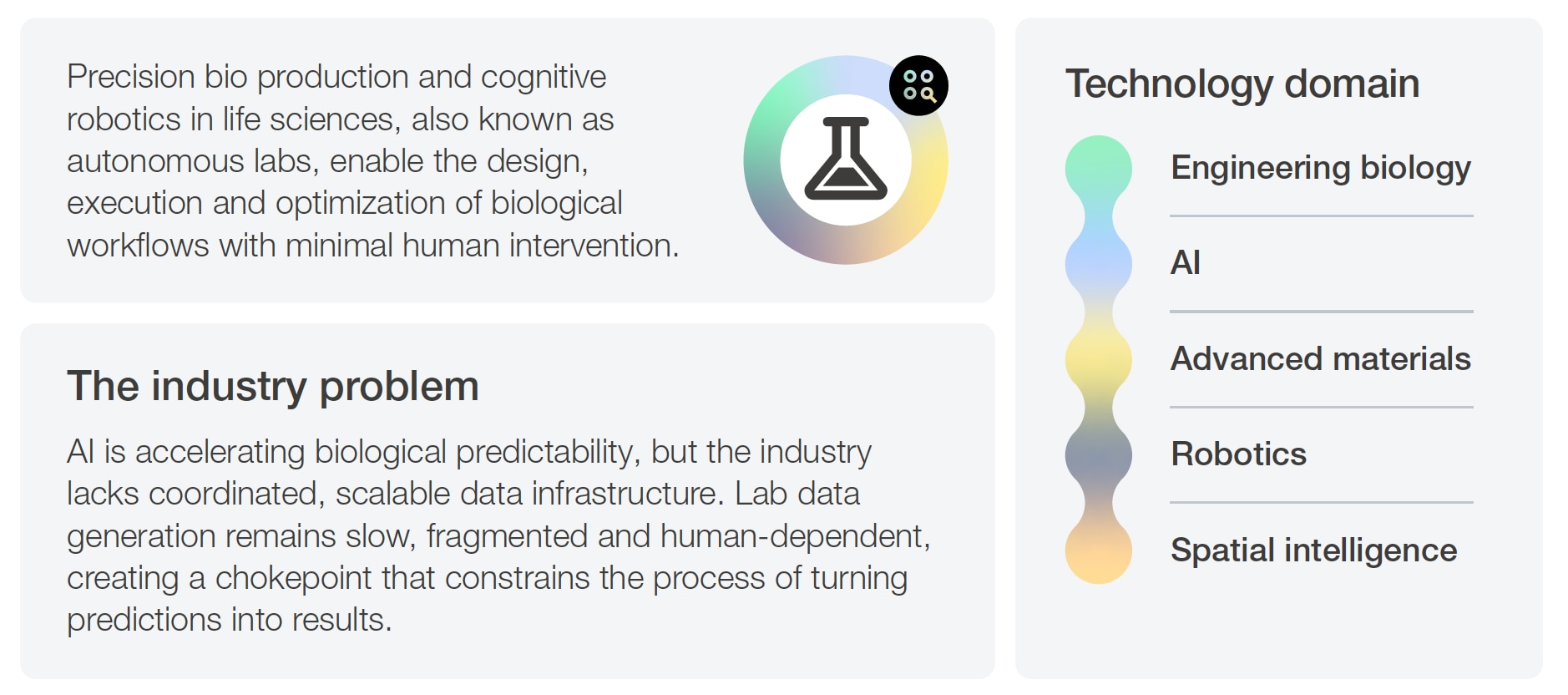

Autonomous labs in life sciences

Why combination is possible today

Autonomous labs were not viable in earlier years because the technical foundations were not yet in place. Biological systems were too variable, so automation was mostly limited to experiments requiring lots of repetition, such as screening. Today, a maturing stack of technologies is combining more broadly across lab environments, as shown in Figure 13.

Dense, real-time data from bio‑engineering processes now feeds continuously into digital models, while new materials enable the simulation of biological conditions and safely support diverse reactions and workflows. AI systems can then generate high-confidence, in‑silico designs before synthesis, which robotics can execute by performing production tasks and adjusting to simulation‑driven changes. Spatial intelligence further enables robots to operate effectively in retrofitted lab spaces rather than purpose‑built facilities.

These advances no longer sit in isolation. They can now be connected into coordinated, end-to-end workflows, enabling both greenfield autonomous labs designed around an AI-first technology stack and brownfield labs retrofitted to support increasingly automated operation. Together, these capabilities are transforming traditional laboratories into integrated, adaptive systems capable of increasingly autonomous operation.

Figure 13: Subcomponents propelling autonomous labs to their current maturity

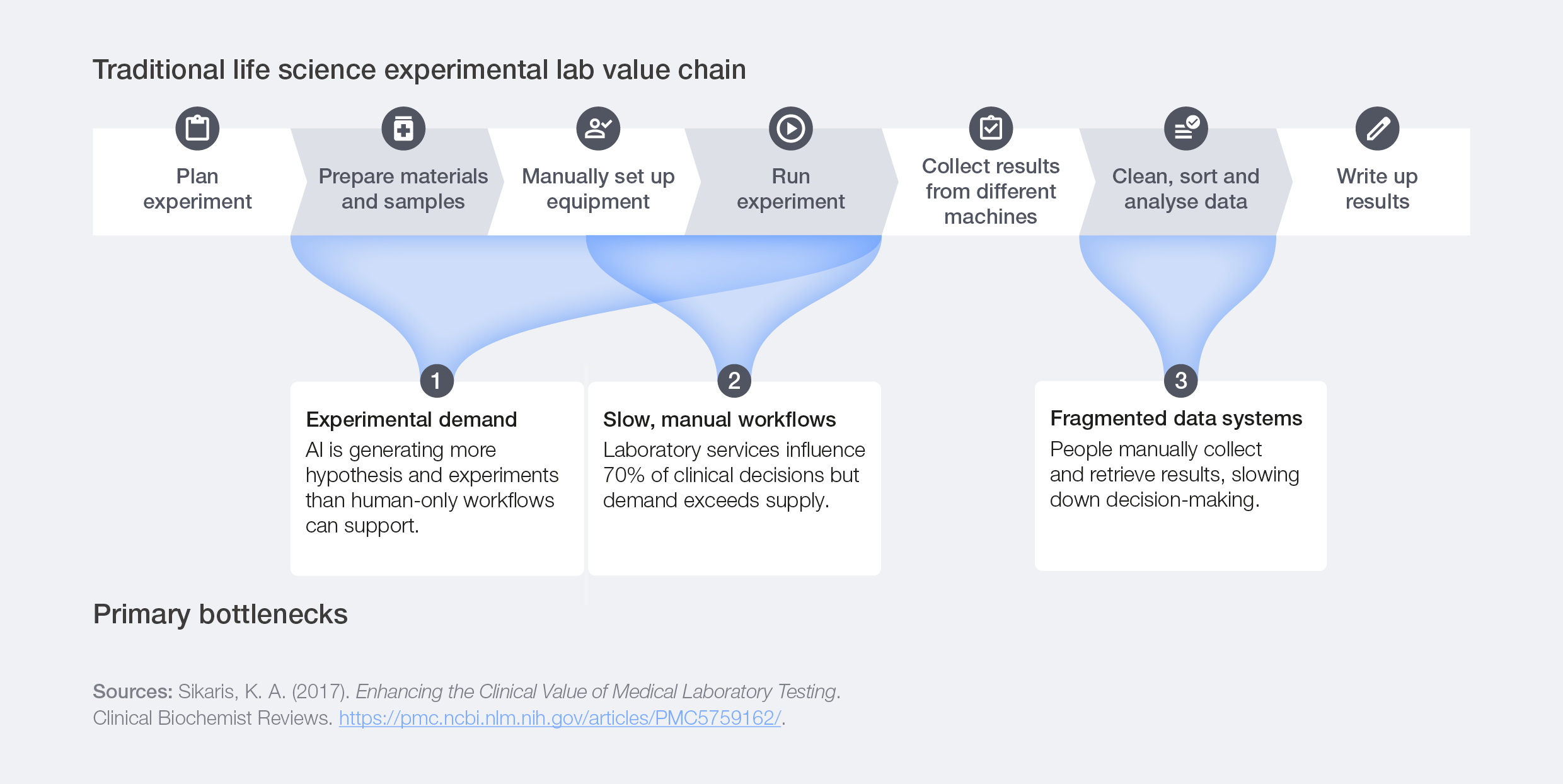

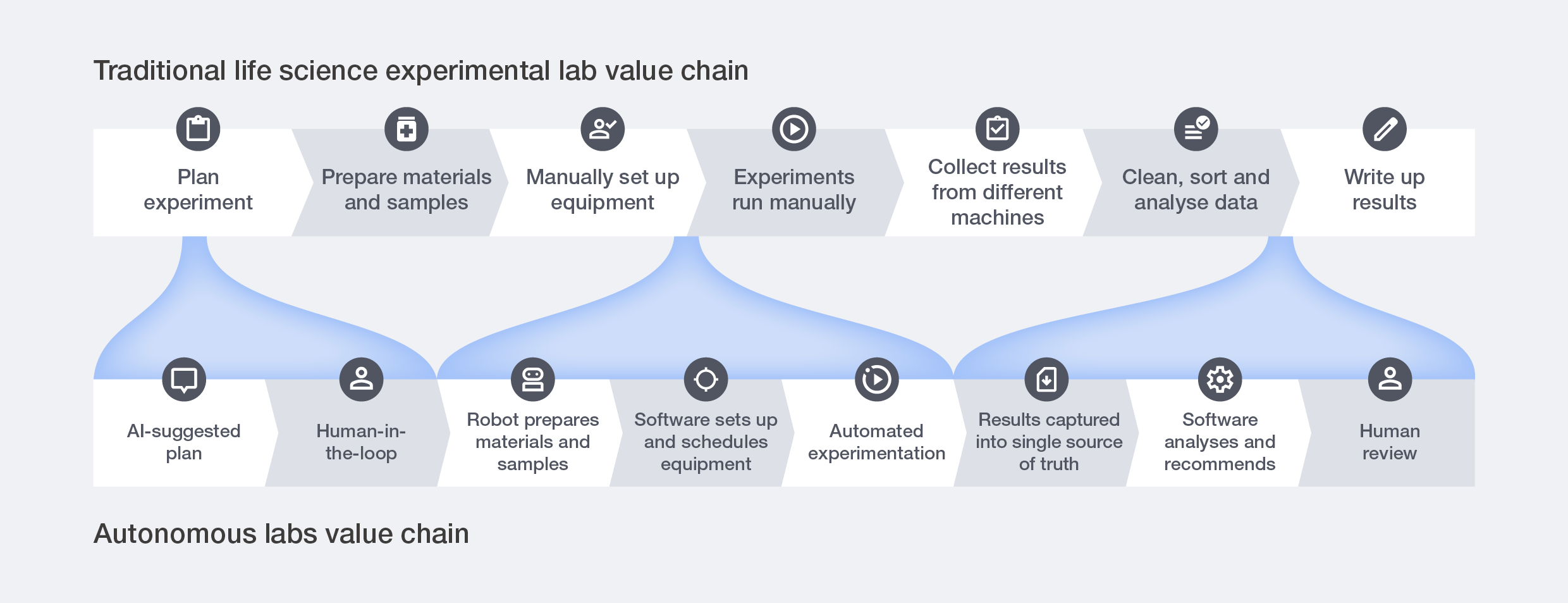

Shifting bottlenecks

Life sciences labs have made significant progress in digitization and automation, yet many workflows still depend on people to bridge gaps between systems. While tasks such as pipetting or plate handling have been automated for decades, these tools typically operate as stand‑alone instruments rather than as part of an integrated workflow. As a result, technicians often set up hardware and run instruments, but many steps remain manual, limiting throughput and contributing to reproducibility issues stemming from human-executed science.

Manual processes also introduce avoidable variation and delays. Even routine tasks can affect analytical accuracy, triggering additional investigations and cost down the line. Fragmented systems make this worse, requiring scientists to manually retrieve, clean and combine data, which further slows decision-making and reduces overall productivity.

Figure 14: Traditional life science experimental lab value chain constraints

The development and adoption of autonomous labs aim to overcome these constraints:

- Experimental demand: Autonomous systems take on routine tasks to expand scientific capacity, enabling smaller teams to direct large automated workflows and focus on higher-value analytical and clinical work.

- Slow, manual workflows: Robots can run many steps automatically and keep working through the day and night. This makes experiments faster and helps labs handle more work without needing more people.

- Fragmented data system: Today, results often come from different machines and must be collected and combined by hand. Autonomous labs automatically bring these data streams together. This reduces errors, speeds up reporting and gives scientists a clearer picture of what’s happening in real time.
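The data consolidation described in the last point can be sketched in a few lines. The example assumes each instrument emits records keyed by a shared `sample_id`; the instrument names, field names and values are hypothetical, and a production system would also need units, timestamps and provenance.

```python
from collections import defaultdict


def merge_streams(*streams):
    """Combine records from several instruments into one record per sample.

    Raises ValueError if two instruments report different values for the
    same field of the same sample, instead of silently overwriting one.
    """
    merged = defaultdict(dict)
    for stream in streams:
        for record in stream:
            sample = record["sample_id"]
            for field, value in record.items():
                if field == "sample_id":
                    continue
                if field in merged[sample] and merged[sample][field] != value:
                    raise ValueError(f"conflicting {field!r} for sample {sample}")
                merged[sample][field] = value
    return dict(merged)


def incomplete(merged, required):
    """List samples that are still missing at least one required field."""
    return sorted(s for s, fields in merged.items() if not required.issubset(fields))


# Hypothetical outputs from two stand-alone instruments.
plate_reader = [
    {"sample_id": "S1", "absorbance": 0.42},
    {"sample_id": "S2", "absorbance": 0.37},
]
hplc = [
    {"sample_id": "S1", "purity_pct": 98.5},
]

merged = merge_streams(plate_reader, hplc)
print(merged["S1"])
print("awaiting data:", incomplete(merged, {"absorbance", "purity_pct"}))
```

Flagging conflicts and incomplete samples automatically is what replaces the manual retrieve, clean and combine step in this sketch.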

Autonomous labs are reshaping traditional drug discovery value chains. Their performance increasingly depends on integrated digital and physical infrastructure, secure data systems and talent prepared to manage end‑to‑end automation.

Figure 15: Adoption of autonomous labs reshapes the traditional life science lab value chain

Integrating technologies

Case study 5: Deep Principle integrates autonomy to scale materials discovery

The problem: Advanced materials R&D is slow, expensive and constrained largely because experiments, data capture and analysis occur in fragmented, manual workflows. This limits the ability of AI models to iterate quickly and learn from experiments.

The action: Deep Principle redesigned how discovery work fits into existing labs, so adoption didn’t require a structural overhaul. They standardized experiment formats, data schemas and review steps so labs could connect their workflows into an automated discovery loop. Their partnership with XtalPi added flexible robotic modules that slot into current bench routines rather than replacing them. They also shifted customer payments away from large, upfront projects towards simple, use-based access and small proof-of-concept sprints to reduce adoption barriers.

The outcome: This integration-first model enabled autonomous experimentation in real lab environments, delivering up to 80% cost reductions in real projects while making it easy for teams to try the system, learn it and expand use naturally over time. By fitting into how labs already work and removing the operational burden of adoption, Deep Principle turned autonomous discovery into a scalable, self-reinforcing part of the R&D workflow.

Case study 6: SandboxAQ works with companies to adopt hybrid data strategies for speed, scale and precision in molecular discovery

The problem: AI‑driven molecular discovery relies on high‑fidelity physical data that captures real molecular behaviour. Producing ground‑truth physics data, such as quantum‑mechanical energies, forces and reaction pathways, requires hours or days of high‑performance computation per simulation, making full internal industrialization impractical.

The action: Leading firms adopt hybrid data strategies that pair their proprietary data with tailored, high-volume, computationally intensive data to drive model accuracy at scale. They retain internal control over the proprietary signals that drive differentiation while partnering with specialized providers such as SandboxAQ to externalize ground-truth physics data generation via validated large quantitative model (LQM) pipelines that combine physics-based simulation, cloud-scale computing and machine learning (ML).6

The outcome: Companies scale and accelerate AI-driven discovery with a strategic focus on core R&D priorities.

Expanding value

Autonomous lab systems help labs run experiments faster and with greater consistency by automating routine tasks and coordinating work across instruments. This expands scientific capacity, reduces variation and enables scientists to focus on the parts of research that need expert judgement.

Non-invasive brain-computer interfaces

Why combination is possible today

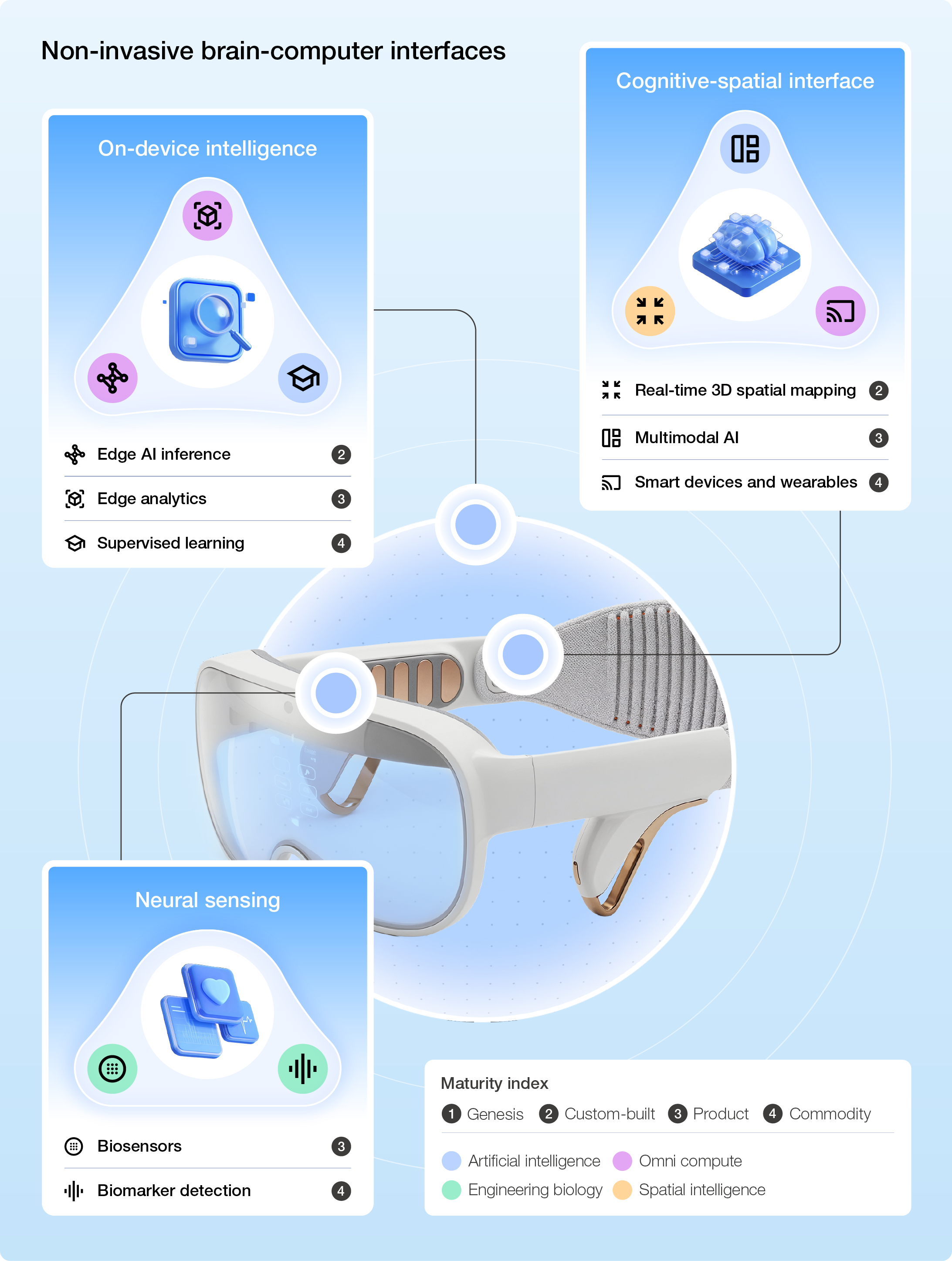

Wearable technology has expanded well beyond fitness trackers and smartwatches. Today’s devices monitor heart rate variability, blood oxygen, skin conductance, sleep architecture and movement patterns. Non-invasive brain-computer interfaces (BCIs) represent a distinct subset of this broader category: wearables designed specifically to measure and interact with brain signals. Where most wearables read peripheral physiological signals from which health states are inferred, non-invasive BCIs can measure attentional states more directly and accurately, expanding the capacity for human-machine interaction.

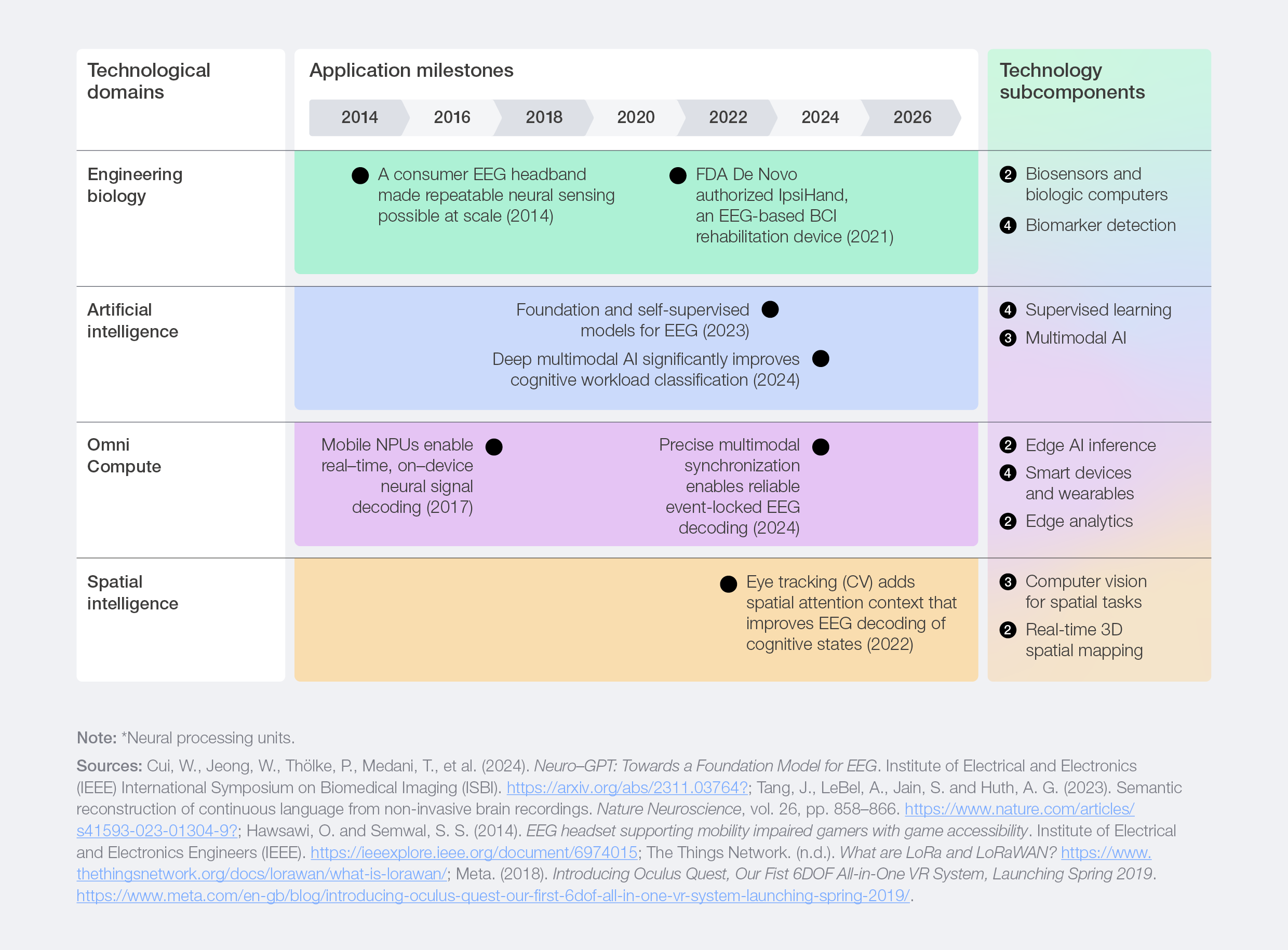

Five years ago, non-invasive BCIs were largely confined to controlled environments where electroencephalogram (EEG) systems required gel electrodes and lengthy calibration sessions to work. Even so, signal quality was too low and inconsistent for any applications beyond basic research. What has changed is not any single breakthrough, but the simultaneous maturation of a stack of technologies, as shown in Figure 16.

Engineering biology and omni compute advancements have enabled hands-free, wearable headsets that capture more consistent brain signals and transmit them safely and securely to other devices. AI has improved how those signals are interpreted and cleaned, reducing noise and variability. Meanwhile, spatial intelligence now enables more precise tracking and environment mapping, so brain activity can be accurately correlated with where people are looking or acting. Building on this, advancements in robotics now allow these interpreted brain signals to drive physical systems, where continuous closed-loop correction stabilizes movement even when brain inputs are imperfect.

Altogether, it means non-invasive BCIs can now interpret brain signals reliably enough to be useful, in form factors light enough to wear and robust enough to function in uncontrolled, real-world settings. Over time, non-invasive BCIs are likely to move beyond standalone devices and become an embedded sensing layer within broader wearable and ambient computing systems.

Figure 16: Subcomponents propelling non-invasive BCIs to their current maturity

Non-invasive BCI technology is evolving in two main directions of use. One focuses on letting people control devices by translating brain signals into commands. The other focuses on sensing brain patterns to understand a person’s state, such as focus, stress, fatigue or engagement, and adjusting devices or interfaces in response. The sensing direction is particularly relevant in high-stakes operational environments where cognitive load, situational awareness and decision quality are critical constraints.

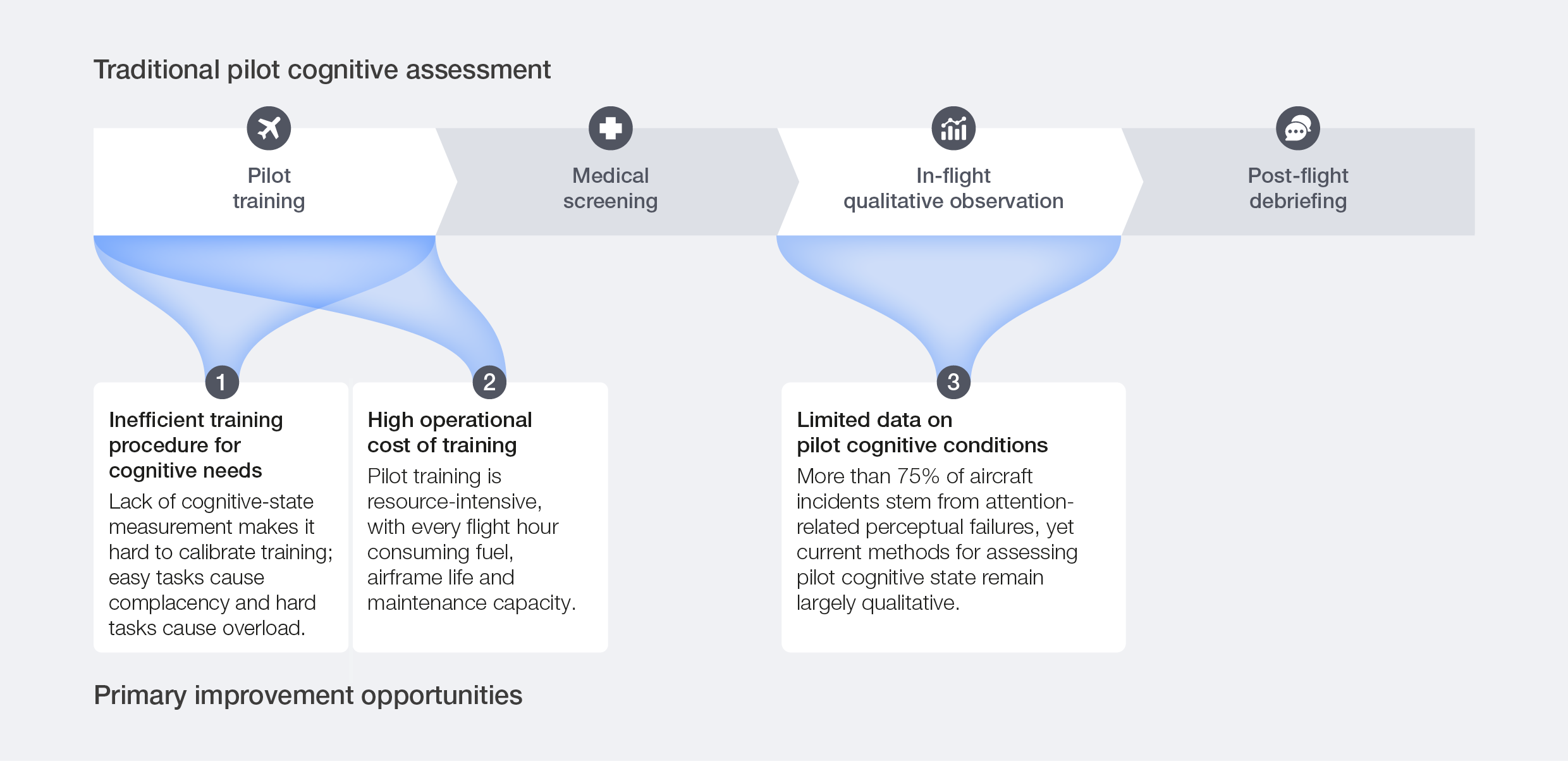

Shifting bottlenecks

Aviation represents a useful test case for non-invasive BCIs because its constraints are well documented, its training protocols are already heavily instrumented and its safety requirements demand objective, real-time measurement of the operator’s cognitive state. Although applications are expanding across consumer electronics, healthcare, gaming, automotive and other sectors, the following analysis focuses specifically on the use of non-invasive BCIs for pilot cognitive assessment and adaptive support in aviation.

Figure 17: Traditional pilot cognitive assessment – key improvement opportunities

The development and adoption of non-invasive BCIs bring new advancements to these opportunities:

Boost cognitive understanding: BCIs allow more granular, real-time interpretation of cognitive states, so systems can adapt to individual physiological needs. This deeper understanding enhances human-machine collaboration and strengthens decision-making in environments where cognitive load and attention are critical constraints.

Adaptive access: Non-invasive BCIs can provide reliable communication pathways for individuals with complex motor or speech impairments, expanding access to digital services and assistive technologies. By translating neural activity into actionable commands, these tools unlock new forms of autonomy, inclusion and participation in social, professional and care environments. While this application extends beyond aviation, it illustrates how the same underlying technology stack serves fundamentally different use cases depending on which constraint it targets.

Novel interfaces: BCIs enable more natural interaction by allowing users to issue commands directly through neural sensing rather than relying on explicit, repetitive actions. In augmented reality (AR)/extended reality (XR) and busy environments, this can support more seamless and context-aware interaction.

Pilot cognitive assessment adapted with non-invasive BCIs faces emerging challenges in learning efficiency, cognitive-load maintenance and the still-limited real-time measurement of cognitive state.

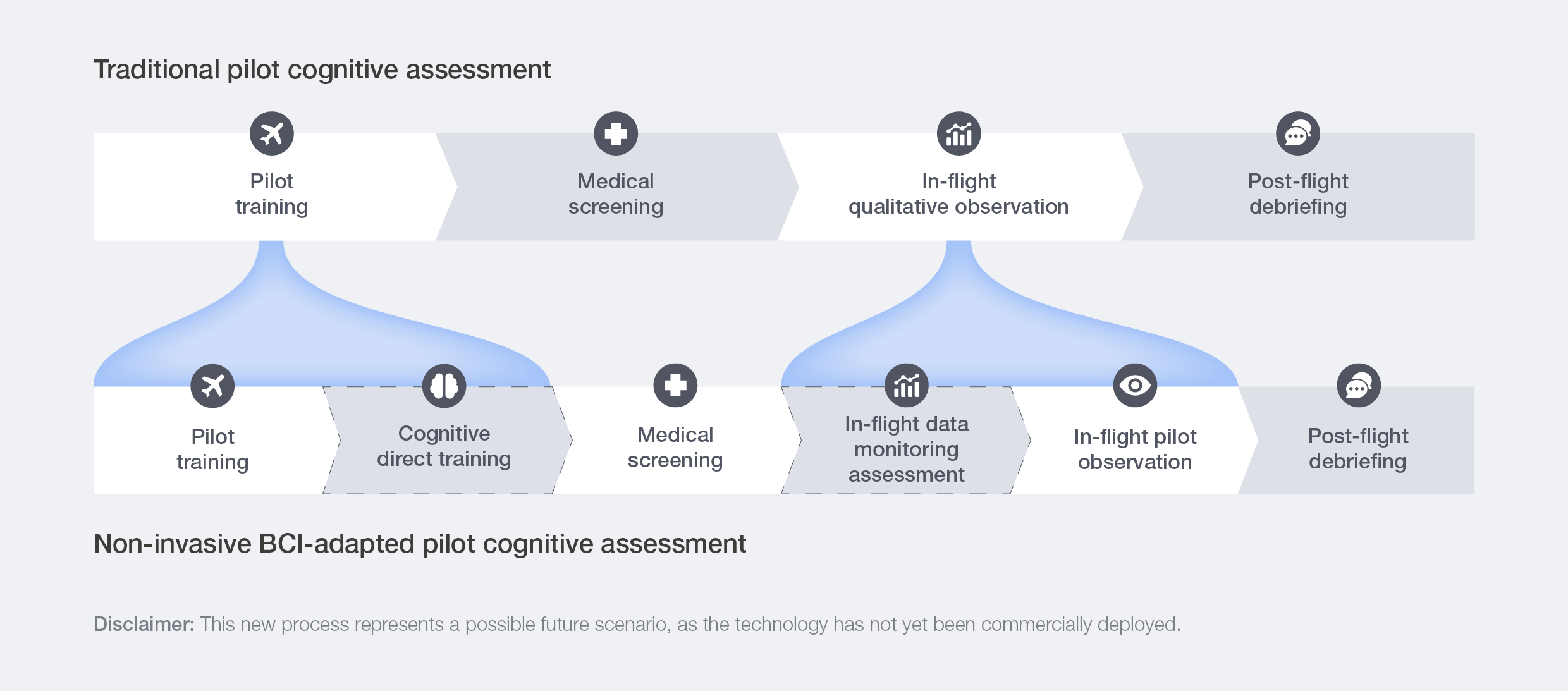

Figure 18: Adoption of non-invasive BCIs could reshape the traditional pilot cognitive assessment value chain

Integrating technologies

While full-scale deployment remains limited, several advanced pilots and research programmes demonstrate real momentum. The following use case shows how non-invasive BCIs have been integrated in practice:

Case study 7: Maintaining optimal cognitive load in flight simulation

The problem: Optimizing training outcomes depends on keeping each trainee within an optimal cognitive-load range, balancing easy and difficult tasks to maximize learning efficiency. Achieving this requires real-time, objective measures of each trainee’s cognitive state so that training difficulty can be adjusted accordingly. A Dutch initiative led by the Royal Netherlands Aerospace Centre in Amsterdam7 is exploring whether real-time brain data can support adaptive training that responds to a pilot’s cognitive state.

The action: The system uses a non-invasive BCI to record EEG and estimate cognitive workload while pilots fly virtual reality (VR) missions. When the system detects overload, it reduces the mission’s difficulty. When the workload drops, it increases the challenge to keep the pilot within an optimal learning zone. A related line of research has demonstrated non-invasive BCIs for cockpit alert monitoring, using EEG to infer whether pilots have consciously registered warning signals.

The outcome: One simulated flight study integrating passive BCI with cognitive modelling reported 87% accuracy in identifying whether pilots had processed cockpit alerts,8 a capability relevant to reducing out-of-the-loop risk on the flight deck. This capability has applications beyond aerospace. The same constraint, learning efficiency under cognitive load, applies to any high-intensity, safety-critical profession that requires structured training, from surgical residency programmes to air traffic control.
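The adaptive loop described in Case study 7 can be illustrated with a minimal sketch. It is not the programme's actual implementation: the class name `AdaptiveTrainer`, the `smooth` helper, the workload scale of 0 to 1, the band edges (0.4 and 0.7) and the ten difficulty levels are all assumptions introduced here for illustration. The sketch assumes an upstream EEG model already produces a workload estimate, and shows only the decision rule: lower difficulty on overload, raise it on underload, and hold it otherwise.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveTrainer:
    """Hypothetical controller that keeps workload inside a target band."""
    low: float = 0.4      # assumed lower edge of the optimal learning zone
    high: float = 0.7     # assumed upper edge of the optimal learning zone
    min_level: int = 1    # easiest mission setting (assumed scale)
    max_level: int = 10   # hardest mission setting (assumed scale)
    level: int = 5        # current mission difficulty

    def update(self, workload: float) -> int:
        """Adjust difficulty from one workload estimate in [0, 1]."""
        if workload > self.high:
            # Overload detected: ease the mission, but not below the floor.
            self.level = max(self.min_level, self.level - 1)
        elif workload < self.low:
            # Underload detected: raise the challenge, up to the ceiling.
            self.level = min(self.max_level, self.level + 1)
        # Inside the band: leave difficulty unchanged.
        return self.level


def smooth(estimates, alpha=0.3):
    """Exponentially smooth noisy workload estimates (assumed pre-processing)."""
    smoothed = []
    current = None
    for value in estimates:
        current = value if current is None else alpha * value + (1 - alpha) * current
        smoothed.append(current)
    return smoothed


if __name__ == "__main__":
    trainer = AdaptiveTrainer()
    # Made-up workload estimates, for demonstration only.
    for workload in smooth([0.9, 0.95, 0.9, 0.5, 0.2, 0.1]):
        print(f"workload={workload:.2f} -> difficulty={trainer.update(workload)}")
```

Smoothing before the decision rule is a design choice rather than something the source describes: single EEG-derived estimates tend to be noisy, and reacting to each one could make the mission difficulty oscillate.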

Expanding value

Non-invasive BCIs help redesign pilot training and in-flight observation. The result is higher training throughput, more accurate cognitive-readiness assessment and more efficient use of instructors, simulators and training hours, all of which maximize learning efficiency for trainees.