Cognitive manipulation and AI will shape disinformation in 2026. Here's how to build resilience

Mis‑ and disinformation are among the top short‑term global risks, according to the latest Global Risks Report. Image: Florian Schmetz/Unsplash

- Advanced AI and synthetic media are driving a systemic global crisis that risks destabilizing modern democracies.

- Opportunistic actors are using psychological profiling and emotional triggers to manipulate public perception and fuel polarization.

- Building societal resilience against this requires investing in robust verification systems alongside proactive education and regulatory frameworks.

To solve big problems and navigate a chaotic world, we first need a clear, shared understanding of the facts. Today, though, technologies such as social media, online chat and artificial intelligence (AI) are allowing opportunistic actors to target people with intentionally manipulative and confusing narratives, often designed to rouse strong emotional responses, like fear, anxiety and anger.

Those spreading disinformation seek financial, political, physical and social-psychological benefits from cognitive manipulation; they shape government and business policy, how marginalized communities are treated, and whether basic human needs are met.

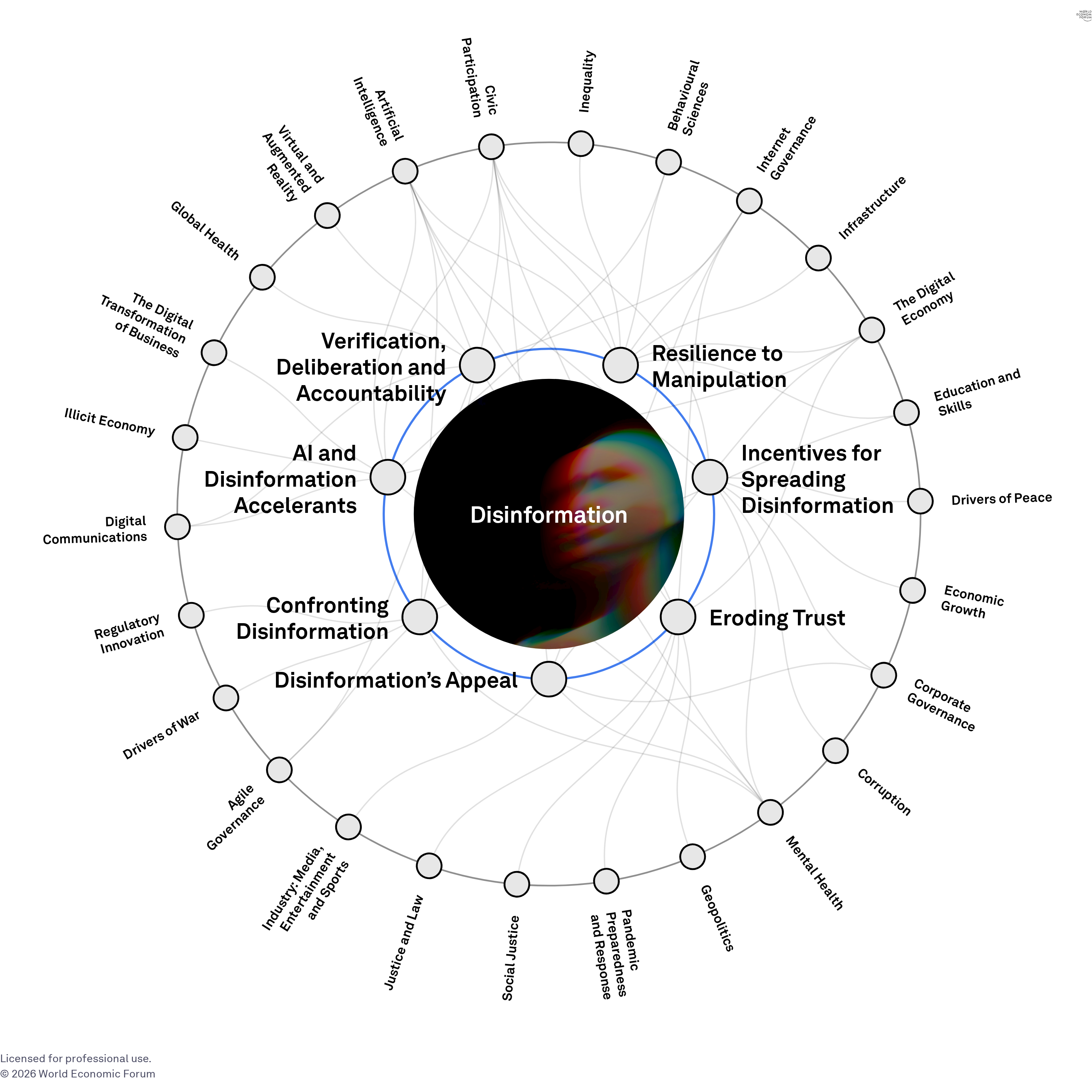

The World Economic Forum’s Global Risks Report 2026 placed mis‑ and disinformation among the top short‑term global risks, alongside geoeconomic confrontation and societal polarization. It is one of the few risks that remains severe over both the two‑ and ten‑year horizons and is seemingly the risk that catalyses or worsens all other risks on the list.

From online risk to systemic crisis

In 2026, the widespread information disorder is a destabilizing systemic force that has the potential to disrupt democracies, erode social cohesion and make existing problems worse, from economic downturns to climate-related crises.

This year offers a critical stress test. We’re facing elections that could indefinitely entrench criminal autocracies, lingering economic uncertainty, increasingly aggressive polarization and imperialism, and more sophisticated AI-mediated narratives. These dynamics are further shaped by AI-generated deepfakes that have become nearly indistinguishable from reality, making it harder to discern what is accurate and offering opportunists plausible deniability to deflect from what is true.

Whether democracies strengthen or weaken will depend on a resurgence of three fundamental pillars that have collapsed with the proliferation of online technology: verification, deliberation and accountability. Communities can build resilience to disinformation if they invest in collective trust for what is true (verification), afford a safe space for authentic curiosity, discussion and debate (deliberation), and hold unlawful opportunists accountable for their harms (accountability).

Emotional weaponization of information

Some AI systems and opportunistic influencers actively manipulate content to provoke strong emotional reactions, using behavioural and psychological profiling to tailor messages that appeal to or instigate a specific reaction from targeted groups. This results in content that provokes anger or fear instead of informing the reader.

Those susceptible to emotional manipulation can be easily identified with micro-targeting, which uses self-reported online data to reveal personality type. Once they are identified, messaging is selected because it resonates emotionally and will likely be shared because it affirms prior beliefs, stirs up anger or resentment, or is considered humorous. This amplifies polarization and expands the reach of disinformation to broader audiences.

These effects are further exacerbated by the incentive structures of major tech media platforms, whose algorithms reward engagement, often financially and with narcissistically soothing dopamine hits. As a result, outrage travels faster, triggering immediate sharing before fact-checking can occur.

Evidence from the 2024-2025 electoral cycle shows how AI systems optimized content for maximum emotional impact across multiple countries. Similarly, a study from 2024 found that young voters on TikTok were regularly exposed to misleading political content, including AI-generated and fabricated videos of political leaders. The presence of such content alongside genuine posts made it harder for users to distinguish parody from fact in fast-moving, short-form social feeds.

Synthetic media

Deepfakes have crossed a critical threshold in 2026. They have improved, shed their earlier tell-tale glitches and are now accessible to anyone with a smartphone. With the rapid growth of generative AI models, the ways in which information can be misused have evolved significantly.

During elections in 2024 and 2025, cloned voices and visual persona deepfakes became a more prevalent risk and a live feature of democratic politics. In Ireland’s 2025 presidential election, a deepfake video falsely depicted the eventual winner withdrawing his candidature, and included fake footage of national broadcasters “confirming” the news. This was released just days before polling day. The Netherlands likewise saw roughly 400 AI-generated synthetic images used to attack political counterparts.

Evidence seems mixed as to whether deepfakes are more effective at manipulation than other forms of communication. However, there is growing evidence that deepfakes negatively affect voters’ perceptions of targeted candidates.

With 2026 densely packed with elections across continents, and particular nations at risk of irreparable democratic backsliding, the speed and scale of synthetic media are a compounding risk. In fact, just knowing deepfakes exist can make us doubt things we read and see, even the truth.

Resilience to disinformation

Disinformation has been a part of society throughout history, and will likely persist in the future. But its sophistication and degree of reach seem amplified with today’s technology. That’s why we have to build resilience — finding ways to protect ourselves from the fallout without sacrificing our fundamental right to speak our minds.

Resilience can arise from community investment in verification, deliberation and accountability systems.

Resilience can also arise from education, as in Finland, where grade school children are taught to spot and assess when they are being targeted by harmful manipulative information. More broadly, it can emerge from education that helps people independently assess six fundamental questions: who is the person trying to communicate with me; how and why did they find me; what do they gain from communicating with me; can I verify the accuracy of their message; am I at risk of being harmed if I embrace their message; and could I harm others if I share the message further?

Resilience can also be strengthened through narrative inoculation, which prepares audiences for potential manipulation by exposing the opportunists’ game before it gains traction.

A growing body of research offers tools that might improve our ability to unmask or flag false information. Deepfake detection is becoming possible by layering several methods. Deepfakes often contain inconsistencies, such as mismatched noise patterns or colour shifts in images and lip-sync errors or unnatural blinking in videos, although these are increasingly difficult to catch. Even the latest generation deepfakes leave pixel-level markings that forensic tools can spot.

Following distribution patterns can also be helpful, since malicious deepfakes commonly spread via bot and troll networks. These networks can be tracked through account metadata (e.g. creation dates, posting rhythms) and behaviour. Such measures are particularly helpful around elections, financial scams, and conflict and political reporting, as they offer a more effective approach than simply relying on the detection skills of individual users.
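As a rough illustration of the metadata signals mentioned above, the sketch below flags accounts that are both newly created and post at near-constant intervals. It is a minimal example under stated assumptions, not a description of any platform's actual method: the `Account` structure, the function names and both thresholds (`max_age_days`, `max_cv`) are hypothetical, and a real system would combine many more signals.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, pstdev


@dataclass
class Account:
    """Hypothetical record of the metadata named in the text."""
    handle: str
    created: datetime
    post_times: list[datetime]


def interval_regularity(post_times):
    """Coefficient of variation of the gaps between posts.

    Values near 0 mean an almost perfectly regular rhythm.
    Returns None when there are too few posts to judge.
    """
    times = sorted(post_times)
    gaps = [(b - a).total_seconds() for a, b in zip(times, times[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)


def flag_suspicious(accounts, first_share, max_age_days=30, max_cv=0.1):
    """Return handles of accounts that are new AND post very regularly.

    Both thresholds are illustrative assumptions, not validated values.
    """
    flagged = []
    for acc in accounts:
        is_new = (first_share - acc.created) <= timedelta(days=max_age_days)
        cv = interval_regularity(acc.post_times)
        is_regular = cv is not None and cv <= max_cv
        if is_new and is_regular:
            flagged.append(acc.handle)
    return flagged


# Example with made-up accounts sharing the same video.
first_share = datetime(2026, 3, 10, 12, 0)
start = datetime(2026, 3, 10, 12, 0)
accounts = [
    # Created days before the video appeared, posts exactly every 10 minutes.
    Account("fresh_and_regular", datetime(2026, 3, 1),
            [start + timedelta(minutes=10 * i) for i in range(6)]),
    # Long-standing account with an uneven posting rhythm.
    Account("old_and_irregular", datetime(2019, 5, 4),
            [start + timedelta(minutes=m) for m in (0, 3, 95, 100, 400)]),
]
flagged = flag_suspicious(accounts, first_share)
print(flagged)
```

Requiring both signals together is a deliberate choice in this sketch: either one alone (a new account, or a regular poster such as a scheduled news feed) is common among legitimate users.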

It is critical to treat disinformation as a governance and risk‑management issue, not just a content moderation task. The EU AI Act reflects this shift through its risk-tiered mandates. Article 50 requires labelling of AI-generated/deepfake content and disclosure of synthetic interactions, enforceable from August 2026, with fines for breaching these transparency obligations of up to €15 million or 3% of global annual turnover. The Act’s emphasis on risk categorization, documentation, human oversight and post‑market monitoring provides a template for containing disinformation at scale.

The 2026 stress test

The cognitive impact of disinformation will define how societies evolve in the coming years. As the Global Risks Report 2026 highlights, disinformation exacerbates every major risk by eroding trust and magnifying shocks, from elections to economic crises. The year 2026 will test whether institutions, societies and platforms can adapt fast enough to survive the disinformation crisis and uphold the capacity to verify, deliberate and hold unlawful opportunists accountable.

License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.

Jovan Jovanovic

March 11, 2026