Here's how to get the $7 trillion AI hardware buildout right

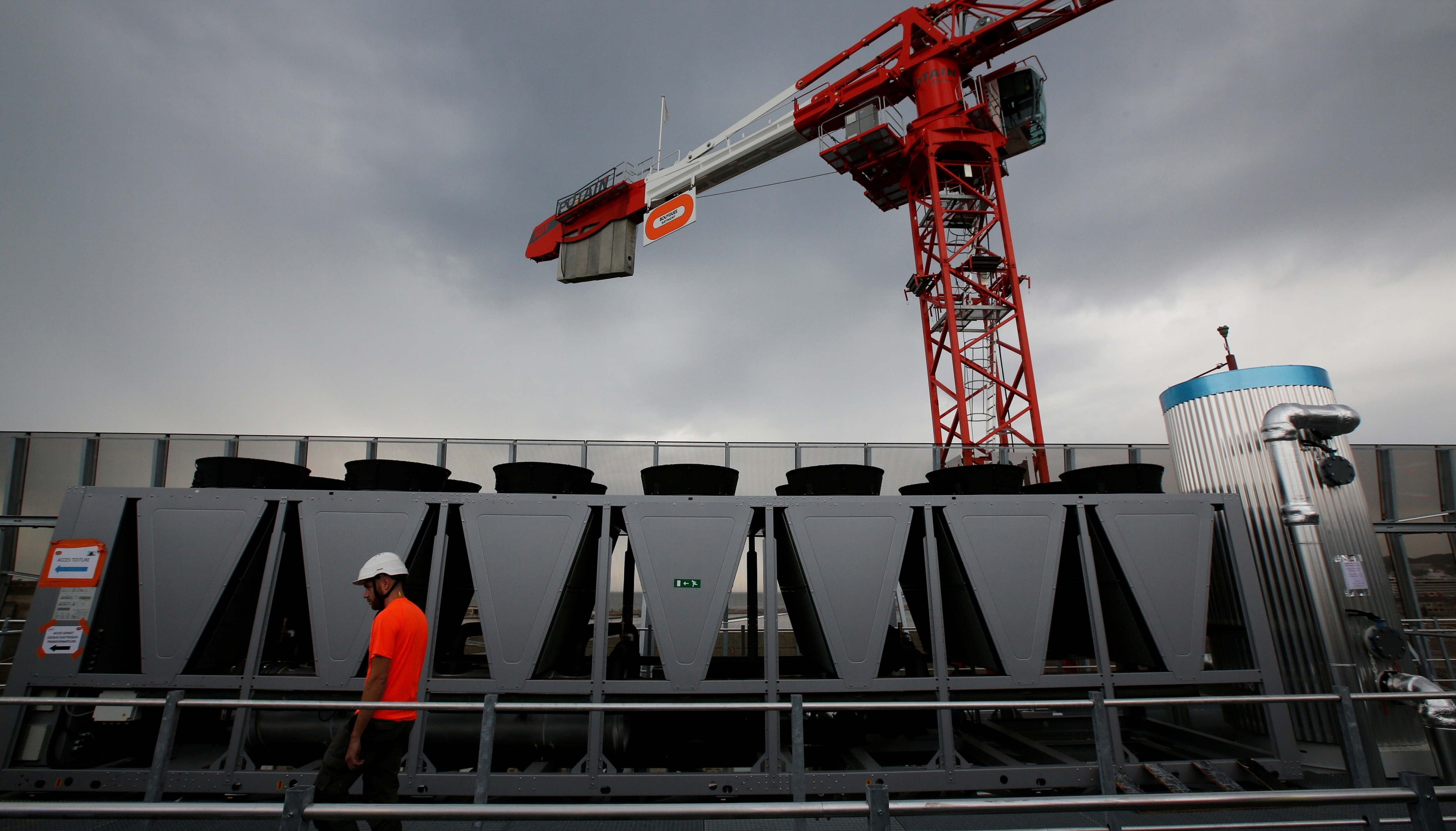

Hardware is king in the ongoing global AI buildout – and getting it right is crucial. Image: REUTERS/Jean-Paul Pelissier

Navin Chaddha

Managing Partner, Mayfield

- An estimated $7 trillion in data centre investment is expected through 2030.

- Much of that is spent on cooling, power generation and other adjacent hardware.

- Getting this buildout right requires businesses, governments and international bodies to build coordination and cooperation into the system early.

We are in the middle of the largest physical infrastructure buildout in modern history. The choices leaders make now will determine whether this creates shared prosperity or deepens global divides.

For two decades, software ate the world, but that era is over. Chipmaker NVIDIA now stands at over $4 trillion in market cap, surpassing every software company on the planet. Hardware is reclaiming the foundation of the digital economy.

McKinsey & Company projects $7 trillion in data centre investment through 2030, with $5.2 trillion dedicated to AI workloads alone. The five largest US cloud and AI infrastructure companies have committed $660-690 billion in capital expenditure for 2026, nearly double 2025 levels. Tech capex now stands at 1.9% of GDP, a figure that rivals the combined scale of the Interstate Highway System and the Apollo Program.

The buildout is global. The EU has unveiled a €200 billion AI Continent Action Plan. Saudi Arabia has committed over $40 billion to AI infrastructure. China announced a $47.5 billion state-led semiconductor fund to build domestic infrastructure and cut foreign dependencies. The Federal Reserve found that AI-related trade drove nearly half of all merchandise trade growth in the first half of 2025.

The hardware bottlenecks

AI clusters that once ran on hundreds of GPUs now demand tens of thousands. The bottlenecks slowing this shift are no longer just silicon; they now include heat, power, connectivity, memory and scale-up networking.

Heat

GPUs at full load generate thermal density that air cooling cannot handle. Liquid cooling, immersion cooling and new thermal architectures have moved from experimental options to baseline requirements. The data centre liquid cooling market reached $6.65 billion in 2025, growing at over 20% annually.

Power

Goldman Sachs Research projects data centre power demand will rise 165% by 2030, requiring an estimated $720 billion in grid upgrades. The real limit today is not chip supply. Data centre operators report projects stalling because utilities cannot deliver sufficient power.

Connectivity

Copper interconnects cannot move data fast enough at AI scale. Silicon photonics and high-performance lasers are replacing copper, and entirely new supply chains are being built around materials and manufacturing processes that barely existed five years ago.

Memory

High-bandwidth memory no longer functions as a commodity. GPU performance stalls when memory throughput is insufficient. Architectures that disaggregate and pool memory across clusters could improve data centre design, but they require rethinking how compute and storage work together.

Scale-up networking

Connecting GPUs within a rack differs fundamentally from connecting racks across a cluster. New architectures using custom fabrics and specialized switches can unlock step-function performance gains.

None of these bottlenecks exist in isolation. A data centre can have sufficient power but insufficient cooling capacity. It can have adequate cooling but lack the network fabric to connect GPU clusters efficiently. Each bottleneck creates an opportunity. McKinsey estimates $1.3 trillion, or 25% of the total AI investment, flows to power, cooling and infrastructure. The chips get the headlines, but the whole physical stack is what makes them run.
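As a rough check on the share quoted above, the sketch below divides the $1.3 trillion figure by the $5.2 trillion AI-workload figure cited earlier. Treating $5.2 trillion as the base for "total AI investment" is an assumption, since the article does not name the denominator. The variable and function names are illustrative only.

```python
# Figures quoted in the article, in trillions of US dollars.
AI_WORKLOAD_INVESTMENT_TN = 5.2  # assumed base for "total AI investment"
POWER_COOLING_INFRA_TN = 1.3     # power, cooling and infrastructure


def share_of_total(part: float, total: float) -> float:
    """Return part as a fraction of total."""
    if total <= 0:
        raise ValueError("total must be positive")
    return part / total


infra_share = share_of_total(POWER_COOLING_INFRA_TN, AI_WORKLOAD_INVESTMENT_TN)
print(f"{infra_share:.0%}")  # prints 25%
```

Under that assumption the two figures are consistent with the 25% share stated in the text.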

The economics are also under pressure. Hyperscalers are approaching negative free cash flow. AI services generate approximately $30 billion in revenue against hundreds of billions in infrastructure spend. For AI to spread the way the internet did, inference costs need to fall sharply. That requires either massive efficiency gains in how AI models run or fundamental breakthroughs in the infrastructure itself.

The right path for the AI infrastructure buildout

This pattern has played out before. In the PC era, Intel and Microsoft captured enormous value by controlling the processor and operating system. In the Internet era, Cisco powered the backbone. In the cloud era, Amazon Web Services became the utility layer. In mobile, Apple controlled the integrated stack.

Every major platform shift locks in its infrastructure layer first, then opens up application development. AI is following the same arc, but the dollars are bigger, and the geopolitical stakes are higher.

Two paths are taking shape – one of fragmentation and slowed growth, and one of coordination and collaboration – and the decisions about investment, standards and coordination made in the next 18-24 months will determine which one we are on.

In path one, competing regional blocs each build duplicated infrastructure. Standards diverge. A model trained on US infrastructure cannot easily be deployed to EU infrastructure. Developers choose which markets to serve based on infrastructure compatibility rather than market opportunity. Winner-take-all economics deepen as the regions with the largest infrastructure bases attract the most AI development. The digital gap widens, becoming structural and permanent.

This path takes shape when national governments treat AI infrastructure purely as a competitive advantage rather than a shared challenge. Each nation races to build domestic capacity, duplicating the same expensive investments in power, cooling, and networking. Business leaders, facing near-term cash flow pressure, optimize for their home markets rather than coordinating across borders.

In path two, economies agree on shared standards for AI infrastructure interoperability. Power grids are planned regionally to balance load across borders. Cooling and networking technologies are developed collaboratively, cutting duplicative R&D spend. Smaller economies plug into shared infrastructure rather than building everything domestically. Sustainability becomes achievable through coordinated investment in renewable energy. AI becomes something anyone can build on, rather than something only the largest players can access.

This path requires real commitment from three groups:

Business leaders must commit to long-term capital allocation despite uncertainty and near-term losses. Cross-industry collaboration on shared bottlenecks like cooling, power and interconnects can reduce costs and accelerate deployment. Training and reskilling workers are business imperatives.

National governments must accelerate grid permitting and investment frameworks. Public-private partnership models can share infrastructure costs and risks. Regional coordination on standards, energy sharing and supply chain resilience turns competitive buildout into a collaborative advantage.

International bodies must develop coordinated governance frameworks that prevent fragmented regional standards while respecting national sovereignty. Technology transfer mechanisms can ensure emerging economies are not locked out of the AI economy. Climate-aligned investment standards need enforcement mechanisms that work across borders.

The $7 trillion shift turns on whether we build AI infrastructure that serves shared prosperity or concentrates power in the hands of a few.

License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
