Voice tech and the question of trust

Image: Andres Urena/Unsplash

Voice technology is becoming ubiquitous, integrated into tools and services offered by major corporations, startups, governments, and public sector players.

But if these tools are to gain broad public acceptance, the organizations that deploy them will need to demonstrate that the tools are being used in a trustworthy manner, with respect for human rights.

Big tech companies clearly see the value, and they continue to develop new abilities when it comes to collecting voice data for advertising and product recommendations. Tech titan patent activity reflects this, including new Amazon patent filings describing how a “voice sniffer algorithm” could be used on a variety of different devices to analyze audio in near-real time when it hears words like “love,” “bought,” or “dislike.” Google has patent applications describing how audio and visual signals can be used in a smart home context. This may seem harmless and even helpful in simplifying everyday tasks, but it also has serious implications for access to and use of personal data.

According to a 2018 PwC report, of the 25% of Americans who have no plans to shop with their voice in the future, more than 90% said lack of trust was a factor. These statistics are reinforced by widely publicized cases in which the technology was used contrary to personal expectations. For example, national news outlets covered the story of a private conversation between spouses that was recorded and sent to the husband’s employee. While most experts predict a positive growth trajectory for virtual assistants, this trust gap will likely hinder truly universal adoption.

Three foundational issues sit at the heart of trustworthiness in voice tech and will need to be addressed: data protection and transparency, privacy, and fraud.

There is a significant body of data associated with the development and use of voice technology. While the words spoken by a person to the interface may be the most obvious type of information being collected, analyzed, and (possibly) stored, there are several other categories that should be considered, including background noise captured, metadata, biometric data and voice prints, derivative data (for example, emotional information that can be determined based on the tone and pitch of the voice), not to mention the body of data collected to develop and train the voice algorithm.
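The categories above can be treated as a simple data inventory. The following is a minimal sketch in Python, not a description of any real product: the names `VoiceDataCategory`, `VoiceDataItem`, and `stored_categories`, and the sample entries, are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class VoiceDataCategory(Enum):
    """Hypothetical labels for the data categories named in the text."""
    SPOKEN_CONTENT = "spoken content"
    BACKGROUND_NOISE = "background noise"
    METADATA = "metadata"
    BIOMETRIC = "biometric data and voiceprints"
    DERIVATIVE = "derivative data"
    TRAINING = "training data"


@dataclass(frozen=True)
class VoiceDataItem:
    category: VoiceDataCategory
    description: str
    stored: bool  # assumption: True if the item is retained after processing


def stored_categories(items):
    """Return the sorted names of categories with at least one retained item."""
    return sorted({item.category.name for item in items if item.stored})


# Illustrative entries only; not taken from any real system.
inventory = [
    VoiceDataItem(VoiceDataCategory.SPOKEN_CONTENT, "transcript of a request", stored=False),
    VoiceDataItem(VoiceDataCategory.BIOMETRIC, "voiceprint used for login", stored=True),
    VoiceDataItem(VoiceDataCategory.DERIVATIVE, "emotion inferred from tone and pitch", stored=True),
]

print(stored_categories(inventory))
```

Listing each category explicitly, together with whether it is retained, makes it easier to see which kinds of voice data a service actually holds and would therefore need to protect.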

The key principles of data protection have long been recognized and are embodied in the Fair Information Practices. Legal frameworks around the world, such as the EU’s General Data Protection Regulation (GDPR), have codified and expanded upon these principles, often with severe penalties for non-compliance. Some jurisdictions, including the U.S. State of Illinois, have even pursued laws specifically covering biometric information, such as voiceprints. These frameworks provide a baseline level of protection for people who interact with voice-driven tools, though that baseline is often not enough.

To complement these legal requirements, professionals in the field of privacy engineering have been developing practical approaches to incorporating data protection into tools and services. Additionally, the U.S. National Institute of Standards and Technology (NIST) is currently developing a privacy framework that is likely to extend beyond notions of legal compliance and provide a common language for approaching and mitigating potential threats associated with the increased collection of information, including voice information.

By building tools in a way that respects “privacy by design,” entities can make sure they are appropriately considering data protection from the outset. One example is the incorporation of blockchain as a potential decentralized solution for voice data protection. French startup Snips is leaning into this idea of decentralization by building its software to run 100% on-device, independently of the cloud. Unlike more mainstream, centralized virtual assistants, Snips knows nothing about its users.

The more data collected through the use of voice technologies, the greater the risk that information could be disclosed to governments or compromised by bad actors. This is a particularly ominous notion when voice data-specific human rights have yet to be defined.

“To the extent platforms store biometrics, they are vulnerable to government demands for access and disclosure,” noted Albert Gidari, director of privacy at the Stanford Center for Internet and Society. What government actors can then do with that information is potentially boundless. One example is the United States National Security Agency (NSA), which was recently revealed to have technology that analyzes the physical and behavioral features that make each person’s voice distinctive, such as the pitch, shape of the mouth, and length of the larynx. An algorithm creates a dynamic computer model of the individual’s vocal characteristics: their voiceprint.

Globally, similar discussions are taking place at the government level. HMRC, the United Kingdom’s tax authority, has collected 5.1 million taxpayers’ voiceprints as part of its new voice identification policy. Advocacy groups reported HMRC’s system repeatedly asks users to create their “voice ID” without giving them instructions on how to opt out. In China, the government has been using voice data in conjunction with other biometric data and tracking technologies to target and surveil minority communities.

The existence of this data also provides a new attack surface for bad actors. With major data breaches in the news every week, unauthorized access to databases of voice data could cause immeasurable damage, particularly as companies increasingly turn to this type of biometric data to authenticate users for access to their personal accounts.

International law and policy are starting to take shape to provide guidance in these areas. The Necessary and Proportionate principles provide a rights-based framework to limit government access to personal information, though few countries have adopted them. The Digital Geneva Convention is currently a draft private sector effort. Meanwhile, public sector forces are urging the UN Human Rights Committee to issue a new General Comment on the right to privacy under Article 17 of the International Covenant on Civil and Political Rights (ICCPR), and the Budapest Convention on Cybercrime is keenly focused on the topic.

It is clear there is a need for more and better national surveillance and cybersecurity laws and policies to help codify protections for these categories of personal data, including biometric information.

Deepfakes and fraud are already surging problems in the world of online video, and U.S. lawmakers are sounding the alarm about similar issues in the audio space.

Fraud is a growing problem in voice technology, with voice fraud rates growing more than 350% since 2013 across a variety of industries. Today, the same deep-learning technology that enables the modification of videos without detection can also be used to create audio clips that sound like real people.

Large technology firms around the world are developing voice-cloning tools, such as the “Deep Voice” AI algorithm developed by Chinese firm Baidu, which can reportedly clone a voice from just 3.7 seconds of audio. Over the course of a year, the technology went from requiring about 30 minutes of audio to create a fake clip to, now, just seconds.

Meanwhile, the need to fight fraud is driving innovation. Pindrop, for example, is a startup that recently raised $90M to bring its voice-fraud protection to IoT devices and the European continent. The company claims its platform can identify even the most sophisticated fakes by analyzing almost 1,400 acoustic attributes, which enables it to effectively verify a legitimate voice file.

Academia is also leaning in to help prevent fraud. MIT Technology Review reported Berkeley researchers are able to “take a waveform and add a layer of noise that fools DeepSpeech, a state-of-the-art speech-to-text AI, every time. The technique can make music sound like arbitrary speech to the AI, or obscure voices so they aren’t transcribed.”
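To give a sense of what "a layer of noise" means in practice, here is a minimal Python sketch. It is not the researchers' method: their technique optimizes the noise against the speech-to-text model, whereas this example adds random noise and only illustrates how small a bounded perturbation can be relative to the signal. The function names, the 440 Hz test tone, and the `epsilon` value are all assumptions made for illustration.

```python
import math
import random


def add_bounded_noise(waveform, epsilon, seed=0):
    """Add uniform random noise in [-epsilon, epsilon] to each sample.

    Samples are assumed to lie in [-1.0, 1.0] and are clipped to that range.
    """
    rng = random.Random(seed)
    return [max(-1.0, min(1.0, s + rng.uniform(-epsilon, epsilon))) for s in waveform]


def snr_db(clean, noisy):
    """Signal-to-noise ratio in decibels between a clean and a perturbed waveform."""
    signal = sum(s * s for s in clean)
    noise = sum((n - s) ** 2 for s, n in zip(clean, noisy))
    return float("inf") if noise == 0 else 10 * math.log10(signal / noise)


# Hypothetical input: one second of a 440 Hz tone sampled at 16 kHz.
sample_rate = 16000
clean = [0.5 * math.sin(2 * math.pi * 440 * t / sample_rate) for t in range(sample_rate)]
noisy = add_bounded_noise(clean, epsilon=0.005)

max_change = max(abs(n - s) for s, n in zip(clean, noisy))
print(f"largest per-sample change: {max_change:.4f}")
print(f"signal-to-noise ratio: {snr_db(clean, noisy):.1f} dB")
```

The point of the sketch is that every sample moves by a very small amount, so the perturbed audio stays close to the original. An adversarial attack chooses those small changes deliberately rather than at random.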

There are steps that can be taken to ensure privacy in voice-driven platforms, provide data security on the backend, and fight the risk of fraud. This means increasing access to information and education to help inform the general public, perhaps including new curriculum additions to global school systems. It also means meaningful legislation and genuine public/private sector collaboration.

This article was written on behalf of the Global Future Council on Consumption.

License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.

Stay up to date:

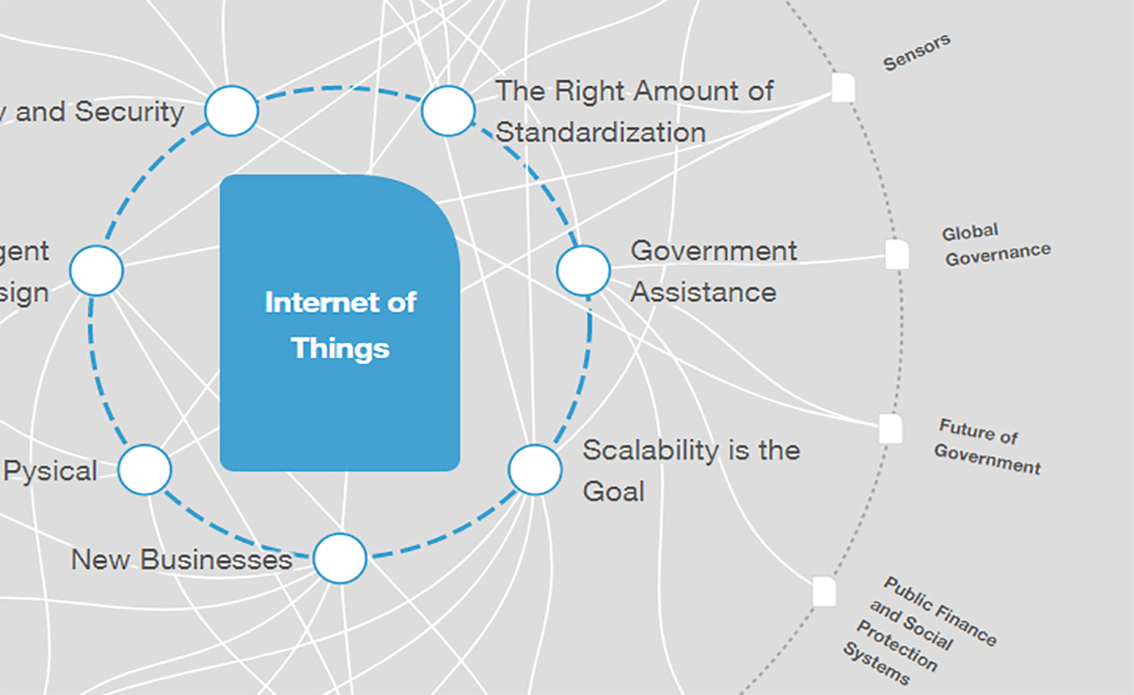

Internet of Things

Related topics:

Forum Stories newsletter

Bringing you weekly curated insights and analysis on the global issues that matter.

More on Emerging TechnologiesSee all

Dr Gideon Lapidoth and Madeleine North

November 17, 2025