Governments must build trust in AI to fight COVID-19 – Here’s how they can do it

Coronavirus has spurred efforts to use AI to prevent infections. Image: CDC illustration via REUTERS

- Artificial intelligence has been an important tool in tracking and tracing contacts during COVID-19.

- As the crisis continues, citizens might be more willing than ever to forgo their civil liberties and data protections to fight coronavirus.

- To maintain trust, governments must put much-needed AI governance architectures in place.

AI has become a key weapon in tracking and tracing cases during this pandemic. Deploying those technologies has sometimes meant balancing the need to conquer the virus with the conflicting need to protect individual privacy. As the initial crisis gives way to long-term policies and public health practices, governments will need to build trust in AI to ensure future protections can be deployed and maintained.

AI’s surveillance superpowers are being used to help break the chains of viral transmission across the globe. Russia, for instance, maintains COVID-19 quarantines through large-scale monitoring of citizens with CCTV cameras and facial recognition.

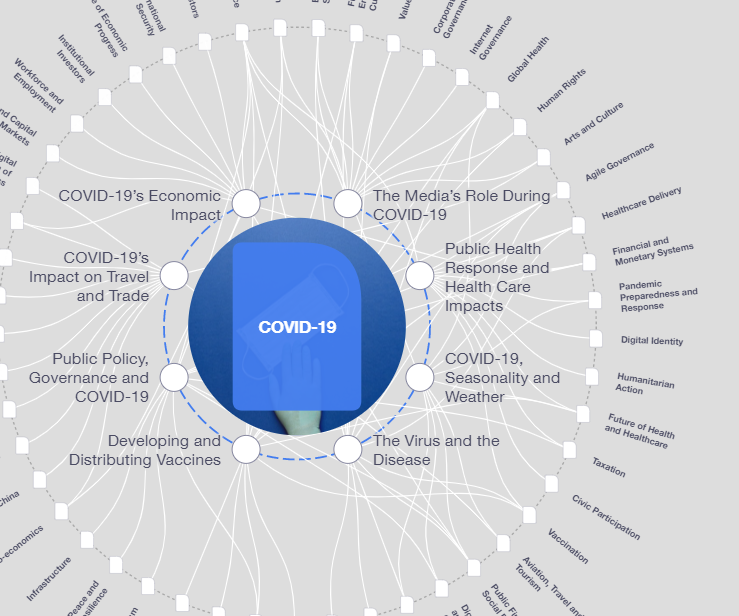

What is the World Economic Forum doing about the coronavirus outbreak?

China is using AI-powered drones and robots to detect population movement and social gatherings, and to identify individuals with a fever or who aren’t wearing masks.

Meanwhile, Israel is using AI-driven contact tracing algorithms to send citizens personalised text messages, instructing them to isolate after being near someone with a positive diagnosis.

The fuel for much of this life-saving AI is personal data. In fact, South Korea’s high-octane blend of data from credit card payments, mobile location, CCTV, facial scans, temperature monitors and medical records has been a key part of a broader strategy to trace contacts, test aggressively and enforce targeted lockdowns. The combination of these measures has helped the country flatten its curve. Late into its outbreak, the country still had not suffered more than eight deaths on any one day.

Despite these benefits, we must still approach privacy seriously, carefully and pragmatically. That holds even as citizens might be more willing than ever to forgo their civil liberties, and even as data protection regulators begrudgingly concede that extraordinary times can outweigh even the strongest of privacy rights.

Where privacy is curtailed, it’s important that all dimensions of AI ethics are considered to maintain public trust in its use over the medium to long term. If organizations hope to secure the public’s continued participation, they must treat the data that people willingly offer for the social good with the utmost responsibility.

As a vaccine is at least 18 months away, long-term solutions will be needed that assist with tracking efforts while preserving public trust and cooperation.

To be sure, some governments are working with telecoms and Big Tech companies to access aggregate anonymised location data showing trends of movement. Additionally, Google and Apple recently agreed to an unprecedented collaboration to enable anonymous (and voluntary) global contact tracing.

Still, opt-in initiatives can create gaps and vulnerabilities. For instance, Singapore reported that over one million people had downloaded its TraceTogether app. However, at least 75% of the country’s population of 5.5 million would need to sign up for the app to be effective.
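To make the size of that gap concrete, the figures quoted above can be run through a short calculation. This is only a rough sketch: the function name `adoption_gap` is hypothetical, and "over one million" downloads is treated as a lower bound of exactly one million.

```python
# Figures quoted in the article; DOWNLOADS is a lower bound ("over one million").
POPULATION = 5_500_000
REQUIRED_SHARE = 0.75
DOWNLOADS = 1_000_000


def adoption_gap(population: int, required_share: float, downloads: int) -> tuple[int, int]:
    """Return (users required, users still missing) for an opt-in app."""
    required = round(population * required_share)
    shortfall = max(required - downloads, 0)
    return required, shortfall


required_users, shortfall = adoption_gap(POPULATION, REQUIRED_SHARE, DOWNLOADS)
current_share = DOWNLOADS / POPULATION

print(f"Users required: {required_users:,}")
print(f"Still missing:  {shortfall:,}")
print(f"Current share:  {current_share:.0%}")
```

On these assumptions, the app would need a little over four million users, while reported downloads cover less than a fifth of the population.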

Governments must put in place appropriate AI governance architectures that enable the creation of long-term solutions to conquer COVID-19 and other potential health crises. These include:

- Time limits. Use of personal data should be limited to the duration of this crisis, after which the data should be deleted.

- Use limits. Use of personal data should be restricted to specific and limited use cases such as contact tracing or quarantine enforcement.

- Fairness and inclusiveness. AI systems must treat all citizens equally regardless of gender, ethnicity and other protected classes. This requires governments to be extremely conscious of what datasets are feeding their algorithms. All citizens’ data needs to be included, especially as minority groups may be disproportionately impacted by COVID-19.

- Transparency. The types of personal data being used, and the purposes they are used for, must be (over)communicated to citizens to build trust. AI decision-making algorithms should only be used with clear explanations of their rationale.

- Accountability. Use of personal data and AI should be accountable to named and visible figures in government and their agencies.

- Oversight. There should be oversight by both parliamentary and independent bodies to ensure the implementation of responsible AI.
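The first two principles, time limits and use limits, are the ones most directly expressible in software. As an illustration only, they might be sketched as simple checks like the ones below. Every name here (`ALLOWED_PURPOSES`, `DataRecord`, `may_use`, `purge_after_crisis`) is hypothetical, and the sketch assumes that some accountable authority sets an official end date for the crisis; it does not describe any government’s actual system.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Use limits: the only purposes named in the list above (hypothetical identifiers).
ALLOWED_PURPOSES = frozenset({"contact_tracing", "quarantine_enforcement"})


@dataclass(frozen=True)
class DataRecord:
    subject_id: str
    collected_on: date


def may_use(record: DataRecord, purpose: str, today: date,
            crisis_end: Optional[date]) -> bool:
    """Allow use only for a listed purpose and only while the crisis lasts.

    crisis_end is None while no end date has been declared (an assumption).
    """
    within_time_limit = crisis_end is None or today <= crisis_end
    return purpose in ALLOWED_PURPOSES and within_time_limit


def purge_after_crisis(records: list[DataRecord], today: date,
                       crisis_end: Optional[date]) -> list[DataRecord]:
    """Time limits: return the records that may be kept; none once the crisis is over."""
    if crisis_end is not None and today > crisis_end:
        return []
    return list(records)


record = DataRecord(subject_id="example-1", collected_on=date(2020, 4, 1))
print(may_use(record, "contact_tracing", date(2020, 5, 1), None))
print(may_use(record, "marketing", date(2020, 5, 1), None))
```

Checks of this kind cover only the mechanical parts of the list. Fairness, transparency, accountability and oversight depend on people and institutions rather than on code.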

These are extraordinary times that call for extraordinary measures, yes. But governments and businesses must learn how to manage privacy and trust to help fight this crisis in the months ahead and other public health crises to come.

Appropriate ethical AI architecture can ensure that we leverage the best that AI can offer to the present situation without exploiting an anxious public’s desire to find fast solutions. Good AI governance was needed long before COVID-19 arrived. Now, it’s that much more critical.

License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
