How to design safer digital systems for children in the age of AI

Children and young people are living at the frontier of the AI-driven internet. Image: REUTERS/Carlos Osorio

Agustina Callegari

Initiatives Lead, Technology Governance, Safety and International Cooperation, World Economic Forum

- GenAI is accelerating the panoply of digital threats faced by children.

- Governments, including the United Kingdom, Australia, Singapore and Spain, are responding with a wave of online safety measures, including stronger duties for platforms and debates over age assurance.

- Children and young people must be included as partners in designing regulation so that the rules, education and protections reflect the realities of how they live, learn and connect online.

AI tools are becoming part of the everyday fabric for children whose lives are already deeply digital, with gaming, social media, messaging and schooling all running through online platforms.

Pew Research has found that 64% of teens say they use an AI chatbot, while three in 10 say they use one every day. In addition to chatbots, there are also AI features embedded directly in the apps and feeds they already rely on, such as tools that generate bedtime stories for the youngest, or browser extensions that surface answers in online quizzes.

That speed of uptake matters, because safeguards, literacy and governance are lagging. Children are still developing critical thinking, impulse control and social identity, and they have less ability to understand opaque systems, persuasive design, or data practices. AI can amplify familiar online harms of harassment, impersonation and exploitation while also introducing new risks, from realistic synthetic media to automated grooming and AI companions that blur healthy boundaries.

Australia's landmark teen social media ban towards the end of last year caught the headlines, but other governments, including the United Kingdom, Singapore and Spain, have responded with a wave of online safety measures, including stronger duties for platforms and debates over age assurance. The topic was also high on the agenda at the World Economic Forum Annual Meeting 2026, where leaders discussed youth well-being in a hyper-connected world.

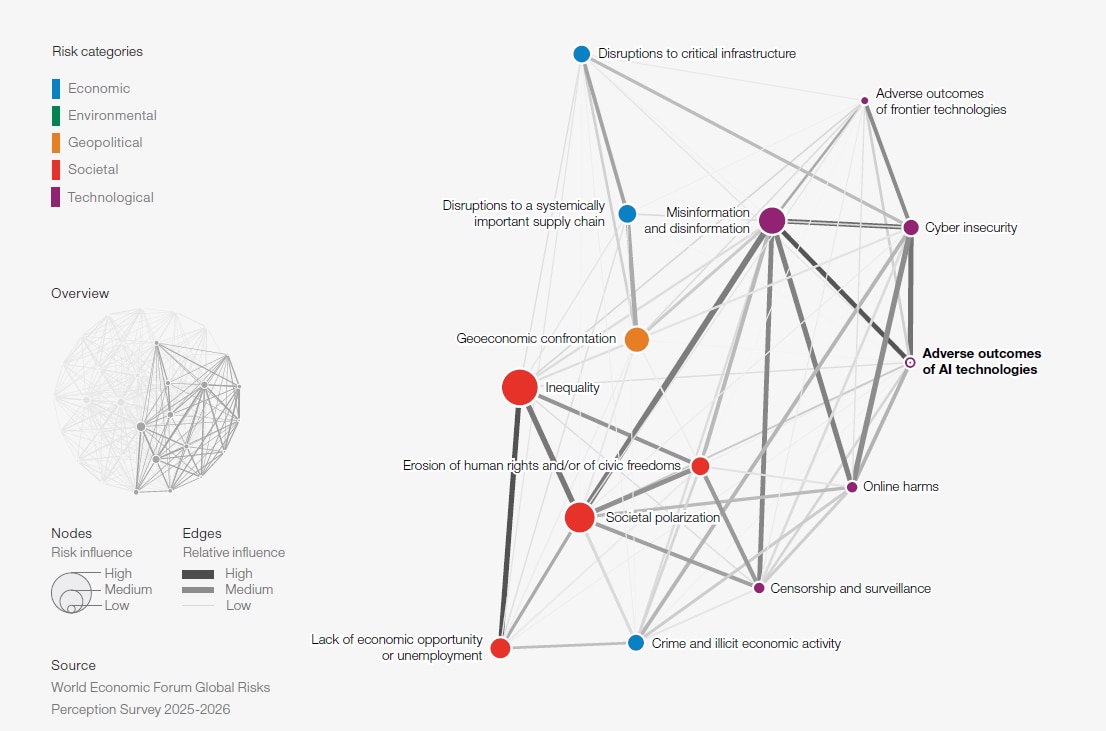

The Global Risks Report 2026 highlights escalating technology-related risks, with online harms ranked #12 over the next two years and the adverse outcomes of AI technologies ranked #5 over the next 10 years. The message is simple: Child online safety in the age of AI is a top priority.

When AI supercharges online harms

Trust and safety teams have long battled a familiar set of online harms affecting children and teens, such as cyberbullying and harassment, grooming and enticement, child sexual abuse material (CSAM), hate and extremism, scams, and exposure to self-harm or sexual content. What has changed is the speed, scale and realism AI can add.

What once required technical expertise can now be done by individuals using widely available tools. Generative AI can be used to create highly realistic imagery, lowering the barrier to producing and distributing illegal content and increasing the volume of content that moderators and investigators must review.

The scale of the shift is already visible. The Internet Watch Foundation (IWF) reported a 26,362% rise in 2025 in photorealistic AI videos of child sexual abuse, often including real and recognizable child victims, and warned that much of this material is extreme in nature. Law enforcement agencies are also responding to AI-generated CSAM distribution networks; however, synthetic CSAM can make it harder to identify real victims, trace original source material, and prioritize urgent rescue cases – complicating investigations even when the content is fully AI-generated.

Data also show sharp growth in online enticement and financial sextortion. AI-enabled “nudification” tools and deepfake nudes are turning ordinary photos into sexual imagery, intensifying harassment and humiliation, often in school contexts and targeting girls. AI makes these dynamics more scalable, from peer impersonation to explicit imagery fabrication.

AI-native risks

AI systems are designed to be fluent, fast and helpful, which are traits that can look like authority. With the risk of hallucinations, oversimplifications or reproduced bias, children may be vulnerable to over-trusting conversational AI and may miss limitations like empathy gaps or subtle inaccuracies. For children still developing literacy and critical thinking, confident-but-wrong AI outputs can misinform learning, shape beliefs, and distort decision-making, especially when AI is treated as a tutor, search engine, or trusted friend.

A major shift is the rise of AI companions that are explicitly social: friends, romantic partners, role-play characters, and pseudo-therapeutic chatbots. Emotional dependence on companion chatbots is becoming a growing risk. AI companions can be a dangerous mix for youth, especially when age verification is weak and systems are optimized for engagement.

Cases of harmful chatbot interactions have been linked to teen crises, including suicides in some extreme cases, and model changes have sometimes triggered feelings of loss akin to losing a friend. This underscores the need for stronger safeguards, effective crisis-response protocols, and clearer accountability for products used by minors, especially when those products can shape behaviour and foster strong attachments.

Beyond accelerating existing harms, AI introduces risks rooted in how these systems are designed and experienced. Children disclose sensitive details more readily when the interaction feels conversational and nonjudgmental. Unlike a web search, chat can become a diary that may contain private details, including information about mental health, location patterns, and schooling. This risk grows when AI is embedded across platforms, and when interactions may be stored, analyzed, or used to refine systems.

Preventing online harm

Children and young people are living at the frontier of the AI-driven internet, yet too often they are asked to navigate systems built without them in mind. Keeping them safe and enabling them to benefit from digital tools cannot fall to parents, teachers, or trust and safety teams alone.

Leading organizations are driving practical interventions, such as the Internet Watch Foundation supporting identification and removal of child sexual abuse material; the 5Rights Foundation promoting child-centred digital design; and the Tech Coalition bringing companies together to strengthen prevention, detection, reporting and cross-platform coordination against online child sexual exploitation.

Major platforms and technology firms are also investing in safety tooling and safer-by-default experiences such as image hashing and detection technologies, parental supervision tools, and teen protections. Meanwhile, regulators are increasingly expecting stronger duty-of-care approaches, age-appropriate design, and credible age assurance. Together, these efforts are moving toward building digital systems that are safer for children as AI becomes embedded across everyday online life.

The World Economic Forum's work includes a 2019 workshop report on Establishing Global Standards for Children and AI, and the 2021 launch of the Global Coalition for Digital Safety that advanced shared frameworks such as Global Principles on Digital Safety and a Typology of Online Harms to help align efforts across countries and industries.

A recent Forum paper, The Intervention Journey: A Roadmap to Effective Digital Safety Measures, proposes a detailed plan for how to implement digital safety interventions. As part of this, the report advises identifying risks, designing tailored safety measures, continuously evaluating effectiveness and collaborating by sharing expertise and resources.

Digital safety for children is a whole-of-society challenge that demands collaboration. Businesses, governments, civil society and academia each have a role in ensuring AI and online services are safe by design, backed by credible safeguards, clear accountability, and evidence-based approaches that keep pace with evolving risks. Just as important, children and young people must be included as partners, so that the rules, education and protections reflect the realities of how they live, learn and connect online.

Acting together can build a digital world where AI does not erode trust or well-being, but instead expands learning, creativity and opportunity for every child.

License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
