Is data a danger to the developing world?

We are witnessing an enormous increase in the capacity and use of large-scale data around the world. Where data was once expensive and slow to collect, it can now be passively and opportunistically collected at extraordinary scale, and reused in many contexts by different groups. There is considerable potential for the use of big data in the developing world, but there are also serious risks.

How are we to assess the potential harms of releasing call records in a country experiencing sectarian violence or civil war? What about data from countries where journalists are being arrested? What if vulnerable populations are exposed? The more data we use, the more it challenges our ability to assess its human effects, now and in the future. As with other scientific and technical fields, one way we understand and evaluate these effects is through the lens of ethics.

Traditionally, ethical questions have asked whether the advances we make can be justified in light of the potential harms they might inflict on the humans they involve. For example, in medical testing, there are established codes of ethics for balancing the benefits of testing a potential new cure against the risks that experimental treatments might inflict pain, suffering or even death on the recipients. The principle of “do no harm” guides these studies and interventions.

When dealing with data, scientists have often struggled to account for the risks and harms that its use might inflict. One primary concern has been privacy – the disclosure of sensitive data about individuals, either directly to the public or indirectly from anonymised data sets through computational processes of re-identification.

But big data presents a whole new series of ethical challenges. Privacy continues to be an important concept, but it is too narrow to fully encapsulate the potential harms – short and long term – of how data can be used to analyse human behaviour. Given the power to classify and quantify human life, big data brings new risks: data discrimination can cause individuals to be deprived of basic rights such as housing, employment, healthcare or education because computational judgements were made about them without any accountability or due process.

We also risk increasing power and wealth asymmetries between those in charge of the data and those who are subjected to actions based on it. In these situations, we need to consider how the value of data is shared, how communities get to participate in the construction and operation of data projects, and what policies govern its use. We also need to ensure that we build capacity and skills in the uses of data among communities where data is being harvested and analysed.

New data ethics need to be based on the principles of respect, autonomy and agency. That means giving local communities and affected individuals the opportunity to understand the intentions of those using the data, the ability to co-create those interventions and the means to audit where their data is going and who has access to it. They need to be able to opt out wherever possible.

It also means sharing our stories about the ethical dilemmas we have faced in big data projects, and how we resolved them. Currently, the National Science Foundation’s Council for Big Data, Ethics and Society is calling for case studies based on real-world examples from industry, government and academia that examine complex issues of data ethics.

We’d also like to share case studies at the World Economic Forum’s Summit on the Global Agenda 2015 in Abu Dhabi.

The Summit on the Global Agenda 2015 takes place in Abu Dhabi from 25 to 27 October.

Author: Kate Crawford is a Principal Researcher at Microsoft Research, a Visiting Professor at MIT’s Center for Civic Media, and a Senior Fellow at NYU’s Information Law Institute. She is a member of the World Economic Forum’s Global Agenda Council on Data-Driven Development.

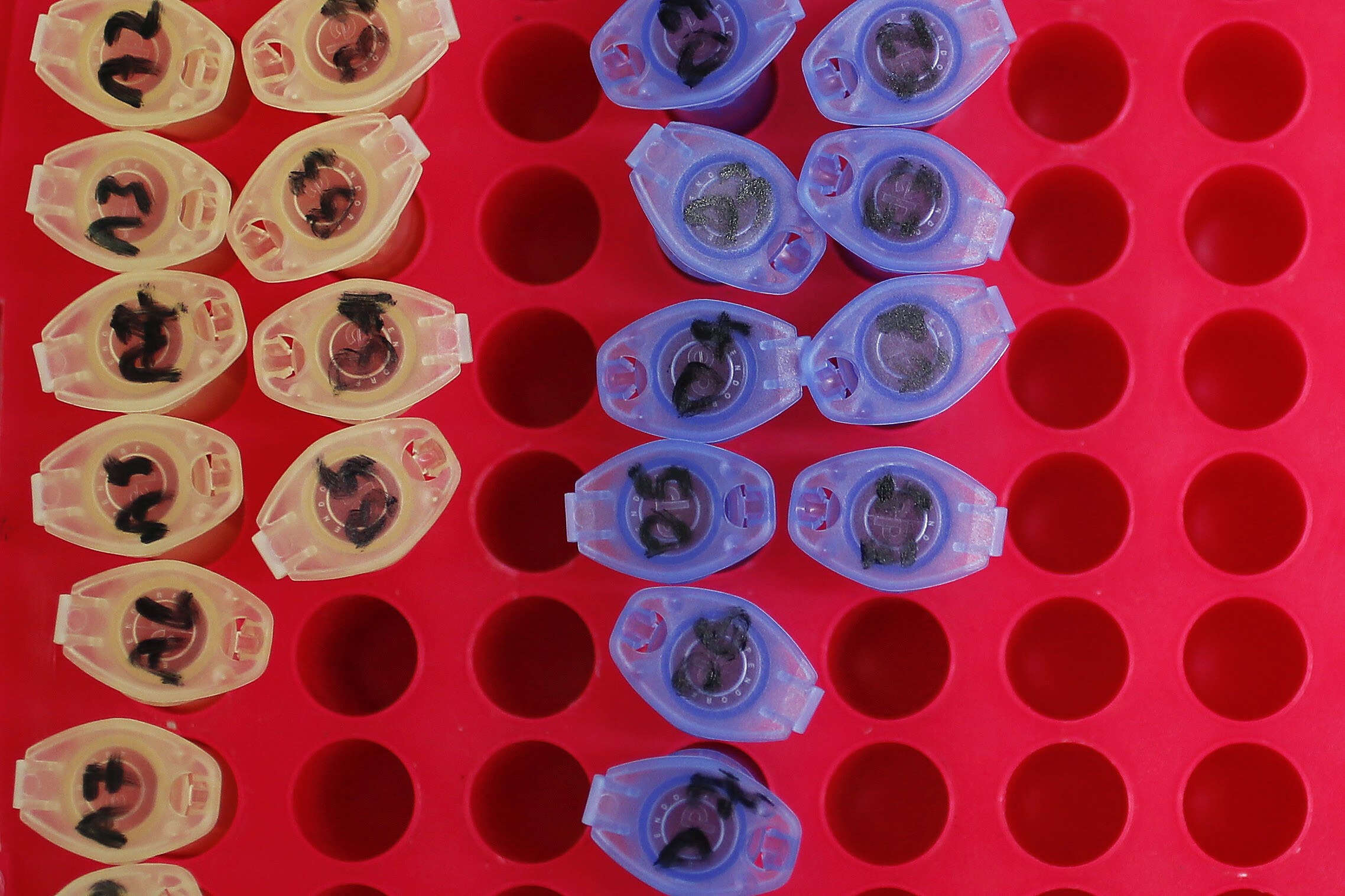

Image: REUTERS/Robert Galbraith

License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
