This brand new prosthetic arm means this amputee can play the piano again

Thanks to a new prosthetic, a musician is able to play the piano again after having his arm amputated. Image: REUTERS/Kevin Lamarque

Prosthetic functionality is no longer science fiction

When Jason Barnes was electrocuted in 2012, doctors were forced to amputate his arm from the elbow down. For a musician, losing his right arm must have been traumatic, and it must have seemed beyond any simple repair.

Two years later, Gil Weinberg, a professor at the Georgia Tech College of Design, and his lab developed a new prosthetic for Barnes that enabled him to play one of his favorite instruments: the drums. The prosthetic arm was equipped with a pair of drumsticks. Barnes controlled one himself, while the other moved on its own and improvised its movements based on the music it heard nearby.

Barnes also used to play the piano, but most prosthetic arms available today cannot yet provide the level of dexterity required to play such a complex instrument. So after creating the drumstick prosthetic, Weinberg set out to create another device that would enable Barnes to play the piano once again. He took some inspiration from Luke Skywalker’s robotic limb, a source that also inspired the Defense Advanced Research Projects Agency (DARPA).

Despite the amputation of his arm, Barnes still had the muscles required to control his fingers. The problem was that the electromyogram (EMG) sensors used in most prosthetic limbs are inaccurate, so Weinberg and his team had to find another approach.

“We tried to improve the pattern detection from EMG for Jason but couldn’t get finger-by-finger control,” explained Weinberg. That’s when the team incorporated an ultrasound machine. Working together with other Georgia Tech professors (Minoru Shinohara, Chris Fink, and Levent Degertekin), they attached an ultrasound probe to Barnes’ everyday prosthetic arm.

As explained by Georgia Tech, the muscle movements seen when Barnes tries to move his amputated ring finger are different from those seen when he tries to move any other finger. Using this information, Weinberg and his team fed the unique muscle movements for each finger into an algorithm that’s able to determine which finger Barnes wants to move. Used in combination, the ultrasound signals and machine learning can detect the movements of each finger, as well as how much force he wants to use.
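The article does not say which algorithm the Georgia Tech team used, so the following is only an illustrative sketch of the general idea of matching a muscle-movement pattern to a finger. It assumes each attempted movement has already been reduced to a short list of numbers, and it uses a simple nearest-centroid classifier as a stand-in technique. The feature values, the `TRAINING` table, and the function names are all hypothetical, and the sketch does not attempt to estimate force.

```python
import math

# Hypothetical training data: a few example feature vectors per finger.
# These numbers are invented for illustration, not real ultrasound readings.
TRAINING = {
    "thumb": [[0.9, 0.1, 0.2], [0.8, 0.2, 0.1]],
    "index": [[0.2, 0.9, 0.1], [0.1, 0.8, 0.3]],
    "ring": [[0.1, 0.2, 0.9], [0.3, 0.1, 0.8]],
}


def centroid(vectors):
    """Average a list of equal-length feature vectors, column by column."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]


def train(examples):
    """Build a model that stores one average pattern per finger."""
    return {finger: centroid(vectors) for finger, vectors in examples.items()}


def classify(model, features):
    """Return the finger whose average pattern is closest to the new reading."""
    return min(model, key=lambda finger: math.dist(model[finger], features))


model = train(TRAINING)
print(classify(model, [0.2, 0.15, 0.85]))
```

A real system would work from far richer data and a more capable model, but the shape of the problem is the same: learn what each finger's pattern looks like, then assign each new reading to the closest match.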

Now, five years after the accident, Barnes is able to play the piano again.

“It’s completely mind-blowing,” said Barnes. “This new arm allows me to do whatever grip I want, on the fly, without changing modes or pressing a button. I never thought we’d be able to do this.”

Practical applications

Weinberg believes the technology used for Barnes’ new arm can be used for more than music. One day, according to the professor, it could help people with tasks “such as bathing, grooming and feeding.” Given that it provides enough dexterity for individual fingers to hold a melody on the piano, there’s no reason why it couldn’t also enable someone to type on a keyboard, use a smartphone, or play video games.

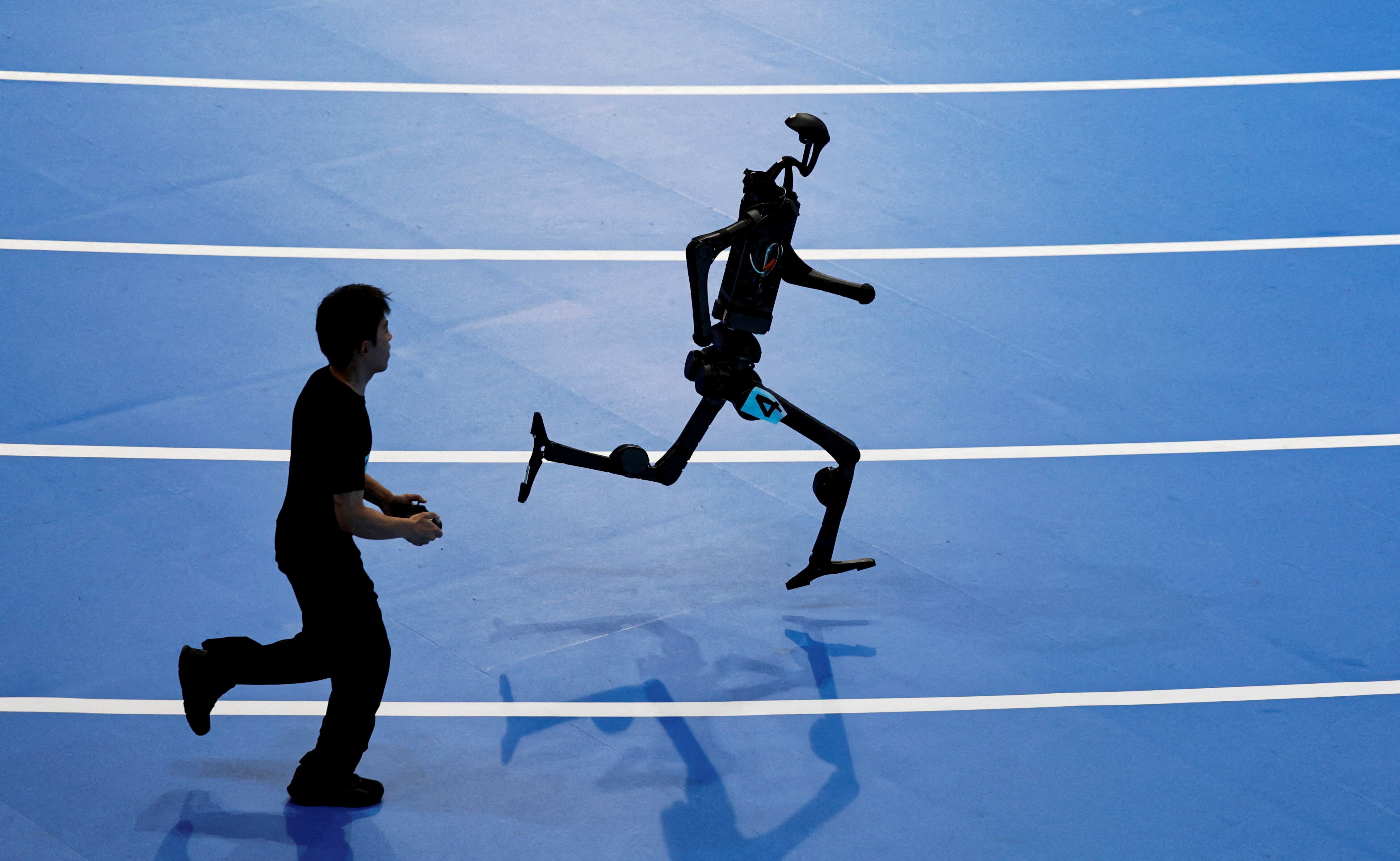

The potential doesn’t stop there, as Weinberg imagines a time in which even able-bodied people can remotely control robotic limbs by moving their fingers. This is starting to sound like the cyborg character Molly Millions from William Gibson’s “Neuromancer.”

However, given the current concerns about automation displacing millions of workers, creating jobs that allow people to still be “hands-on,” but from a distance and out of harm’s way, may ease the transition, if only a little. It could also give birth to an emergent harmony, wherein humans, artificial intelligence, and robotics work together for the betterment of all, instead of the artificial replacing the biological.

One thing is certain: robotics and AI are going to enhance our own capabilities in a multitude of ways, and we’re on the verge of embracing them completely. As Boston Dynamics CEO Marc Raibert put it: “When we have robots that can do what people and animals do, they will be incredibly useful.”


License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.

