Why we need cybersecurity of AI: ethics and responsible innovation

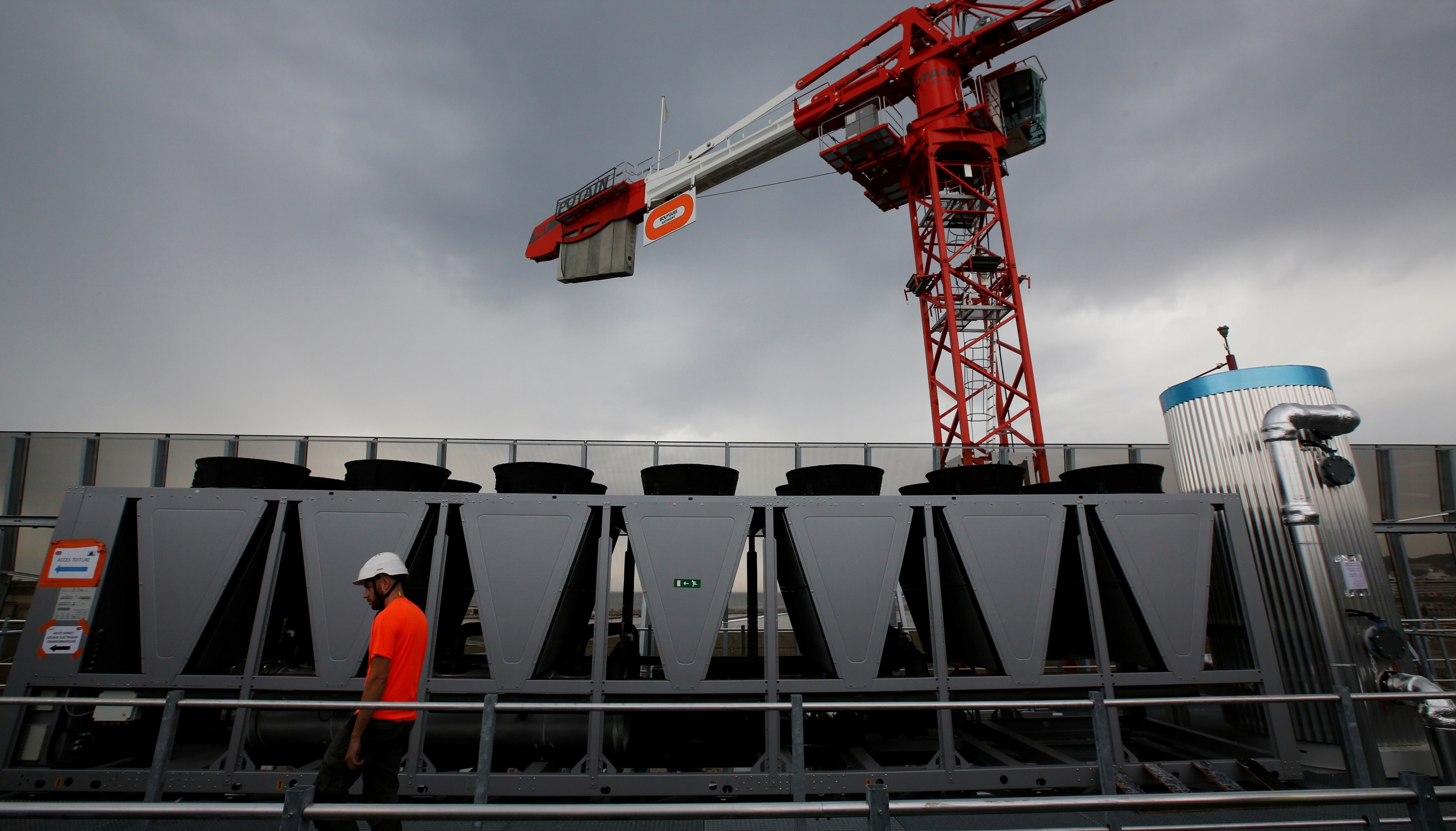

Cybersecurity needs to be applied to AI. Image: Getty Images/iStockphoto

- There are now many new AI-specific risks.

- Whenever we observe a new technology trend, we can expect harm potential to arise, along with opportunities for threat actors to monetize our use of technology.

- This blog is part of an Agenda series from the AI Governance Alliance, which advocates for responsible global design, development and deployment of inclusive AI systems.

As the use of advanced deep learning grows, AI must be deployed alongside a clear responsibility for ensuring the integrity, safety and security of these systems.

New risks

There has been much debate and discussion about new AI-specific risks. A key example is the potential for unfairness or bias in systems that use AI. Such bias can stem from the initial training data or from how we maintain the AI model as the system evolves and learns from its environment. Other concerns centre on our limited ability to scrutinize these systems, check their integrity and keep them aligned with our values. This is often because it is hard to understand how an AI system reached its outputs, a difficulty exacerbated when those outputs are unreliable.

There are also new ways of compromising organizations by attacking the AI system itself, and various vulnerabilities are already known. For example, attackers can influence learning through the datasets used for training and model evolution, producing models that are potentially unsafe, or simply misaligned and acting according to the will of threat actors.

But the vulnerability of AI systems won’t be limited to data poisoning or unsafe model shifts; we will also encounter the kinds of run-time software errors that have undermined our computing systems since their inception. If the AI environment is compromised, such errors will give attackers opportunities to take control of the local computer and use it as a platform from which to move throughout the wider business infrastructure. Ultimately, as with any other kind of digital system, the range of potential harms will be driven by the contexts of use. In the case of AI, this range is large and growing, and will certainly include systems within our critical infrastructures, where harms could even put human life at risk. AI systems will also reach into how people and societies are influenced, potentially affecting human agency and democratic processes, as well as the governance of sectors and businesses.

A growing cyber threat

Examples of AI-system vulnerability and risk are increasing, and the cybersecurity profession is actively developing models to underpin techniques for countering such threats. But a significant capability gap remains in our ability to protect our AI systems and the business processes they support.

This development is happening in a wider geopolitical and technological context. Current conflicts between nations are energizing a period of innovation and capacity-building in both offensive and defensive cyber techniques. We can expect this to filter through into other domains, such as cybercrime, in the near future. As a result, we will face greater cyber-threats from more skilled adversaries and a growing ecosystem of threat actors.

Global investment in wider technologies and business models, such as the Internet of Things and cloud services, will create a significant opportunity for developing AI capability, both through access to algorithms, models and training datasets, and through AI-as-a-service offerings and solutions that make the technology more accessible and easier to use. That same accessibility extends to attackers: those who wish to attack our systems and economies are going to be AI-enabled.

We might currently view ensuring that a system's recommended decisions align with our ethics or laws as a separate issue from protecting AI models against deliberate and covert manipulation by threat actors. In time, however, we can expect attackers to compromise AI systems specifically so that they begin to produce outputs beyond acceptable and ethical practice, perhaps to extort ransom payments or to release a tainted model or dataset. Such risks are not confined to our imagination or to science fiction; they are a direct extension of the threats we observe daily in cyberspace, in our businesses and throughout our supply chains. Whenever we observe a new technology trend, we can expect harm potential to arise, along with opportunities for threat actors to monetize our use of technology.

The need to support effective oversight

The level of threat we face is growing, and our dependence on digital technologies and services is creating systemic cyber risk. The aggregate of this risk remains partially hidden and difficult to predict and quantify. As we use AI technologies to inspire and deliver new generations of solutions to some of humankind’s most pressing challenges, there is surely a fiduciary, as well as an ethical, responsibility to ensure that our investments in this technology are not exposed to an unacceptable or unmanageable level of cyber risk. We can expect the cybersecurity profession to deliver ideas, practices and tools, but only if we ensure that there is market demand for them.

Do we know what we need? Business will be at the centre of the solution, and we will need leaders to help move us towards a safe and secure AI-enabled future. An obvious starting point for senior leadership is to ensure that existing risk controls, those that are invested in, measured and performing well, can extend to an enterprise model that uses AI technology. Our insurance providers, investors, customers and regulators will be looking for this assurance; we need operational controls that both enable oversight and defend effectively against motivated threats.

This is non-trivial. There are gaps in existing practice that the use of AI will exacerbate, because specialized cybersecurity solutions for AI are not yet available.

• The effectiveness of cybersecurity controls, and how best to orchestrate them, are not well understood. This means cyber-risk exposure calculations may be inaccurate.

• Senior leadership often lacks digital intuition, which can result in a weak coupling between cybersecurity strategy and the wider business mission.

• Weak scrutiny in the main boardroom means a higher chance of surprise risks being realized, and poor preparation for costly cyber-incidents; similar challenges exist for those charged with oversight of critical national infrastructures or sectors.


The lack of AI-specialized cybersecurity solutions

One example (there are many more) is threat monitoring and detection. We have never been able to prevent all threats from entering our systems. Even if we could ensure that no vulnerable technologies presented a viable attack surface for external threats (something any security professional would know not to assume), we would always face people with valid access who attack us or sell their access credentials for third parties to use. This makes the ability to detect a compromised system essential; otherwise, we could unknowingly be using compromised AI to help make decisions that affect people’s lives and livelihoods, our economies and our critical infrastructures.

We do not currently have well-developed threat detection for AI systems. That is an unacceptable situation. How can leaders of nations, and of businesses large and small, exercise effective oversight and strategy if they cannot know when their systems have lost integrity?

Even once we can detect an attack on an AI system, we will need to deploy this capability alongside all our other operational cybersecurity functions. This will require decisions on how to prioritize the concerns raised by tools and analysts; we simply don’t have the capacity to deal with every possible threat. A key aspect of any mature cyber-defence is the ability to be threat-led, directing our limited resources towards the risks judged most harmful. Where we use AI, this means we also need access to specialized threat intelligence on AI-enabled threats and on actors targeting our AI-enabled businesses. Crucial to success will be sharing experiences and threat insights with peers; we need to develop foresight, anticipate threats, and adapt our security postures accordingly to maintain cyber-resilience.

The importance of leadership

We need to promote organizational cultures that can address the concerns being raised about the use of generative AI. A responsible approach will be open and transparent about its use, support communication with customers and stakeholders, promote care and make efforts to ensure that AI systems are strongly aligned with our values. We may even need to consider fallback solutions in case we cannot easily roll back the learning after detecting an attack; these might include the ability to switch the system off.

Leaders of businesses using AI must insist that operational security capabilities are deployed. Where risks are potentially significant, they may even need specialized risk assessments and residual cyber-value-at-risk calculations. Commissioning a table-top cyber-risk exercise for senior leadership that incorporates the compromise of AI technology and wider organizational business processes is essential for 2024.

In conclusion, cybersecure AI systems and businesses will require unparalleled levels of dynamism, pace and adaptability. Strong leadership is important, as without it we cannot achieve the organizational pace or the momentum needed for adaptation and resilience. Our ability to pivot and evolve our cyber-resilience depends entirely upon the strength of our core, a core whose DNA is created and evolved by leadership.

The AI Governance Alliance, comprising over 230 members, is committed to advocating for responsible global design, development and deployment of inclusive AI systems. It brings together experts from diverse sectors, uniting to shape the governance and responsible advancement of artificial intelligence. Dive into the cutting edge of AI thought leadership with our blog series, curated by esteemed members of the AI Governance Alliance Steering Committee as we navigate the complex challenges and opportunities in the ever-evolving AI landscape.


License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.

