How COVID-19 revealed 3 critical AI procurement blindspots that could put lives at risk

Bridging procurement governance gaps will be key to harnessing AI’s potential without compromising privacy or public health. Image: Photo by Pietro Jeng on Unsplash

- The rush to procure AI technologies during COVID-19 has revealed key governance gaps.

- Such gaps, if not addressed, could lead to a range of problems, from incorrect diagnoses to eroded public trust.

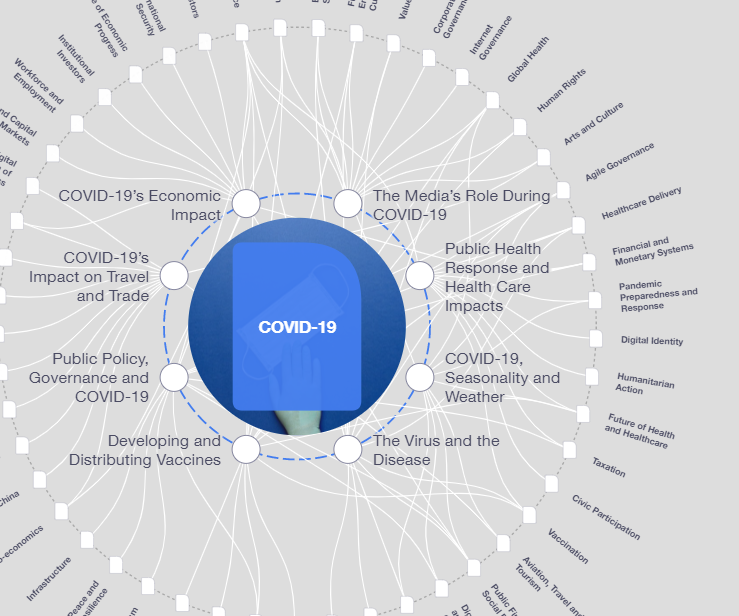

COVID-19’s global impact has shown the role interconnectedness plays in international cooperation and how outdated technologies are obstacles to effective policy making.

As a result, many entities and governments have found themselves rushing to catch up – identifying, evaluating, and procuring reliable solutions powered by artificial intelligence (AI).

Finding the right AI solutions is an urgent task complicated by the obligation of the public sector to ensure a process guided by fairness, accountability, and transparency (automated processes are especially vulnerable to exacerbating systemic racism and widening existing inequities).

The steps governments have taken to procure AI have already exposed critical governance gaps. Bridging those gaps will be key to harnessing AI’s transformative potential and guiding economies through a recovery that does not sacrifice privacy or compromise public health.


Here are a few examples of governance opportunities in AI procurement and use:

Chatbots

Many governments have integrated chatbots into their websites to keep the volume of health questions from the public from overwhelming their resources. By answering common questions and escalating the more complicated ones, chatbots help agencies handle inquiries more quickly and effectively, saving significant staff time and better meeting citizens' needs.

Still, many of these technologies lack tools and processes to minimize risks. Measures are typically not in place to ensure information accuracy, protect privacy, or train models agile enough to track the most current knowledge about this rapidly changing disease. This can result in ineffective information dissemination, which, at best, does a disservice to the public. At worst, it leads to a misdiagnosis of symptoms, potentially putting thousands or more lives at risk. Governments must put plans into action that help resolve questions over whose voice to amplify and how accurate the shared information is.

Diagnostics

Healthcare facilities around the world have been turning to AI systems to spot infections either through people’s voices or with chest x-rays. These tools have significantly helped overburdened hospitals manage the drastic increase in patients. Yet there is little guidance on how to evaluate how well the tools work. As a result, facilities risk making matters worse by incorrectly diagnosing patients.

Governance experts have urged hospitals not to weaken their regulatory protocols and to avoid long-term contracts without doing their due diligence. There is an immediate need for policy frameworks that operationalize the assessment of AI systems before they are put to use. One recent report analysing dozens of computer models built to diagnose and treat COVID-19 found that all of them had been trained with unfit and insufficient data. Such assessments should force officials to ask critical questions about risk-mitigation processes, performance and accuracy, and should ensure robust external oversight of systems by an independent third party.

Tracking the spread of the virus

Dozens of governments have proposed or deployed tracking tools that monitor the virus’ spread person to person. From Asia to Europe, a number of public sector entities have teamed up with the private sector to use AI-based services to monitor the spread of the virus. This includes an array of contact tracing apps that aim to accomplish what public officials have long done manually by interviewing those infected and one-by-one tracking down their recent contacts.

The privacy concerns over how this data may be used in different contexts and by whom suggest that asking the right questions now can help anticipate the inevitable data protection issues later. Establishing processes for thorough documentation can help to assess problems when they do occur and ideally help avoid the harms from taking place. One form of response gaining traction has been the promotion of internal evaluation tools in which companies embrace the multi-stakeholder approach to design an internal assessment questionnaire. Examples include IBM Research’s FactSheets, Google’s framework for internal algorithmic auditing, and Microsoft’s list of AI Principles and the tools to apply them internally. Over time, and if scaled appropriately, these approaches might ensure the responsible deployment of AI in a variety of applications.

Looking ahead

Even before COVID-19, calls for regulation of AI had increased dramatically, and the number of AI ethics principles had proliferated. COVID-19 has accelerated the need to operationalize these principles. To help practitioners navigate these challenges, the World Economic Forum’s AI and Machine Learning Platform created the Procurement in a Box package, which aims to unlock public sector adoption of AI through government procurement. The tools released to the general public this week were developed after nearly two years of consultations with procurement officers and private sector companies, several rounds of solicitations for comment on drafts, comprehensive workshops, individual interviews and international pilots.

The Procurement in a Box effort seeks to operationalize many norm-setting proposals by helping shape the ethical and responsible use of technology. It prioritizes the practical application of these norms in multiple ways, including universalizing the commonalities across different jurisdictions while respecting the differences in law and culture, as well as stages of development of their AI innovation ecosystems.

Such tools will be increasingly relevant for countries globally at various levels, and timely in their utility. The Forum’s AI procurement initiative has notably taken shape at a time of flux, when the social contract of trust between the government, its citizens, and their industries is in suspension. The COVID-19 global crisis has underscored the need for responsibility in innovation and the ethical use of technology. As we embark on this Great Reset, the rules and standards of governance norms are not yet in place. The window of opportunity is brief to establish a set of actionable procurement guidelines that enable good decision-making for the future.


License and Republishing

World Economic Forum articles may be republished in accordance with the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Public License, and in accordance with our Terms of Use.

The views expressed in this article are those of the author alone and not the World Economic Forum.
